
| pipeline_tag | tags | base_model |
|---|---|---|
| text-generation | | |

This is an abliterated version of OpenThinker-Agent-v1, made using Heretic v1.0.1.

The quantizations were created using an imatrix merged from combined_en_medium and harmful.txt to leverage the abliterated nature of the model.

Performance

| Metric | This model | Original model |
|---|---|---|
| Refusals | 3/100 | 99/100 |

Analysis against the original model:

Detailed Analysis:

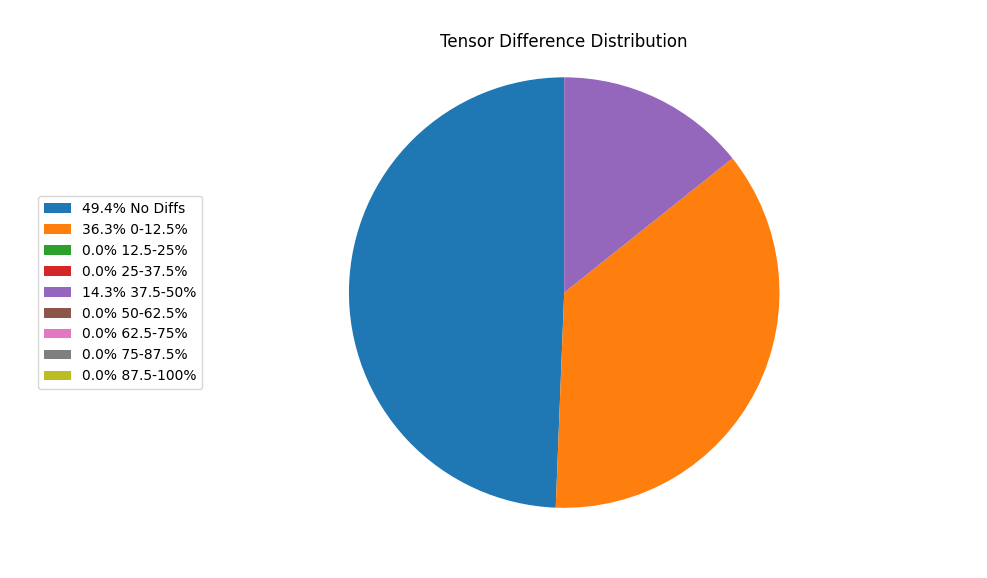

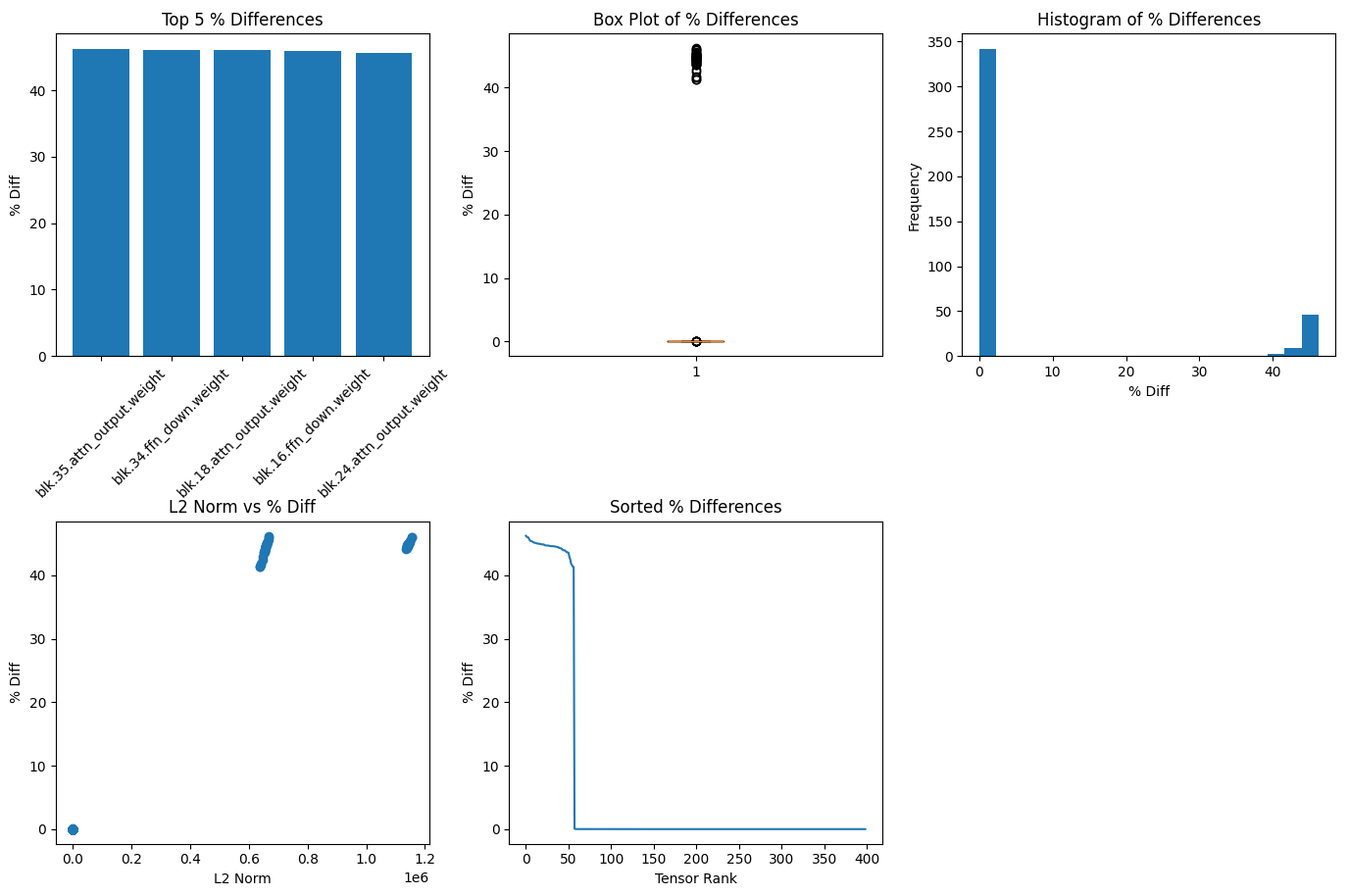

- Total Tensors: 399

- Tensors with Diffs: 202 (50.6%)

- Average % Diff: 6.35%

- Median % Diff: 0.00%

- Min/Max % Diff: 0.00% / 46.22%

- Std Dev % Diff: 15.56%

- Skewness % Diff: 2.04

- Avg L2 Norm: 125405.56

- Tensors with >5% diff: 57

- Top differences:
  - blk.35.attn_output.weight ((4096, 8192), L2: 668013.65): 46.22%
  - blk.34.ffn_down.weight ((4096, 24576), L2: 1155843.86): 46.07%
  - blk.18.attn_output.weight ((4096, 8192), L2: 667142.18): 46.00%
  - blk.16.ffn_down.weight ((4096, 24576), L2: 1154713.83): 45.95%
  - blk.24.attn_output.weight ((4096, 8192), L2: 666019.48): 45.66%

File Comparison:

- File 1: Avg Abs Value = 77.9178, Deviation Score = 0.0991
- File 2: Avg Abs Value = 77.9111, Deviation Score = 0.0991
- Positive Diffs (File 1 > File 2): 143
- Negative Diffs (File 2 > File 1): 59
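Summary figures like the ones in this analysis can be derived from a list of per-tensor percentage differences. The following is a minimal sketch, not the tool that produced the numbers above: this card does not state how a tensor's "% Diff" is defined or whether the standard deviation is the sample or population form, so `percent_diffs`, `summarize_percent_diffs`, and the toy input are all illustrative assumptions.

```python
import statistics


def summarize_percent_diffs(percent_diffs, threshold=5.0):
    """Summarize per-tensor percentage differences.

    `percent_diffs` is a hypothetical list with one value per tensor; how
    each value is computed from the two model files is not specified in
    this card.
    """
    n = len(percent_diffs)
    mean = statistics.fmean(percent_diffs)
    # Population standard deviation (an assumption; the sample form would
    # divide by n - 1 instead).
    std = statistics.pstdev(percent_diffs)
    # Population skewness: third central moment divided by std cubed.
    if std > 0:
        skew = sum((x - mean) ** 3 for x in percent_diffs) / n / std**3
    else:
        skew = 0.0
    changed = sum(1 for x in percent_diffs if x > 0)
    return {
        "total": n,
        "changed": changed,
        "changed_pct": 100.0 * changed / n,
        "mean": mean,
        "median": statistics.median(percent_diffs),
        "min": min(percent_diffs),
        "max": max(percent_diffs),
        "std": std,
        "skewness": skew,
        "above_threshold": sum(1 for x in percent_diffs if x > threshold),
    }


# Toy input, NOT taken from this model: mostly unchanged tensors, a few large diffs.
example = [0.0, 0.0, 0.0, 0.0, 0.0, 0.0, 2.0, 46.0]
summary = summarize_percent_diffs(example)
```

A median near zero combined with positive skewness, as reported above, is the pattern one would expect when many tensors are unchanged or barely changed and a smaller group carries large modifications.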

BibTeX entry and citation info

@misc{heretic,

author = {Weidmann, Philipp Emanuel},

title = {Heretic: Fully automatic censorship removal for language models},

year = {2025},

publisher = {GitHub},

journal = {GitHub repository},

howpublished = {\url{https://github.com/p-e-w/heretic}}

}

Original model card:

Project | SFT dataset | RL dataset | SFT model | RL model

OpenThinker-Agent-v1

OpenThoughts-Agent is an open-source effort to curate the best datasets for training agents. Our first release includes datasets, models and our research codebase.

OpenThinker-Agent-v1 is a model trained for agentic tasks such as Terminal-Bench 2.0 and SWE-Bench.

The OpenThinker-Agent-v1 model is post-trained from Qwen/Qwen3-8B. It is SFT-ed on the OpenThoughts-Agent-v1-SFT dataset, then RL-ed on the OpenThoughts-Agent-v1-RL dataset.

This model is the final model after both SFT and RL. For the model after the SFT stage only, see OpenThinker-Agent-v1-SFT.

- Homepage: https://www.openthoughts.ai/blog/agent

- Repository: https://github.com/open-thoughts/OpenThoughts-Agent

OpenThinker-Agent-v1 Model Performance

Our OpenThinker-Agent-v1 model is the state-of-the-art model at its scale on agent benchmarks.

| Model | Harness | Terminal-Bench 2.0 | SWE-Bench Verified | OpenThoughts-TB-Dev |
|---|---|---|---|---|
| Qwen3-8B | Terminus-2 | 0.0 | 0.7 | 5.7 |
| OpenThinker-Agent-v1 | Terminus-2 | 4.9 | 15.7 | 17.3 |
| Qwen3-32B | Terminus-2 | 1.9 | 5.7 | 10.2 |
| Qwen/Qwen3-Coder-30B-A3B-Instruct | OpenHands | 10.1 | 49.2 | 24.5 |
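To make the comparison concrete, the score differences between rows that share the Terminus-2 harness can be computed directly from the table. This is a small illustrative sketch: the helper name `gains_over` is hypothetical, and the differences are expressed in the table's own units, which the table does not state.

```python
# Scores copied from the table above (Terminus-2 harness rows only).
scores = {
    "Qwen3-8B": {
        "Terminal-Bench 2.0": 0.0,
        "SWE-Bench Verified": 0.7,
        "OpenThoughts-TB-Dev": 5.7,
    },
    "OpenThinker-Agent-v1": {
        "Terminal-Bench 2.0": 4.9,
        "SWE-Bench Verified": 15.7,
        "OpenThoughts-TB-Dev": 17.3,
    },
    "Qwen3-32B": {
        "Terminal-Bench 2.0": 1.9,
        "SWE-Bench Verified": 5.7,
        "OpenThoughts-TB-Dev": 10.2,
    },
}


def gains_over(base, model):
    """Per-benchmark score difference (model minus base), rounded to one decimal."""
    return {
        bench: round(scores[model][bench] - scores[base][bench], 1)
        for bench in scores[base]
    }


gains_vs_base = gains_over("Qwen3-8B", "OpenThinker-Agent-v1")
gains_vs_32b = gains_over("Qwen3-32B", "OpenThinker-Agent-v1")
```

The Qwen3-Coder-30B-A3B-Instruct row is left out because it was evaluated with a different harness (OpenHands), so its scores are not directly comparable in this way.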

Data

We built OpenThinker-Agent-v1 in two stages: supervised fine-tuning, followed by reinforcement learning. Each stage required its own data pipeline: SFT traces from strong teacher agents completing tasks, and RL tasks (instructions, environments, and verifiers).

OpenThoughts-Agent-v1-SFT is an SFT trace dataset containing approximately 15,200 traces drawn from two different data sources we curate:

- nl2bash: Simple synthetically generated tasks where the agent has to format shell commands effectively

- InferredBugs: A set of bugs in C# and Java collected by Microsoft that we turned into tasks

OpenThoughts-Agent-v1-RL is an RL dataset containing ~720 tasks drawn from the nl2bash verified dataset.

To stabilize training, we built a three-stage filtration pipeline that prunes tasks before they ever hit the learner:

- Bad verifiers filter: drop tasks with flaky or excessively slow verifiers.

- Environment stability: remove tasks whose containers take too long to build or tear down.

- Optional difficulty filter: discard tasks that even a strong model (GPT-5 Codex) cannot solve in a single pass.
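The filtration stages described above can be sketched as a sequence of checks applied to each task. This is only an illustration under stated assumptions, not the project's actual pipeline: the `Task` fields, the `filter_tasks` function, and the time thresholds are all hypothetical, since the real task schema and cutoffs are not given here.

```python
from dataclasses import dataclass


@dataclass
class Task:
    # All fields are hypothetical stand-ins for measurements the real
    # pipeline would collect; the actual task schema is not described here.
    name: str
    verifier_flaky: bool
    verifier_seconds: float
    build_seconds: float
    teardown_seconds: float
    solved_by_strong_model: bool


def filter_tasks(tasks, max_verifier_s=60.0, max_env_s=300.0,
                 use_difficulty_filter=True):
    """Keep only tasks that pass every enabled filter stage.

    The threshold defaults are illustrative assumptions.
    """
    kept = []
    for t in tasks:
        # Stage 1: drop tasks with flaky or excessively slow verifiers.
        if t.verifier_flaky or t.verifier_seconds > max_verifier_s:
            continue
        # Stage 2: drop tasks whose containers build or tear down too slowly.
        if t.build_seconds > max_env_s or t.teardown_seconds > max_env_s:
            continue
        # Stage 3 (optional): drop tasks a strong model could not solve.
        if use_difficulty_filter and not t.solved_by_strong_model:
            continue
        kept.append(t)
    return kept


# Toy tasks, not taken from the dataset.
tasks = [
    Task("ok", False, 5.0, 30.0, 10.0, True),
    Task("flaky", True, 5.0, 30.0, 10.0, True),
    Task("slow-build", False, 5.0, 900.0, 10.0, True),
    Task("too-hard", False, 5.0, 30.0, 10.0, False),
]
kept_names = [t.name for t in filter_tasks(tasks)]
kept_without_difficulty = [
    t.name for t in filter_tasks(tasks, use_difficulty_filter=False)
]
```

Running the cheap checks first means a task rejected for a bad verifier or unstable environment never reaches the difficulty stage, which in practice would be the most expensive one because it requires a model rollout.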

Links

- 🌐 OpenThoughts-Agent project page

- 💻 OpenThoughts-Agent GitHub repository

- 🧠 OpenThoughts-Agent-v1-SFT dataset

- 🧠 OpenThoughts-Agent-v1-RL dataset

- 🧠 OpenThoughts-TB-dev dataset

- 🤖 OpenThinker-Agent-v1 model

- 🤖 OpenThinker-Agent-v1-SFT model

Citation

@misc{openthoughts-agent,

author = {Team, OpenThoughts-Agent},

month = Dec,

title = {{OpenThoughts-Agent}},

howpublished = {https://open-thoughts.ai/agent},

year = {2025}

}