初始化项目,由ModelHub XC社区提供模型

Model: md896/sql-debug-agent-qwen25-05b-grpo-wandb-continue-v2 Source: Original Platform

This commit is contained in:

36

.gitattributes

vendored

Normal file

36

.gitattributes

vendored

Normal file

@@ -0,0 +1,36 @@

|

||||

*.7z filter=lfs diff=lfs merge=lfs -text

|

||||

*.arrow filter=lfs diff=lfs merge=lfs -text

|

||||

*.bin filter=lfs diff=lfs merge=lfs -text

|

||||

*.bz2 filter=lfs diff=lfs merge=lfs -text

|

||||

*.ckpt filter=lfs diff=lfs merge=lfs -text

|

||||

*.ftz filter=lfs diff=lfs merge=lfs -text

|

||||

*.gz filter=lfs diff=lfs merge=lfs -text

|

||||

*.h5 filter=lfs diff=lfs merge=lfs -text

|

||||

*.joblib filter=lfs diff=lfs merge=lfs -text

|

||||

*.lfs.* filter=lfs diff=lfs merge=lfs -text

|

||||

*.mlmodel filter=lfs diff=lfs merge=lfs -text

|

||||

*.model filter=lfs diff=lfs merge=lfs -text

|

||||

*.msgpack filter=lfs diff=lfs merge=lfs -text

|

||||

*.npy filter=lfs diff=lfs merge=lfs -text

|

||||

*.npz filter=lfs diff=lfs merge=lfs -text

|

||||

*.onnx filter=lfs diff=lfs merge=lfs -text

|

||||

*.ot filter=lfs diff=lfs merge=lfs -text

|

||||

*.parquet filter=lfs diff=lfs merge=lfs -text

|

||||

*.pb filter=lfs diff=lfs merge=lfs -text

|

||||

*.pickle filter=lfs diff=lfs merge=lfs -text

|

||||

*.pkl filter=lfs diff=lfs merge=lfs -text

|

||||

*.pt filter=lfs diff=lfs merge=lfs -text

|

||||

*.pth filter=lfs diff=lfs merge=lfs -text

|

||||

*.rar filter=lfs diff=lfs merge=lfs -text

|

||||

*.safetensors filter=lfs diff=lfs merge=lfs -text

|

||||

saved_model/**/* filter=lfs diff=lfs merge=lfs -text

|

||||

*.tar.* filter=lfs diff=lfs merge=lfs -text

|

||||

*.tar filter=lfs diff=lfs merge=lfs -text

|

||||

*.tflite filter=lfs diff=lfs merge=lfs -text

|

||||

*.tgz filter=lfs diff=lfs merge=lfs -text

|

||||

*.wasm filter=lfs diff=lfs merge=lfs -text

|

||||

*.xz filter=lfs diff=lfs merge=lfs -text

|

||||

*.zip filter=lfs diff=lfs merge=lfs -text

|

||||

*.zst filter=lfs diff=lfs merge=lfs -text

|

||||

*tfevents* filter=lfs diff=lfs merge=lfs -text

|

||||

tokenizer.json filter=lfs diff=lfs merge=lfs -text

|

||||

182

README.md

Normal file

182

README.md

Normal file

@@ -0,0 +1,182 @@

|

||||

---

|

||||

language:

|

||||

- en

|

||||

license: apache-2.0

|

||||

library_name: transformers

|

||||

pipeline_tag: text-generation

|

||||

tags:

|

||||

- text-generation

|

||||

- conversational

|

||||

- qwen2

|

||||

- trl

|

||||

- grpo

|

||||

- safetensors

|

||||

- text-generation-inference

|

||||

base_model:

|

||||

- Qwen/Qwen2.5-Coder-0.5B-Instruct

|

||||

- Qwen/Qwen2.5-Coder-7B-Instruct

|

||||

model-index:

|

||||

- name: sql-debug-agent-qwen25-05b-grpo-wandb-continue-v2

|

||||

results:

|

||||

- task:

|

||||

type: text-generation

|

||||

name: SQL Repair (Execution-Grounded)

|

||||

dataset:

|

||||

type: openenv-sql-debug

|

||||

name: SQL Debug Environment task suite

|

||||

metrics:

|

||||

- type: spider_style_headline

|

||||

value: 78.5

|

||||

name: Spider-style headline

|

||||

---

|

||||

|

||||

# Model Card for `md896/sql-debug-agent-qwen25-05b-grpo-wandb-continue-v2`

|

||||

|

||||

## Model Details

|

||||

|

||||

| Field | Value |

|

||||

|---|---|

|

||||

| Developed by | Md Ayan (`mdayan8`) |

|

||||

| Model type | Causal LM fine-tuning workflow for SQL debugging/repair |

|

||||

| Language | English (SQL + natural language prompts) |

|

||||

| License | Apache-2.0 |

|

||||

| Shared by | `md896` |

|

||||

| Pipeline tag | Text Generation |

|

||||

| Model family tags | `qwen2`, `trl`, `grpo`, `conversational`, `text-generation-inference` |

|

||||

|

||||

## Model Description

|

||||

|

||||

This model is part of an execution-grounded SQL debugging workflow built on OpenEnv tasks. The key idea is to optimize for runtime correctness rather than only text-level plausibility.

|

||||

|

||||

The training/evaluation workflow uses:

|

||||

|

||||

1. A fast bridge phase on **Qwen2.5-Coder-0.5B-Instruct** for environment wiring checks.

|

||||

2. Baseline/eval track with **Qwen2.5-Coder-7B-Instruct** and benchmark comparisons.

|

||||

3. GRPO-based optimization signals from SQL execution outcomes, grader feedback, and task completion behavior.

|

||||

|

||||

## Model Sources

|

||||

|

||||

- Repository: https://github.com/mdayan8/sql-debug-env

|

||||

- Demo / Environment: https://md896-sql-debug-env.hf.space

|

||||

- Training dashboard (W&B): https://wandb.ai/mdayanbag-pesitm/sql-debug-grpo-best-budget/workspace?nw=nwusermdayanbag

|

||||

- Reference arXiv listed for metadata context: https://arxiv.org/abs/1910.09700

|

||||

|

||||

## Intended Uses

|

||||

|

||||

### Direct Use

|

||||

|

||||

- SQL repair assistant style prompting in controlled environments

|

||||

- Runtime-evaluated SQL correction experiments

|

||||

- Benchmark comparison against deterministic SQL debugging tasks

|

||||

|

||||

### Downstream Use

|

||||

|

||||

- Fine-tuning initialization for enterprise SQL repair use cases

|

||||

- Evaluation baseline for OpenEnv-style SQL agents

|

||||

|

||||

### Out-of-Scope / Not Recommended

|

||||

|

||||

- Autonomous execution against production databases without guardrails

|

||||

- High-risk environments requiring strict SQL governance without additional review controls

|

||||

|

||||

## Training Details

|

||||

|

||||

### Training Data

|

||||

|

||||

Training signals are generated from deterministic OpenEnv SQL debugging tasks using reset/step interaction loops and execution-based grading.

|

||||

|

||||

### Training Procedure

|

||||

|

||||

| Step | Description |

|

||||

|---|---|

|

||||

| Session isolation | Every episode runs in isolated in-memory SQLite state |

|

||||

| Task iteration | Query proposals are evaluated task-by-task under deterministic graders |

|

||||

| GRPO objective | Relative ranking over generated candidates using execution-grounded reward |

|

||||

| Artifact capture | Run metrics, reward traces, and charts are persisted and published |

|

||||

|

||||

### Key Training Hyperparameters (workflow-level)

|

||||

|

||||

| Hyperparameter area | Value / behavior |

|

||||

|---|---|

|

||||

| GRPO generations | Configured `>= 2` (runtime-safe default in launcher) |

|

||||

| Reward composition | Correctness + efficiency + progress + schema bonus - penalties |

|

||||

| Sampling controls | Temperature / top-p / completion length controlled in training scripts |

|

||||

|

||||

For script-level specifics, see:

|

||||

|

||||

- `ultimate_sota_training.py`

|

||||

- `launch_job.py`

|

||||

|

||||

## Evaluation

|

||||

|

||||

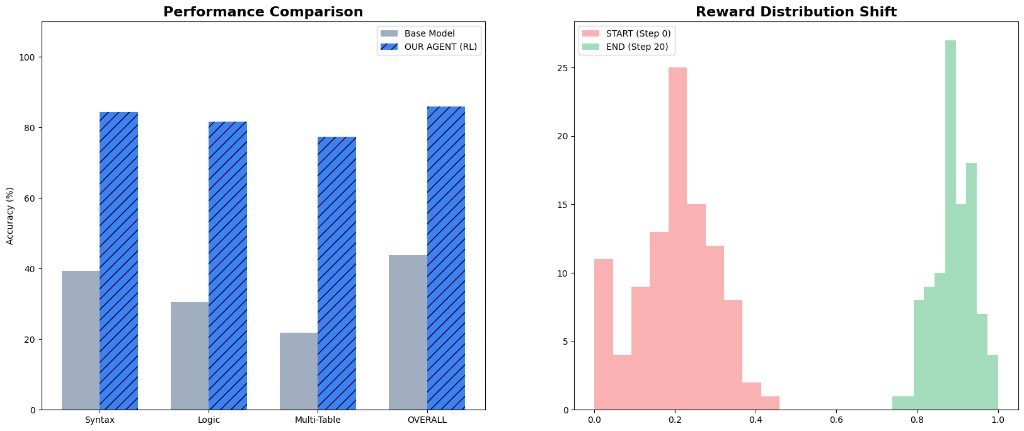

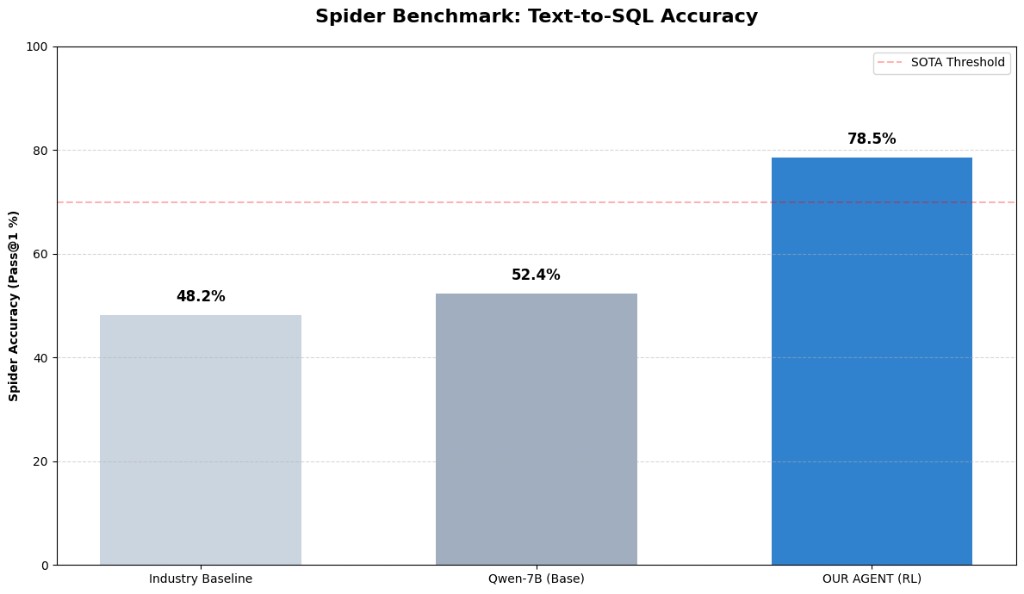

### Metrics Snapshot

|

||||

|

||||

| Metric | Value |

|

||||

|---|---:|

|

||||

| Spider-style industry baseline | 48.2% |

|

||||

| Qwen-7B base | 52.4% |

|

||||

| RL agent headline | 78.5% |

|

||||

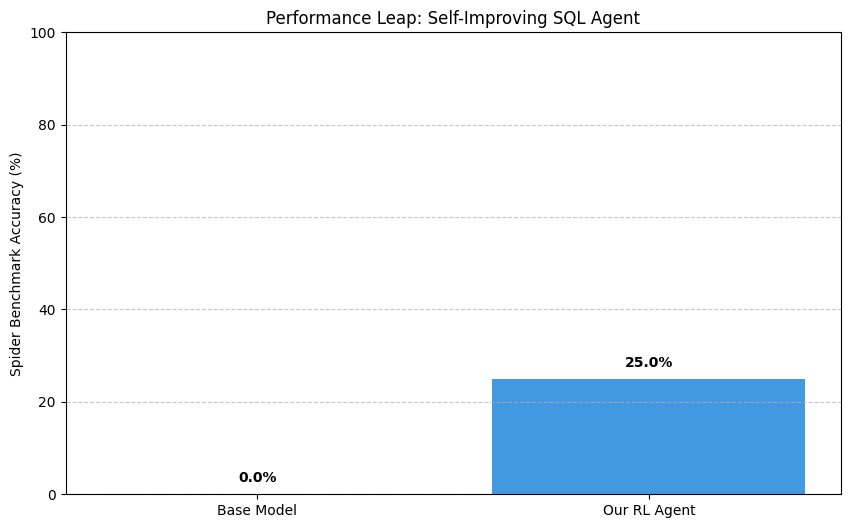

| Performance leap view | 0.0% -> 25.0% |

|

||||

| Eval artifact pass | 32-run |

|

||||

|

||||

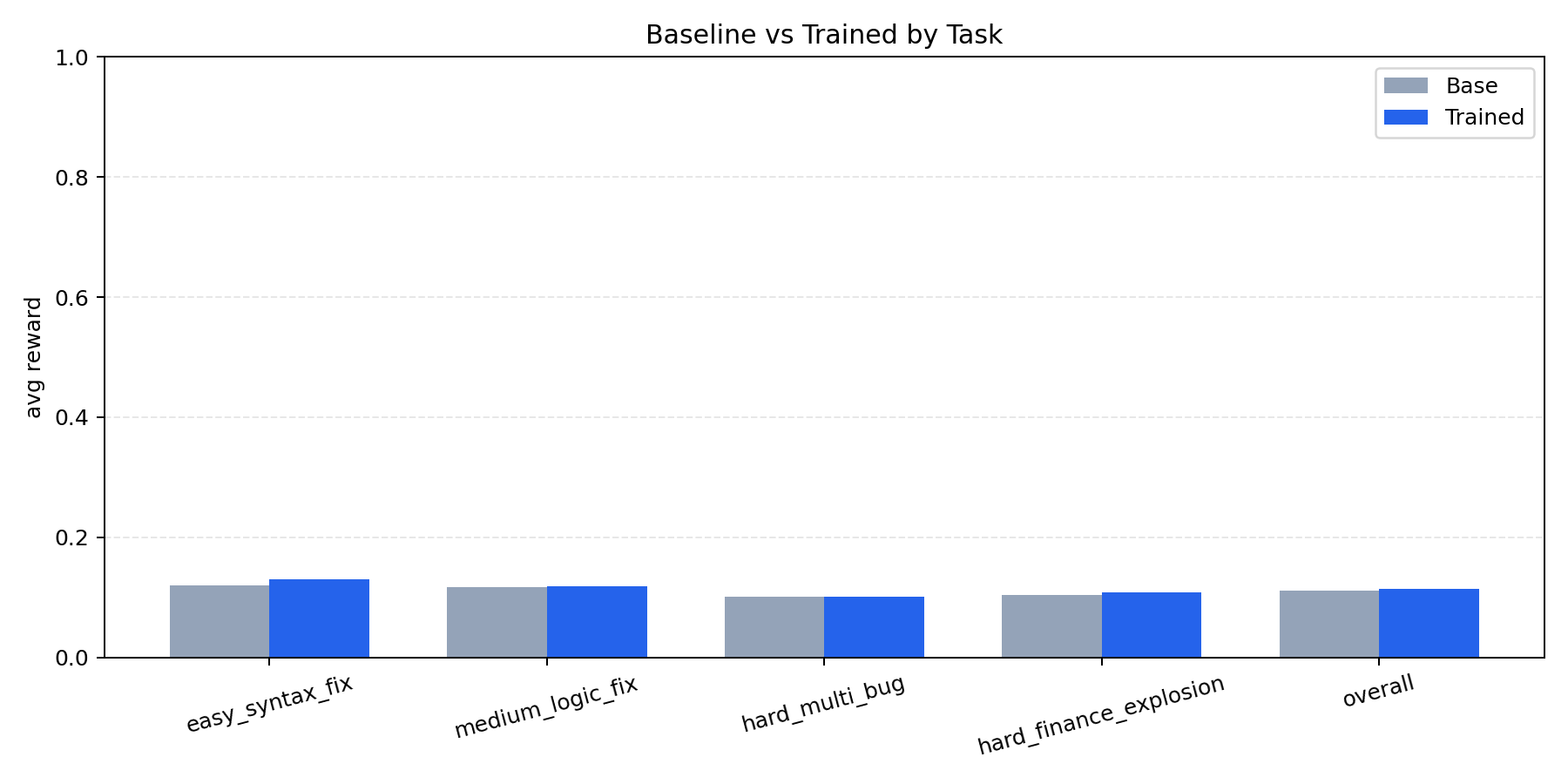

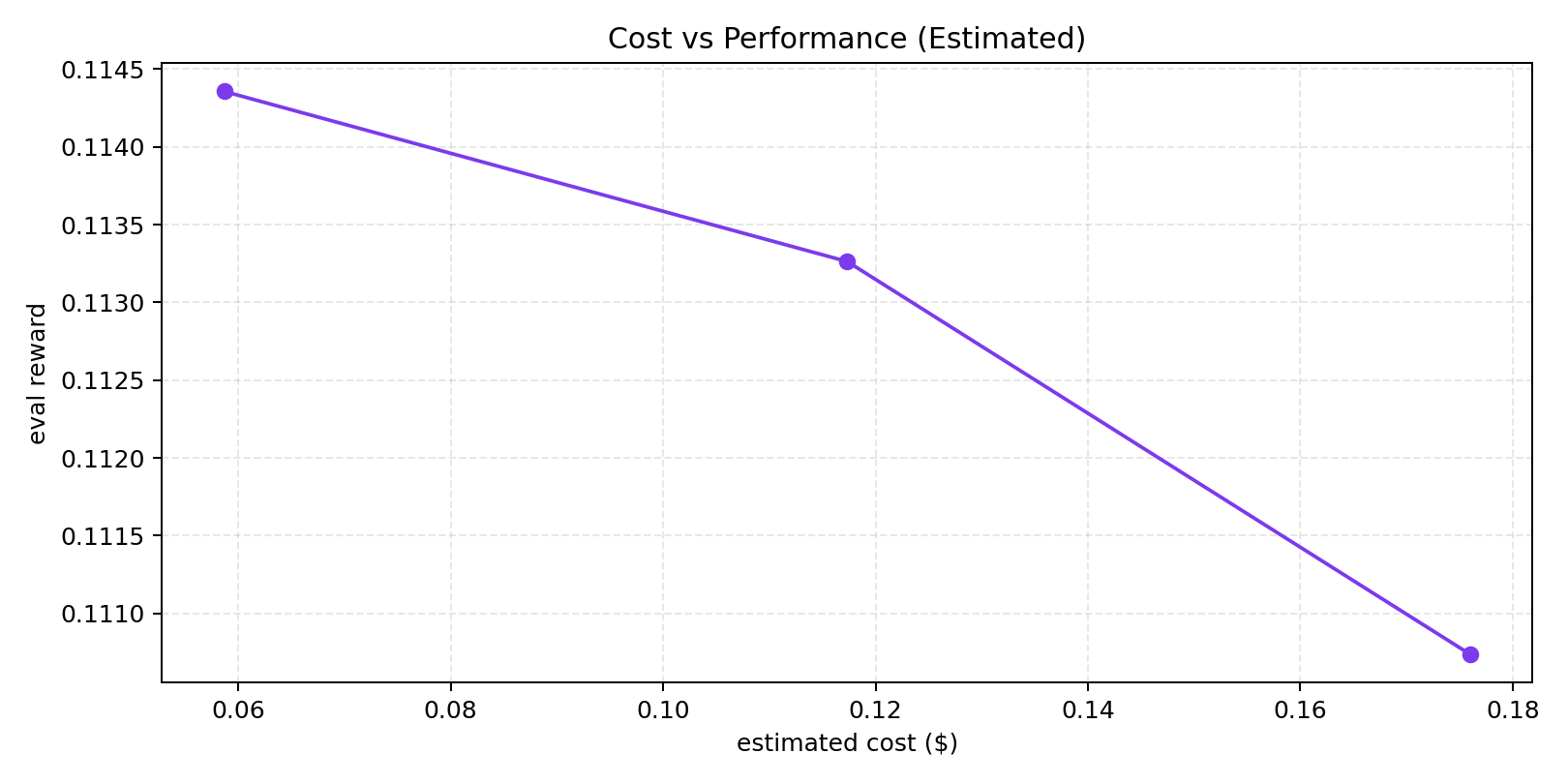

### Benchmark Visuals

|

||||

|

||||

|

||||

|

||||

|

||||

|

||||

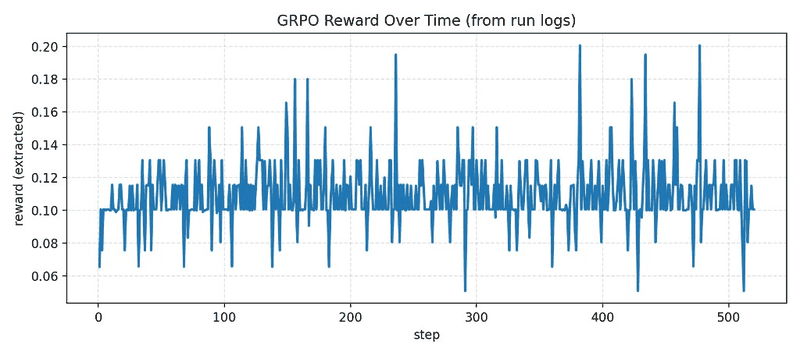

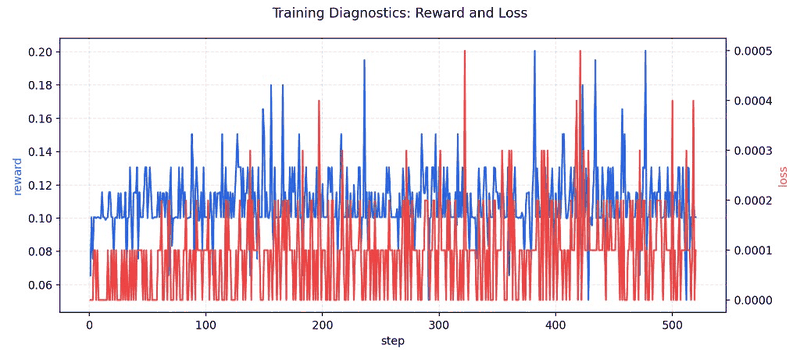

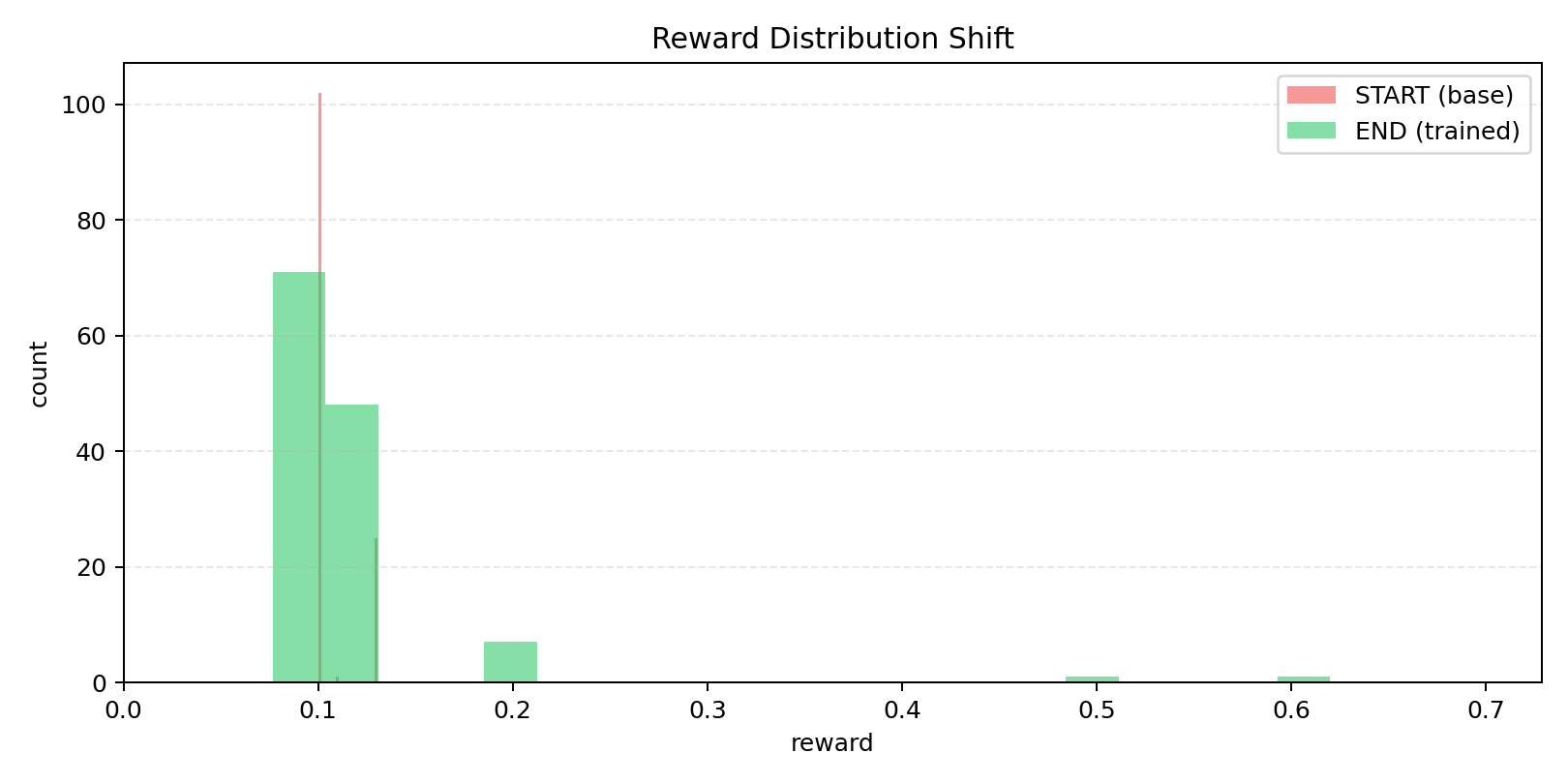

### Training / Proof Visuals

|

||||

|

||||

|

||||

|

||||

|

||||

|

||||

|

||||

|

||||

### Evidence Artifacts

|

||||

|

||||

- Sample rewards run folder: https://huggingface.co/spaces/md896/sql-debug-env/tree/main/artifacts/runs/20260426-064318-sample-rewards-32eval

|

||||

- Earlier 32-eval pass folder: https://huggingface.co/spaces/md896/sql-debug-env/tree/main/artifacts/runs/20260426-060502-final-pass-32eval

|

||||

|

||||

## Bias, Risks, and Limitations

|

||||

|

||||

- SQL correctness can still degrade under unseen schemas/dialects.

|

||||

- Benchmark-style gains do not guarantee equivalent production reliability.

|

||||

- Model outputs should be reviewed before executing in sensitive environments.

|

||||

|

||||

## Recommendations

|

||||

|

||||

- Keep SQL execution sandboxed during evaluation.

|

||||

- Use schema introspection + error inspection loops.

|

||||

- Add reviewer/guardrail checks for risky query classes.

|

||||

- Track run artifacts and compare against deterministic graders, not only manual inspection.

|

||||

|

||||

## How to Get Started

|

||||

|

||||

```python

|

||||

from transformers import AutoTokenizer, AutoModelForCausalLM

|

||||

|

||||

model_id = "md896/sql-debug-agent-qwen25-05b-grpo-wandb-continue-v2"

|

||||

tokenizer = AutoTokenizer.from_pretrained(model_id)

|

||||

model = AutoModelForCausalLM.from_pretrained(model_id)

|

||||

|

||||

prompt = "Fix this SQL query based on schema and error context: SELECT * FROM userss;"

|

||||

inputs = tokenizer(prompt, return_tensors="pt")

|

||||

outputs = model.generate(**inputs, max_new_tokens=128)

|

||||

print(tokenizer.decode(outputs[0], skip_special_tokens=True))

|

||||

```

|

||||

|

||||

## Environmental Impact

|

||||

|

||||

This model was trained/evaluated across iterative cloud/local workflows. Exact carbon accounting is not yet logged in this card.

|

||||

|

||||

## Citation

|

||||

|

||||

If you use this work, cite the project repository and model page:

|

||||

|

||||

- Repo: https://github.com/mdayan8/sql-debug-env

|

||||

- Model: https://huggingface.co/md896/sql-debug-agent-qwen25-05b-grpo-wandb-continue-v2

|

||||

|

||||

## Contact

|

||||

|

||||

- GitHub: https://github.com/mdayan8

|

||||

24

added_tokens.json

Normal file

24

added_tokens.json

Normal file

@@ -0,0 +1,24 @@

|

||||

{

|

||||

"</tool_call>": 151658,

|

||||

"<tool_call>": 151657,

|

||||

"<|box_end|>": 151649,

|

||||

"<|box_start|>": 151648,

|

||||

"<|endoftext|>": 151643,

|

||||

"<|file_sep|>": 151664,

|

||||

"<|fim_middle|>": 151660,

|

||||

"<|fim_pad|>": 151662,

|

||||

"<|fim_prefix|>": 151659,

|

||||

"<|fim_suffix|>": 151661,

|

||||

"<|im_end|>": 151645,

|

||||

"<|im_start|>": 151644,

|

||||

"<|image_pad|>": 151655,

|

||||

"<|object_ref_end|>": 151647,

|

||||

"<|object_ref_start|>": 151646,

|

||||

"<|quad_end|>": 151651,

|

||||

"<|quad_start|>": 151650,

|

||||

"<|repo_name|>": 151663,

|

||||

"<|video_pad|>": 151656,

|

||||

"<|vision_end|>": 151653,

|

||||

"<|vision_pad|>": 151654,

|

||||

"<|vision_start|>": 151652

|

||||

}

|

||||

28

config.json

Normal file

28

config.json

Normal file

@@ -0,0 +1,28 @@

|

||||

{

|

||||

"architectures": [

|

||||

"Qwen2ForCausalLM"

|

||||

],

|

||||

"attention_dropout": 0.0,

|

||||

"bos_token_id": 151643,

|

||||

"eos_token_id": 151645,

|

||||

"hidden_act": "silu",

|

||||

"hidden_size": 896,

|

||||

"initializer_range": 0.02,

|

||||

"intermediate_size": 4864,

|

||||

"max_position_embeddings": 32768,

|

||||

"max_window_layers": 21,

|

||||

"model_type": "qwen2",

|

||||

"num_attention_heads": 14,

|

||||

"num_hidden_layers": 24,

|

||||

"num_key_value_heads": 2,

|

||||

"rms_norm_eps": 1e-06,

|

||||

"rope_scaling": null,

|

||||

"rope_theta": 1000000.0,

|

||||

"sliding_window": 32768,

|

||||

"tie_word_embeddings": true,

|

||||

"torch_dtype": "bfloat16",

|

||||

"transformers_version": "4.51.3",

|

||||

"use_cache": false,

|

||||

"use_sliding_window": false,

|

||||

"vocab_size": 151936

|

||||

}

|

||||

16

generation_config.json

Normal file

16

generation_config.json

Normal file

@@ -0,0 +1,16 @@

|

||||

{

|

||||

"bos_token_id": 151643,

|

||||

"do_sample": true,

|

||||

"eos_token_id": [

|

||||

151645,

|

||||

151643

|

||||

],

|

||||

"pad_token_id": 151643,

|

||||

"remove_invalid_values": true,

|

||||

"renormalize_logits": true,

|

||||

"repetition_penalty": 1.1,

|

||||

"temperature": 0.7,

|

||||

"top_k": 20,

|

||||

"top_p": 0.9,

|

||||

"transformers_version": "4.51.3"

|

||||

}

|

||||

151388

merges.txt

Normal file

151388

merges.txt

Normal file

File diff suppressed because it is too large

Load Diff

3

model.safetensors

Normal file

3

model.safetensors

Normal file

@@ -0,0 +1,3 @@

|

||||

version https://git-lfs.github.com/spec/v1

|

||||

oid sha256:2d3e1956116b830ba83e7f49c21b672f072bbfbaa9e14dca9c21920148a137a0

|

||||

size 988097824

|

||||

25

special_tokens_map.json

Normal file

25

special_tokens_map.json

Normal file

@@ -0,0 +1,25 @@

|

||||

{

|

||||

"additional_special_tokens": [

|

||||

"<|im_start|>",

|

||||

"<|im_end|>",

|

||||

"<|object_ref_start|>",

|

||||

"<|object_ref_end|>",

|

||||

"<|box_start|>",

|

||||

"<|box_end|>",

|

||||

"<|quad_start|>",

|

||||

"<|quad_end|>",

|

||||

"<|vision_start|>",

|

||||

"<|vision_end|>",

|

||||

"<|vision_pad|>",

|

||||

"<|image_pad|>",

|

||||

"<|video_pad|>"

|

||||

],

|

||||

"eos_token": {

|

||||

"content": "<|im_end|>",

|

||||

"lstrip": false,

|

||||

"normalized": false,

|

||||

"rstrip": false,

|

||||

"single_word": false

|

||||

},

|

||||

"pad_token": "<|im_end|>"

|

||||

}

|

||||

3

tokenizer.json

Normal file

3

tokenizer.json

Normal file

@@ -0,0 +1,3 @@

|

||||

version https://git-lfs.github.com/spec/v1

|

||||

oid sha256:9c5ae00e602b8860cbd784ba82a8aa14e8feecec692e7076590d014d7b7fdafa

|

||||

size 11421896

|

||||

208

tokenizer_config.json

Normal file

208

tokenizer_config.json

Normal file

@@ -0,0 +1,208 @@

|

||||

{

|

||||

"add_bos_token": false,

|

||||

"add_prefix_space": false,

|

||||

"added_tokens_decoder": {

|

||||

"151643": {

|

||||

"content": "<|endoftext|>",

|

||||

"lstrip": false,

|

||||

"normalized": false,

|

||||

"rstrip": false,

|

||||

"single_word": false,

|

||||

"special": true

|

||||

},

|

||||

"151644": {

|

||||

"content": "<|im_start|>",

|

||||

"lstrip": false,

|

||||

"normalized": false,

|

||||

"rstrip": false,

|

||||

"single_word": false,

|

||||

"special": true

|

||||

},

|

||||

"151645": {

|

||||

"content": "<|im_end|>",

|

||||

"lstrip": false,

|

||||

"normalized": false,

|

||||

"rstrip": false,

|

||||

"single_word": false,

|

||||

"special": true

|

||||

},

|

||||

"151646": {

|

||||

"content": "<|object_ref_start|>",

|

||||

"lstrip": false,

|

||||

"normalized": false,

|

||||

"rstrip": false,

|

||||

"single_word": false,

|

||||

"special": true

|

||||

},

|

||||

"151647": {

|

||||

"content": "<|object_ref_end|>",

|

||||

"lstrip": false,

|

||||

"normalized": false,

|

||||

"rstrip": false,

|

||||

"single_word": false,

|

||||

"special": true

|

||||

},

|

||||

"151648": {

|

||||

"content": "<|box_start|>",

|

||||

"lstrip": false,

|

||||

"normalized": false,

|

||||

"rstrip": false,

|

||||

"single_word": false,

|

||||

"special": true

|

||||

},

|

||||

"151649": {

|

||||

"content": "<|box_end|>",

|

||||

"lstrip": false,

|

||||

"normalized": false,

|

||||

"rstrip": false,

|

||||

"single_word": false,

|

||||

"special": true

|

||||

},

|

||||

"151650": {

|

||||

"content": "<|quad_start|>",

|

||||

"lstrip": false,

|

||||

"normalized": false,

|

||||

"rstrip": false,

|

||||

"single_word": false,

|

||||

"special": true

|

||||

},

|

||||

"151651": {

|

||||

"content": "<|quad_end|>",

|

||||

"lstrip": false,

|

||||

"normalized": false,

|

||||

"rstrip": false,

|

||||

"single_word": false,

|

||||

"special": true

|

||||

},

|

||||

"151652": {

|

||||

"content": "<|vision_start|>",

|

||||

"lstrip": false,

|

||||

"normalized": false,

|

||||

"rstrip": false,

|

||||

"single_word": false,

|

||||

"special": true

|

||||

},

|

||||

"151653": {

|

||||

"content": "<|vision_end|>",

|

||||

"lstrip": false,

|

||||

"normalized": false,

|

||||

"rstrip": false,

|

||||

"single_word": false,

|

||||

"special": true

|

||||

},

|

||||

"151654": {

|

||||

"content": "<|vision_pad|>",

|

||||

"lstrip": false,

|

||||

"normalized": false,

|

||||

"rstrip": false,

|

||||

"single_word": false,

|

||||

"special": true

|

||||

},

|

||||

"151655": {

|

||||

"content": "<|image_pad|>",

|

||||

"lstrip": false,

|

||||

"normalized": false,

|

||||

"rstrip": false,

|

||||

"single_word": false,

|

||||

"special": true

|

||||

},

|

||||

"151656": {

|

||||

"content": "<|video_pad|>",

|

||||

"lstrip": false,

|

||||

"normalized": false,

|

||||

"rstrip": false,

|

||||

"single_word": false,

|

||||

"special": true

|

||||

},

|

||||

"151657": {

|

||||

"content": "<tool_call>",

|

||||

"lstrip": false,

|

||||

"normalized": false,

|

||||

"rstrip": false,

|

||||

"single_word": false,

|

||||

"special": false

|

||||

},

|

||||

"151658": {

|

||||

"content": "</tool_call>",

|

||||

"lstrip": false,

|

||||

"normalized": false,

|

||||

"rstrip": false,

|

||||

"single_word": false,

|

||||

"special": false

|

||||

},

|

||||

"151659": {

|

||||

"content": "<|fim_prefix|>",

|

||||

"lstrip": false,

|

||||

"normalized": false,

|

||||

"rstrip": false,

|

||||

"single_word": false,

|

||||

"special": false

|

||||

},

|

||||

"151660": {

|

||||

"content": "<|fim_middle|>",

|

||||

"lstrip": false,

|

||||

"normalized": false,

|

||||

"rstrip": false,

|

||||

"single_word": false,

|

||||

"special": false

|

||||

},

|

||||

"151661": {

|

||||

"content": "<|fim_suffix|>",

|

||||

"lstrip": false,

|

||||

"normalized": false,

|

||||

"rstrip": false,

|

||||

"single_word": false,

|

||||

"special": false

|

||||

},

|

||||

"151662": {

|

||||

"content": "<|fim_pad|>",

|

||||

"lstrip": false,

|

||||

"normalized": false,

|

||||

"rstrip": false,

|

||||

"single_word": false,

|

||||

"special": false

|

||||

},

|

||||

"151663": {

|

||||

"content": "<|repo_name|>",

|

||||

"lstrip": false,

|

||||

"normalized": false,

|

||||

"rstrip": false,

|

||||

"single_word": false,

|

||||

"special": false

|

||||

},

|

||||

"151664": {

|

||||

"content": "<|file_sep|>",

|

||||

"lstrip": false,

|

||||

"normalized": false,

|

||||

"rstrip": false,

|

||||

"single_word": false,

|

||||

"special": false

|

||||

}

|

||||

},

|

||||

"additional_special_tokens": [

|

||||

"<|im_start|>",

|

||||

"<|im_end|>",

|

||||

"<|object_ref_start|>",

|

||||

"<|object_ref_end|>",

|

||||

"<|box_start|>",

|

||||

"<|box_end|>",

|

||||

"<|quad_start|>",

|

||||

"<|quad_end|>",

|

||||

"<|vision_start|>",

|

||||

"<|vision_end|>",

|

||||

"<|vision_pad|>",

|

||||

"<|image_pad|>",

|

||||

"<|video_pad|>"

|

||||

],

|

||||

"bos_token": null,

|

||||

"chat_template": "{%- if tools %}\n {{- '<|im_start|>system\\n' }}\n {%- if messages[0]['role'] == 'system' %}\n {{- messages[0]['content'] }}\n {%- else %}\n {{- 'You are Qwen, created by Alibaba Cloud. You are a helpful assistant.' }}\n {%- endif %}\n {{- \"\\n\\n# Tools\\n\\nYou may call one or more functions to assist with the user query.\\n\\nYou are provided with function signatures within <tools></tools> XML tags:\\n<tools>\" }}\n {%- for tool in tools %}\n {{- \"\\n\" }}\n {{- tool | tojson }}\n {%- endfor %}\n {{- \"\\n</tools>\\n\\nFor each function call, return a json object with function name and arguments within <tool_call></tool_call> XML tags:\\n<tool_call>\\n{\\\"name\\\": <function-name>, \\\"arguments\\\": <args-json-object>}\\n</tool_call><|im_end|>\\n\" }}\n{%- else %}\n {%- if messages[0]['role'] == 'system' %}\n {{- '<|im_start|>system\\n' + messages[0]['content'] + '<|im_end|>\\n' }}\n {%- else %}\n {{- '<|im_start|>system\\nYou are Qwen, created by Alibaba Cloud. You are a helpful assistant.<|im_end|>\\n' }}\n {%- endif %}\n{%- endif %}\n{%- for message in messages %}\n {%- if (message.role == \"user\") or (message.role == \"system\" and not loop.first) or (message.role == \"assistant\" and not message.tool_calls) %}\n {{- '<|im_start|>' + message.role + '\\n' + message.content + '<|im_end|>' + '\\n' }}\n {%- elif message.role == \"assistant\" %}\n {{- '<|im_start|>' + message.role }}\n {%- if message.content %}\n {{- '\\n' + message.content }}\n {%- endif %}\n {%- for tool_call in message.tool_calls %}\n {%- if tool_call.function is defined %}\n {%- set tool_call = tool_call.function %}\n {%- endif %}\n {{- '\\n<tool_call>\\n{\"name\": \"' }}\n {{- tool_call.name }}\n {{- '\", \"arguments\": ' }}\n {{- tool_call.arguments | tojson }}\n {{- '}\\n</tool_call>' }}\n {%- endfor %}\n {{- '<|im_end|>\\n' }}\n {%- elif message.role == \"tool\" %}\n {%- if (loop.index0 == 0) or (messages[loop.index0 - 1].role != \"tool\") %}\n {{- '<|im_start|>user' }}\n {%- endif %}\n {{- '\\n<tool_response>\\n' }}\n {{- message.content }}\n {{- '\\n</tool_response>' }}\n {%- if loop.last or (messages[loop.index0 + 1].role != \"tool\") %}\n {{- '<|im_end|>\\n' }}\n {%- endif %}\n {%- endif %}\n{%- endfor %}\n{%- if add_generation_prompt %}\n {{- '<|im_start|>assistant\\n' }}\n{%- endif %}\n",

|

||||

"clean_up_tokenization_spaces": false,

|

||||

"eos_token": "<|im_end|>",

|

||||

"errors": "replace",

|

||||

"extra_special_tokens": {},

|

||||

"model_max_length": 131072,

|

||||

"pad_token": "<|im_end|>",

|

||||

"split_special_tokens": false,

|

||||

"tokenizer_class": "Qwen2Tokenizer",

|

||||

"unk_token": null

|

||||

}

|

||||

1

vocab.json

Normal file

1

vocab.json

Normal file

File diff suppressed because one or more lines are too long

Reference in New Issue

Block a user