| pipeline_tag | library_name | tags |
|---|---|---|
| text-generation | transformers | |

combined_no_america_with_metadata_1b_step8k

Summary

This repo contains the 1B leave-one-out model with America held out (no_america), trained with metadata and exported from the 8k checkpoint for the metadata localization project. It was trained from scratch on the project corpus, using the Llama 3.2 tokenizer and vocabulary.

Variant Metadata

- Stage: pretrain
- Family: leave_one_out
- Size: 1b
- Metadata condition: with_metadata
- Checkpoint export: 8k
- Base model lineage: Trained from scratch; tokenizer/vocabulary from meta-llama/Llama-3.2-1B
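Since the card lists transformers as the library and text-generation as the pipeline, loading the checkpoint might look like the sketch below. The namespace in `REPO_ID` is a placeholder (this card does not state the full Hub repo id), and the prompt is arbitrary: the metadata format expected by the with_metadata condition is not documented here, so consult the project repository before relying on any particular prompt layout.

```python
# Minimal sketch, not the authors' reference usage.
# ASSUMPTION: "<namespace>" is a placeholder; replace it with the actual Hub namespace.
REPO_ID = "<namespace>/combined_no_america_with_metadata_1b_step8k"


def generate_text(prompt: str, repo_id: str = REPO_ID, max_new_tokens: int = 50) -> str:
    """Load the checkpoint and greedily continue `prompt`."""
    # Imported lazily so the module can be imported without transformers installed.
    from transformers import AutoModelForCausalLM, AutoTokenizer

    tokenizer = AutoTokenizer.from_pretrained(repo_id)
    model = AutoModelForCausalLM.from_pretrained(repo_id)

    inputs = tokenizer(prompt, return_tensors="pt")
    output_ids = model.generate(**inputs, max_new_tokens=max_new_tokens, do_sample=False)
    return tokenizer.decode(output_ids[0], skip_special_tokens=True)


if __name__ == "__main__":
    # Arbitrary example prompt; the metadata prefix format is not specified in this card.
    print(generate_text("The city council announced"))
```

This is a pretrained (not instruction-tuned) model, so it continues text rather than following instructions.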

Weights & Biases Provenance

- Run name: 20/12/2025_09:26:32_combined_no_america_with_metadata_1b
- Internal run URL: https://wandb.ai/iamshnoo/nanotron/runs/kane1qyw
- Note: the Weights & Biases workspace is private; public readers should use the summarized metrics and configuration below.
- State: finished
- Runtime: 114h 52m 10s

Run Summary

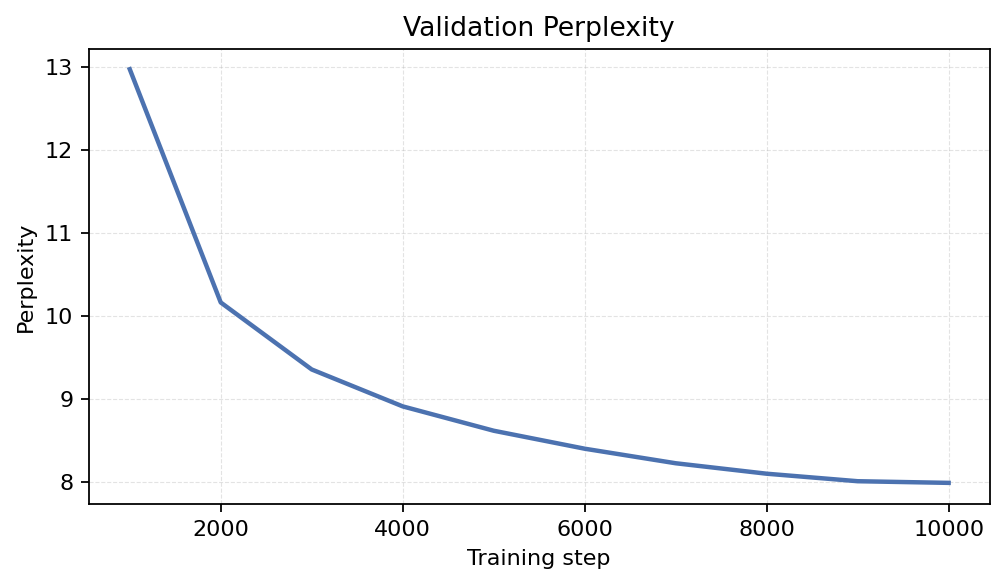

- KPI/train_lm_loss: 1.9947
- KPI/train_perplexity: 7.3496
- KPI/val_loss: 2.0776
- KPI/val_perplexity: 7.9855
- KPI/consumed_tokens/train: 41,943,040,000
- _step: 10,000
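The reported perplexities appear consistent with the usual relationship perplexity = exp(loss). The check below is a small sketch that assumes the losses are mean per-token cross-entropy in nats; the card itself does not state the units. Small differences are expected because the losses are rounded to four decimals.

```python
import math

# Values copied from the run summary in this card.
reported = {
    "train": {"loss": 1.9947, "perplexity": 7.3496},
    "val": {"loss": 2.0776, "perplexity": 7.9855},
}

# ASSUMPTION: loss is mean per-token cross-entropy in nats, so perplexity = exp(loss).
recomputed = {split: math.exp(vals["loss"]) for split, vals in reported.items()}

for split, vals in reported.items():
    diff = abs(recomputed[split] - vals["perplexity"])
    print(f"{split}: exp(loss)={recomputed[split]:.4f}, "
          f"reported={vals['perplexity']:.4f}, abs diff={diff:.4f}")
```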

Training Configuration

- train_steps: 10,000
- sequence_length: 2,048
- micro_batch_size: 8
- batch_accumulation_per_replica: 64
- learning_rate: 0.003
- min_decay_lr: 0.0003
- checkpoint_interval: 1,000
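The configuration values can be cross-checked against the consumed-token count in the run summary. The arithmetic below is a sketch: the number of data-parallel replicas is not stated in this card and is only inferred from the ratio, and the token estimate for the exported checkpoint assumes that "8k" means step 8,000 and that the batch size was constant throughout training.

```python
# Values copied from the training configuration and run summary in this card.
sequence_length = 2_048
micro_batch_size = 8
batch_accumulation_per_replica = 64
train_steps = 10_000
consumed_tokens = 41_943_040_000

# Tokens processed by one replica in one optimizer step.
tokens_per_replica_step = sequence_length * micro_batch_size * batch_accumulation_per_replica

# Tokens per optimizer step across all replicas, derived from the run totals.
tokens_per_step = consumed_tokens // train_steps

# INFERRED, not stated in the card: implied number of data-parallel replicas.
implied_replicas = tokens_per_step / tokens_per_replica_step

# ASSUMPTION: "8k" is step 8,000 and the batch size never changed.
approx_tokens_at_8k = 8_000 * tokens_per_step

print(f"tokens per replica step: {tokens_per_replica_step:,}")
print(f"tokens per step:         {tokens_per_step:,}")
print(f"implied replicas:        {implied_replicas:g}")
print(f"approx tokens at 8k:     {approx_tokens_at_8k:,}")
```

Under these assumptions, the exported checkpoint would have seen roughly 80% of the tokens reported for the full 10,000-step run, so the summary metrics above describe the end of the run rather than this checkpoint specifically.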

Training Curves

Static plots below were exported from the private Weights & Biases run and embedded here for public access.

Train Loss

Validation Perplexity

Throughput

Project Context

This model is part of the metadata localization release. Related checkpoints and variants are grouped in the public Hugging Face collection Metadata Conditioned LLMs.

- Training data source: News on the Web (NOW) Corpus

- Project repository: https://github.com/iamshnoo/metadata_localization

- Paper: https://arxiv.org/abs/2601.15236

Last synced: 2026-04-02 14:45:40 UTC