Initialize project; model provided by the ModelHub XC community

Model: MediaTek-Research/Breeze-7B-32k-Instruct-v1_0 Source: Original Platform


43

.gitattributes

vendored

Normal file


@@ -0,0 +1,43 @@

*.7z filter=lfs diff=lfs merge=lfs -text
*.arrow filter=lfs diff=lfs merge=lfs -text
*.bin filter=lfs diff=lfs merge=lfs -text
*.bz2 filter=lfs diff=lfs merge=lfs -text
*.ckpt filter=lfs diff=lfs merge=lfs -text
*.ftz filter=lfs diff=lfs merge=lfs -text
*.gz filter=lfs diff=lfs merge=lfs -text
*.h5 filter=lfs diff=lfs merge=lfs -text
*.joblib filter=lfs diff=lfs merge=lfs -text
*.lfs.* filter=lfs diff=lfs merge=lfs -text
*.mlmodel filter=lfs diff=lfs merge=lfs -text
*.model filter=lfs diff=lfs merge=lfs -text
*.msgpack filter=lfs diff=lfs merge=lfs -text
*.npy filter=lfs diff=lfs merge=lfs -text
*.npz filter=lfs diff=lfs merge=lfs -text
*.onnx filter=lfs diff=lfs merge=lfs -text
*.ot filter=lfs diff=lfs merge=lfs -text
*.parquet filter=lfs diff=lfs merge=lfs -text
*.pb filter=lfs diff=lfs merge=lfs -text
*.pickle filter=lfs diff=lfs merge=lfs -text
*.pkl filter=lfs diff=lfs merge=lfs -text
*.pt filter=lfs diff=lfs merge=lfs -text
*.pth filter=lfs diff=lfs merge=lfs -text
*.rar filter=lfs diff=lfs merge=lfs -text
*.safetensors filter=lfs diff=lfs merge=lfs -text
saved_model/**/* filter=lfs diff=lfs merge=lfs -text
*.tar.* filter=lfs diff=lfs merge=lfs -text
*.tar filter=lfs diff=lfs merge=lfs -text
*.tflite filter=lfs diff=lfs merge=lfs -text
*.tgz filter=lfs diff=lfs merge=lfs -text
*.wasm filter=lfs diff=lfs merge=lfs -text
*.xz filter=lfs diff=lfs merge=lfs -text
*.zip filter=lfs diff=lfs merge=lfs -text
*.zst filter=lfs diff=lfs merge=lfs -text
*tfevents* filter=lfs diff=lfs merge=lfs -text
model-00001-of-00004.safetensors filter=lfs diff=lfs merge=lfs -text
model-00002-of-00004.safetensors filter=lfs diff=lfs merge=lfs -text
model-00003-of-00004.safetensors filter=lfs diff=lfs merge=lfs -text
model-00004-of-00004.safetensors filter=lfs diff=lfs merge=lfs -text
pytorch_model-00001-of-00004.bin filter=lfs diff=lfs merge=lfs -text
pytorch_model-00002-of-00004.bin filter=lfs diff=lfs merge=lfs -text
pytorch_model-00003-of-00004.bin filter=lfs diff=lfs merge=lfs -text
pytorch_model-00004-of-00004.bin filter=lfs diff=lfs merge=lfs -text
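
The patterns in `.gitattributes` decide which files are stored through Git LFS. As a rough illustration only, the sketch below approximates that matching with Python's `fnmatch` on a handful of the patterns listed above. This is an assumption-laden stand-in: Git uses its own pattern rules, and directory patterns such as `saved_model/**/*` are not handled here. The helper name `is_lfs_tracked` is hypothetical.

```python
from fnmatch import fnmatch

# A subset of the patterns from the .gitattributes shown above.
LFS_PATTERNS = ["*.bin", "*.safetensors", "*.model", "*.tar.*", "*tfevents*"]


def is_lfs_tracked(filename, patterns=LFS_PATTERNS):
    """Approximate check: does the base filename match any LFS pattern?

    Uses fnmatch, which only approximates Git's own matching rules.
    """
    return any(fnmatch(filename, pattern) for pattern in patterns)


for name in ["model-00001-of-00004.safetensors", "config.json", "README.md"]:
    print(name, is_lfs_tracked(name))
```

Under this approximation the weight shards match `*.safetensors`, while small text files such as `config.json` and `README.md` match nothing and stay as regular Git blobs.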

127

README.md

Normal file


@@ -0,0 +1,127 @@

---
pipeline_tag: text-generation
license: apache-2.0
language:
- zh
- en
---

# Model Card for MediaTek Research Breeze-7B-32k-Instruct-v1_0

MediaTek Research Breeze-7B (hereinafter referred to as Breeze-7B) is a language model family that builds on top of [Mistral-7B](https://huggingface.co/mistralai/Mistral-7B-v0.1), specifically intended for Traditional Chinese use.

[Breeze-7B-Base](https://huggingface.co/MediaTek-Research/Breeze-7B-Base-v1_0) is the base model for the Breeze-7B series.
It is suitable for use if you have substantial fine-tuning data to tune it for your specific use case.

[Breeze-7B-Instruct](https://huggingface.co/MediaTek-Research/Breeze-7B-Instruct-v1_0) derives from the base model Breeze-7B-Base, making the resulting model usable as-is for commonly seen tasks.

[Breeze-7B-32k-Base](https://huggingface.co/MediaTek-Research/Breeze-7B-32k-Base-v1_0) is extended from the base model with more data, a RoPE base change, and the disabling of the sliding window.
Roughly speaking, its 32k-token context is equivalent to 44k Traditional Chinese characters.

[Breeze-7B-32k-Instruct](https://huggingface.co/MediaTek-Research/Breeze-7B-32k-Instruct-v1_0) derives from the base model Breeze-7B-32k-Base, making the resulting model usable as-is for commonly seen tasks.

Practicality-wise:
- Breeze-7B-Base expands the original vocabulary with an additional 30,000 Traditional Chinese tokens. With the expanded vocabulary, everything else being equal, Breeze-7B runs inference on Traditional Chinese text at twice the speed of Mistral-7B and Llama 7B. [See [Inference Performance](#inference-performance).]
- Breeze-7B-Instruct can be used as is for common tasks such as Q&A, RAG, multi-round chat, and summarization.
- Breeze-7B-32k-Instruct can perform tasks at a document level (for Chinese, 20 to 40 pages).

*A project by the members (in alphabetical order): Chan-Jan Hsu 許湛然, Feng-Ting Liao 廖峰挺, Po-Chun Hsu 許博竣, Yi-Chang Chen 陳宜昌, and the supervisor Da-Shan Shiu 許大山.*

## Features

- Breeze-7B-32k-Base-v1_0
  - Expanding the vocabulary dictionary size from 32k to 62k to better support Traditional Chinese
  - 32k-token context length

- Breeze-7B-32k-Instruct-v1_0
  - Expanding the vocabulary dictionary size from 32k to 62k to better support Traditional Chinese
  - 32k-token context length
  - Multi-turn dialogue (without special handling for harmfulness)

## Model Details

- Breeze-7B-32k-Base-v1_0
  - Pretrained from: [Breeze-7B-Base](https://huggingface.co/MediaTek-Research/Breeze-7B-Base-v1_0)
  - Model type: Causal decoder-only transformer language model
  - Language: English and Traditional Chinese (zh-tw)
- Breeze-7B-32k-Instruct-v1_0
  - Finetuned from: [Breeze-7B-32k-Base](https://huggingface.co/MediaTek-Research/Breeze-7B-32k-Base-v1_0)
  - Model type: Causal decoder-only transformer language model
  - Language: English and Traditional Chinese (zh-tw)

## Long-context Performance

|

||||

|

||||

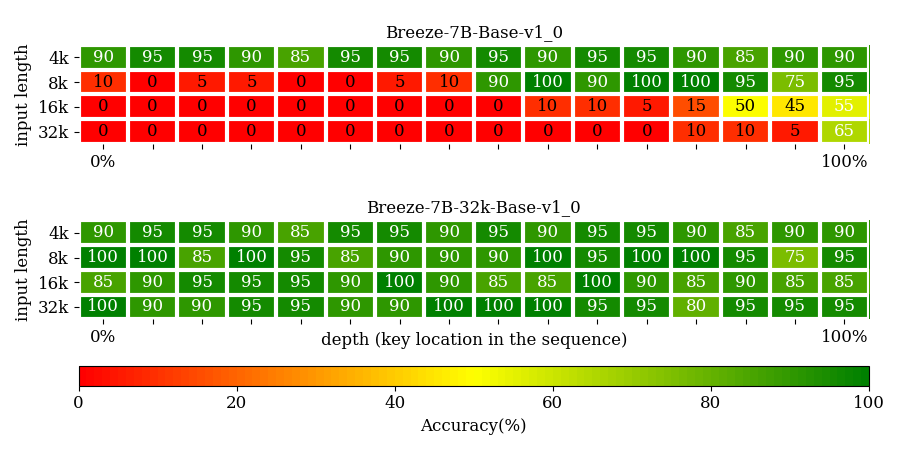

#### Needle-in-a-haystack Performance

|

||||

|

||||

We use the passkey retrieval task to test the model's ability to attend to different various depths in a given sequence.

|

||||

A key in placed within a long context distracting document for the model to retrieve.

|

||||

The key position is binned into 16 bins, and there are 20 testcases for each bin.

|

||||

Breeze-7B-32k-Base clears the tasks with 90+% accuracy, shown in the figure below.
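
The passkey setup described above (16 position bins, 20 test cases per bin) can be sketched as follows. This is only an illustration of how such a test set might be built: the filler sentence, the key format, the question wording, and the function names are all assumptions, not the authors' actual evaluation code.

```python
import random


def make_passkey_case(n_filler, depth, rng):
    """Build one prompt with a pass key inserted at a relative depth in [0, 1)."""
    filler = "The grass is green. The sky is blue. "  # hypothetical distractor text
    key = rng.randint(10000, 99999)  # hypothetical 5-digit key
    needle = f"The pass key is {key}. Remember it. "
    sentences = [filler] * n_filler
    sentences.insert(int(depth * n_filler), needle)
    prompt = "".join(sentences) + "What is the pass key?"
    return prompt, key


def make_test_set(n_bins=16, cases_per_bin=20, n_filler=200, seed=0):
    """Create cases_per_bin prompts for each of n_bins depth bins."""
    rng = random.Random(seed)
    cases = []
    for bin_index in range(n_bins):
        for _ in range(cases_per_bin):
            # Sample a depth uniformly inside the current bin.
            depth = (bin_index + rng.random()) / n_bins
            prompt, key = make_passkey_case(n_filler, depth, rng)
            cases.append({"bin": bin_index, "prompt": prompt, "key": key})
    return cases


cases = make_test_set()
print(len(cases))  # 16 bins x 20 cases = 320
```

Accuracy per bin would then be the fraction of cases in that bin where the model's answer contains the key. In a real run, `n_filler` would be chosen so the prompt approaches the context length under test.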

#### Long-DRCD Performance

| **Model/Performance(EM)** | **DRCD** | **DRCD-16k** | **DRCD-32k** |
|---------------------------|----------|--------------|--------------|
| **Breeze-7B-32k-Instruct-v1\_0** | 76.9 | 54.82 | 44.26 |
| **Breeze-7B-32k-Base-v1\_0** | 79.73 | 69.68 | 61.55 |
| **Breeze-7B-Base-v1\_0** | 80.61 | 21.79 | 15.29 |
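
The scores in this table are exact-match (EM) percentages. The README does not spell out the scoring details, so the following is only a generic sketch of how an EM score is commonly computed; the whitespace-stripping normalization and the function names are assumptions.

```python
def exact_match(prediction, reference):
    """True if the prediction equals the reference after trimming whitespace."""
    return prediction.strip() == reference.strip()


def em_score(predictions, references):
    """Percentage of predictions matching any of their acceptable references.

    predictions: list of str
    references:  list of lists of str (one list of acceptable answers per item)
    """
    hits = sum(
        any(exact_match(pred, ref) for ref in refs)
        for pred, refs in zip(predictions, references)
    )
    return 100.0 * hits / len(predictions)


print(em_score(["台北", "高雄 "], [["台北"], ["台南"]]))  # 50.0
```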

#### Short-Benchmark Performance

| **Model/Performance(EM)** | **TMMLU+** | **MMLU** | **TABLE** | **MT-Bench-tw** | **MT-Bench** |
|---------------------------|----------|--------------|--------------|-----|-----|
| **Breeze-7B-32k-Instruct-v1\_0** | 41.37 | 61.34 | 34 | 5.8 | 7.4 |
| **Breeze-7B-Instruct-v1\_0** | 42.67 | 62.73 | 39.58 | 6.0 | 7.4 |

## Use in Transformers

First, install direct dependencies:
```
pip install transformers torch accelerate
```
<p style="color:red;">Flash-attention2 is strongly recommended for long context scenarios.</p>

```bash
pip install packaging ninja
pip install flash-attn
```
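
To get a feel for why a fused attention kernel matters at 32k tokens, here is a back-of-the-envelope sketch. It uses the architecture values from this repository's `config.json` (32 layers, 32 attention heads, 8 key/value heads, hidden size 4096, bfloat16) and estimates two quantities: the size of one layer's full attention score matrix if it were materialized naively, and the key/value cache for a single 32k-token sequence. The function names are illustrative, and real memory use depends on the attention backend, batch size, and framework overhead.

```python
GIB = 1024 ** 3


def naive_attention_scores_bytes(seq_len, num_heads=32, bytes_per_value=2):
    """Bytes to hold one layer's full (heads x seq_len x seq_len) score matrix."""
    return num_heads * seq_len * seq_len * bytes_per_value


def kv_cache_bytes(seq_len, num_layers=32, num_kv_heads=8, head_dim=128,
                   bytes_per_value=2):
    """Bytes for keys and values of one sequence across all layers.

    head_dim = hidden_size / num_attention_heads = 4096 / 32 = 128.
    The leading factor 2 accounts for one key and one value tensor per layer.
    """
    return 2 * num_layers * num_kv_heads * head_dim * seq_len * bytes_per_value


print(naive_attention_scores_bytes(32768) / GIB)  # 64.0
print(kv_cache_bytes(32768) / GIB)                # 4.0
```

FlashAttention-style kernels compute attention in tiles and avoid materializing the full score matrix, which is the 64 GiB term in this estimate.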

Then load the model in transformers:
```python
>>> import torch
>>> from transformers import AutoModelForCausalLM, AutoTokenizer
>>> tokenizer = AutoTokenizer.from_pretrained("MediaTek-Research/Breeze-7B-32k-Instruct-v1_0")
>>> model = AutoModelForCausalLM.from_pretrained(
...     "MediaTek-Research/Breeze-7B-32k-Instruct-v1_0",
...     device_map="auto",
...     torch_dtype=torch.bfloat16,
...     attn_implementation="flash_attention_2"
... )
>>> chat = [
...     {"role": "user", "content": "你好,請問你可以完成什麼任務?"},
...     {"role": "assistant", "content": "你好,我可以幫助您解決各種問題、提供資訊和協助您完成許多不同的任務。例如:回答技術問題、提供建議、翻譯文字、尋找資料或協助您安排行程等。請告訴我如何能幫助您。"},
...     {"role": "user", "content": "太棒了!"},
... ]
>>> tokenizer.apply_chat_template(chat, tokenize=False)
"<s>You are a helpful AI assistant built by MediaTek Research. The user you are helping speaks Traditional Chinese and comes from Taiwan. [INST] 你好,請問你可以完成什麼任務? [/INST] 你好,我可以幫助您解決各種問題、提供資訊和協助您完成許多不同的任務。例如:回答技術問題、提供建議、翻譯文字、尋找資料或協助您安排行程等。請告訴我如何能幫助您。 [INST] 太棒了! [/INST] "
# Tokenized results
# ['▁', '你好', ',', '請問', '你', '可以', '完成', '什麼', '任務', '?']
# ['▁', '你好', ',', '我', '可以', '幫助', '您', '解決', '各種', '問題', '、', '提供', '資訊', '和', '協助', '您', '完成', '許多', '不同', '的', '任務', '。', '例如', ':', '回答', '技術', '問題', '、', '提供', '建議', '、', '翻譯', '文字', '、', '尋找', '資料', '或', '協助', '您', '安排', '行程', '等', '。', '請', '告訴', '我', '如何', '能', '幫助', '您', '。']
# ['▁', '太', '棒', '了', '!']
```
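
The prompt layout visible in the output above can be reproduced in plain Python without loading the tokenizer. The sketch below mirrors only the string shown in this README: a system sentence after `<s>`, user turns wrapped in `[INST] ... [/INST]`, and assistant turns appended as-is. The function name `format_chat` is hypothetical, and the authoritative template is the one shipped with the tokenizer, which may differ in whitespace or in how it treats other roles.

```python
SYSTEM_PROMPT = (
    "You are a helpful AI assistant built by MediaTek Research. "
    "The user you are helping speaks Traditional Chinese and comes from Taiwan."
)


def format_chat(chat, system_prompt=SYSTEM_PROMPT):
    """Render a list of {"role", "content"} dicts into the prompt layout shown above."""
    parts = ["<s>" + system_prompt + " "]
    for turn in chat:
        if turn["role"] == "user":
            parts.append("[INST] " + turn["content"] + " [/INST] ")
        elif turn["role"] == "assistant":
            parts.append(turn["content"] + " ")
        else:
            # Other roles are not covered by the example output in the README.
            raise ValueError(f"unsupported role: {turn['role']}")
    return "".join(parts)


prompt = format_chat([
    {"role": "user", "content": "你好"},
    {"role": "assistant", "content": "你好,請問需要什麼協助?"},
    {"role": "user", "content": "太棒了!"},
])
print(prompt)
```

For real use, prefer `tokenizer.apply_chat_template`, which stays in sync with the model's tokenizer configuration.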

## Citation

```
@article{MediaTek-Research2024breeze7b,
      title={Breeze-7B Technical Report},
      author={Chan-Jan Hsu and Chang-Le Liu and Feng-Ting Liao and Po-Chun Hsu and Yi-Chang Chen and Da-Shan Shiu},
      year={2024},
      eprint={2403.02712},
      archivePrefix={arXiv},
      primaryClass={cs.CL}
}
```

4

added_tokens.json

Normal file


@@ -0,0 +1,4 @@

{
  "<EOD>": 61873,
  "<PAD>": 61874
}

28

config.json

Normal file


@@ -0,0 +1,28 @@

{
  "_name_or_path": "/nobackup/mtklmadm_t2/mtk53570/Breeze-650G-32k-step1500-hf/",
  "architectures": [
    "MistralForCausalLM"
  ],
  "attention_dropout": 0.0,
  "bos_token_id": 1,
  "eos_token_id": 2,
  "hidden_act": "silu",
  "hidden_size": 4096,
  "initializer_range": 0.02,
  "intermediate_size": 14336,
  "max_position_embeddings": 32768,
  "model_type": "mistral",
  "num_attention_heads": 32,
  "num_hidden_layers": 32,
  "num_key_value_heads": 8,
  "output_router_logits": true,
  "pretraining_tp": 1,
  "rms_norm_eps": 1e-05,
  "rope_theta": 1000000.0,
  "sliding_window": 65536,
  "tie_word_embeddings": false,
  "torch_dtype": "bfloat16",
  "transformers_version": "4.38.0",
  "use_cache": false,
  "vocab_size": 62464
}
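
The values in this `config.json` are enough to estimate the model's parameter count. The sketch below assumes the usual Mistral-style layout, which is consistent with the tensor names in the weight map of `model.safetensors.index.json`: bias-free q/k/v/o projections with grouped-query attention, a three-matrix gated MLP, two RMSNorm weights per layer, a final norm, and untied input and output embeddings. The helper name `count_parameters` is illustrative.

```python
config = {
    "hidden_size": 4096,
    "intermediate_size": 14336,
    "num_attention_heads": 32,
    "num_hidden_layers": 32,
    "num_key_value_heads": 8,
    "tie_word_embeddings": False,
    "vocab_size": 62464,
}


def count_parameters(cfg):
    """Count weights for a Mistral-style decoder (assumed layout, no biases)."""
    h = cfg["hidden_size"]
    head_dim = h // cfg["num_attention_heads"]
    kv_dim = cfg["num_key_value_heads"] * head_dim
    attention = h * h + 2 * h * kv_dim + h * h  # q, k, v, o projections
    mlp = 3 * h * cfg["intermediate_size"]      # gate, up, down projections
    norms = 2 * h                               # input and post-attention norms
    per_layer = attention + mlp + norms
    embeddings = cfg["vocab_size"] * h
    lm_head = 0 if cfg["tie_word_embeddings"] else embeddings
    final_norm = h
    return cfg["num_hidden_layers"] * per_layer + embeddings + lm_head + final_norm


n_params = count_parameters(config)
print(n_params)      # 7491293184
print(n_params * 2)  # 14982586368 bytes in bfloat16
```

At two bytes per bfloat16 weight this gives 14,982,586,368 bytes, which equals the `total_size` recorded in `model.safetensors.index.json`.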

1

configuration.json

Normal file


@@ -0,0 +1 @@

{"framework": "pytorch", "task": "text-generation", "allow_remote": true}

6

generation_config.json

Normal file


@@ -0,0 +1,6 @@

{
  "_from_model_config": true,
  "bos_token_id": 1,
  "eos_token_id": 2,
  "transformers_version": "4.38.0"
}

3

model-00001-of-00004.safetensors

Normal file


@@ -0,0 +1,3 @@

version https://git-lfs.github.com/spec/v1
oid sha256:0aa500221b50e2cd5df4e8b9ee7741ec6b0e972243e864981d0712e7d6bf0db3
size 4957842176

3

model-00002-of-00004.safetensors

Normal file


@@ -0,0 +1,3 @@

version https://git-lfs.github.com/spec/v1
oid sha256:dc960c95aecd795f00eec175e559a8e1a2fcb27bd0269c5062ad328fe115b31b
size 4915916184

3

model-00003-of-00004.safetensors

Normal file


@@ -0,0 +1,3 @@

version https://git-lfs.github.com/spec/v1
oid sha256:42b50a00674895fab8f094c81ea6da8ac86377dd945f5eb9a55a942e9a6e7156
size 4597156656

3

model-00004-of-00004.safetensors

Normal file


@@ -0,0 +1,3 @@

version https://git-lfs.github.com/spec/v1
oid sha256:7630057b34a628c0b69981e0ae46698fa9edac53e9f06af106b47c78caf07545
size 511705216
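
Each `.safetensors` entry in this commit is a Git LFS pointer rather than the weights themselves: three `key value` lines giving the spec version, the content hash, and the size in bytes. A minimal sketch of reading such a pointer is shown below; the function name `parse_lfs_pointer` and the returned dictionary layout are illustrative choices.

```python
def parse_lfs_pointer(text):
    """Parse a Git LFS pointer file into its version, hash, and size fields."""
    fields = dict(line.split(" ", 1) for line in text.strip().splitlines())
    hash_algo, digest = fields["oid"].split(":", 1)
    return {
        "version": fields["version"],
        "hash_algo": hash_algo,
        "digest": digest,
        "size": int(fields["size"]),
    }


pointer_text = """version https://git-lfs.github.com/spec/v1
oid sha256:7630057b34a628c0b69981e0ae46698fa9edac53e9f06af106b47c78caf07545
size 511705216
"""

pointer = parse_lfs_pointer(pointer_text)
print(pointer["hash_algo"], pointer["size"])  # sha256 511705216
```

The `size` and `digest` fields can be used to verify a downloaded shard, for example by comparing the file length and its SHA-256 hash against the pointer.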

298

model.safetensors.index.json

Normal file


@@ -0,0 +1,298 @@

|

||||

{

|

||||

"metadata": {

|

||||

"total_size": 14982586368

|

||||

},

|

||||

"weight_map": {

|

||||

"lm_head.weight": "model-00004-of-00004.safetensors",

|

||||

"model.embed_tokens.weight": "model-00001-of-00004.safetensors",

|

||||

"model.layers.0.input_layernorm.weight": "model-00001-of-00004.safetensors",

|

||||

"model.layers.0.mlp.down_proj.weight": "model-00001-of-00004.safetensors",

|

||||

"model.layers.0.mlp.gate_proj.weight": "model-00001-of-00004.safetensors",

|

||||

"model.layers.0.mlp.up_proj.weight": "model-00001-of-00004.safetensors",

|

||||

"model.layers.0.post_attention_layernorm.weight": "model-00001-of-00004.safetensors",

|

||||

"model.layers.0.self_attn.k_proj.weight": "model-00001-of-00004.safetensors",

|

||||

"model.layers.0.self_attn.o_proj.weight": "model-00001-of-00004.safetensors",

|

||||

"model.layers.0.self_attn.q_proj.weight": "model-00001-of-00004.safetensors",

|

||||

"model.layers.0.self_attn.v_proj.weight": "model-00001-of-00004.safetensors",

|

||||

"model.layers.1.input_layernorm.weight": "model-00001-of-00004.safetensors",

|

||||

"model.layers.1.mlp.down_proj.weight": "model-00001-of-00004.safetensors",

|

||||

"model.layers.1.mlp.gate_proj.weight": "model-00001-of-00004.safetensors",

|

||||

"model.layers.1.mlp.up_proj.weight": "model-00001-of-00004.safetensors",

|

||||

"model.layers.1.post_attention_layernorm.weight": "model-00001-of-00004.safetensors",

|

||||

"model.layers.1.self_attn.k_proj.weight": "model-00001-of-00004.safetensors",

|

||||

"model.layers.1.self_attn.o_proj.weight": "model-00001-of-00004.safetensors",

|

||||

"model.layers.1.self_attn.q_proj.weight": "model-00001-of-00004.safetensors",

|

||||

"model.layers.1.self_attn.v_proj.weight": "model-00001-of-00004.safetensors",

|

||||

"model.layers.10.input_layernorm.weight": "model-00002-of-00004.safetensors",

|

||||

"model.layers.10.mlp.down_proj.weight": "model-00002-of-00004.safetensors",

|

||||

"model.layers.10.mlp.gate_proj.weight": "model-00002-of-00004.safetensors",

|

||||

"model.layers.10.mlp.up_proj.weight": "model-00002-of-00004.safetensors",

|

||||

"model.layers.10.post_attention_layernorm.weight": "model-00002-of-00004.safetensors",

|

||||

"model.layers.10.self_attn.k_proj.weight": "model-00001-of-00004.safetensors",

|

||||

"model.layers.10.self_attn.o_proj.weight": "model-00001-of-00004.safetensors",

|

||||

"model.layers.10.self_attn.q_proj.weight": "model-00001-of-00004.safetensors",

|

||||

"model.layers.10.self_attn.v_proj.weight": "model-00001-of-00004.safetensors",

|

||||

"model.layers.11.input_layernorm.weight": "model-00002-of-00004.safetensors",

|

||||

"model.layers.11.mlp.down_proj.weight": "model-00002-of-00004.safetensors",

|

||||

"model.layers.11.mlp.gate_proj.weight": "model-00002-of-00004.safetensors",

|

||||

"model.layers.11.mlp.up_proj.weight": "model-00002-of-00004.safetensors",

|

||||

"model.layers.11.post_attention_layernorm.weight": "model-00002-of-00004.safetensors",

|

||||

"model.layers.11.self_attn.k_proj.weight": "model-00002-of-00004.safetensors",

|

||||

"model.layers.11.self_attn.o_proj.weight": "model-00002-of-00004.safetensors",

|

||||

"model.layers.11.self_attn.q_proj.weight": "model-00002-of-00004.safetensors",

|

||||

"model.layers.11.self_attn.v_proj.weight": "model-00002-of-00004.safetensors",

|

||||

"model.layers.12.input_layernorm.weight": "model-00002-of-00004.safetensors",

|

||||

"model.layers.12.mlp.down_proj.weight": "model-00002-of-00004.safetensors",

|

||||

"model.layers.12.mlp.gate_proj.weight": "model-00002-of-00004.safetensors",

|

||||

"model.layers.12.mlp.up_proj.weight": "model-00002-of-00004.safetensors",

|

||||

"model.layers.12.post_attention_layernorm.weight": "model-00002-of-00004.safetensors",

|

||||

"model.layers.12.self_attn.k_proj.weight": "model-00002-of-00004.safetensors",

|

||||

"model.layers.12.self_attn.o_proj.weight": "model-00002-of-00004.safetensors",

|

||||

"model.layers.12.self_attn.q_proj.weight": "model-00002-of-00004.safetensors",

|

||||

"model.layers.12.self_attn.v_proj.weight": "model-00002-of-00004.safetensors",

|

||||

"model.layers.13.input_layernorm.weight": "model-00002-of-00004.safetensors",

|

||||

"model.layers.13.mlp.down_proj.weight": "model-00002-of-00004.safetensors",

|

||||

"model.layers.13.mlp.gate_proj.weight": "model-00002-of-00004.safetensors",

|

||||

"model.layers.13.mlp.up_proj.weight": "model-00002-of-00004.safetensors",

|

||||

"model.layers.13.post_attention_layernorm.weight": "model-00002-of-00004.safetensors",

|

||||

"model.layers.13.self_attn.k_proj.weight": "model-00002-of-00004.safetensors",

|

||||

"model.layers.13.self_attn.o_proj.weight": "model-00002-of-00004.safetensors",

|

||||

"model.layers.13.self_attn.q_proj.weight": "model-00002-of-00004.safetensors",

|

||||

"model.layers.13.self_attn.v_proj.weight": "model-00002-of-00004.safetensors",

|

||||

"model.layers.14.input_layernorm.weight": "model-00002-of-00004.safetensors",

|

||||

"model.layers.14.mlp.down_proj.weight": "model-00002-of-00004.safetensors",

|

||||

"model.layers.14.mlp.gate_proj.weight": "model-00002-of-00004.safetensors",

|

||||

"model.layers.14.mlp.up_proj.weight": "model-00002-of-00004.safetensors",

|

||||

"model.layers.14.post_attention_layernorm.weight": "model-00002-of-00004.safetensors",

|

||||

"model.layers.14.self_attn.k_proj.weight": "model-00002-of-00004.safetensors",

|

||||

"model.layers.14.self_attn.o_proj.weight": "model-00002-of-00004.safetensors",

|

||||

"model.layers.14.self_attn.q_proj.weight": "model-00002-of-00004.safetensors",

|

||||

"model.layers.14.self_attn.v_proj.weight": "model-00002-of-00004.safetensors",

|

||||

"model.layers.15.input_layernorm.weight": "model-00002-of-00004.safetensors",

|

||||

"model.layers.15.mlp.down_proj.weight": "model-00002-of-00004.safetensors",

|

||||

"model.layers.15.mlp.gate_proj.weight": "model-00002-of-00004.safetensors",

|

||||

"model.layers.15.mlp.up_proj.weight": "model-00002-of-00004.safetensors",

|

||||

"model.layers.15.post_attention_layernorm.weight": "model-00002-of-00004.safetensors",

|

||||

"model.layers.15.self_attn.k_proj.weight": "model-00002-of-00004.safetensors",

|

||||

"model.layers.15.self_attn.o_proj.weight": "model-00002-of-00004.safetensors",

|

||||

"model.layers.15.self_attn.q_proj.weight": "model-00002-of-00004.safetensors",

|

||||

"model.layers.15.self_attn.v_proj.weight": "model-00002-of-00004.safetensors",

|

||||

"model.layers.16.input_layernorm.weight": "model-00002-of-00004.safetensors",

|

||||

"model.layers.16.mlp.down_proj.weight": "model-00002-of-00004.safetensors",

|

||||

"model.layers.16.mlp.gate_proj.weight": "model-00002-of-00004.safetensors",

|

||||

"model.layers.16.mlp.up_proj.weight": "model-00002-of-00004.safetensors",

|

||||

"model.layers.16.post_attention_layernorm.weight": "model-00002-of-00004.safetensors",

|

||||

"model.layers.16.self_attn.k_proj.weight": "model-00002-of-00004.safetensors",

|

||||

"model.layers.16.self_attn.o_proj.weight": "model-00002-of-00004.safetensors",

|

||||

"model.layers.16.self_attn.q_proj.weight": "model-00002-of-00004.safetensors",

|

||||

"model.layers.16.self_attn.v_proj.weight": "model-00002-of-00004.safetensors",

|

||||

"model.layers.17.input_layernorm.weight": "model-00002-of-00004.safetensors",

|

||||

"model.layers.17.mlp.down_proj.weight": "model-00002-of-00004.safetensors",

|

||||

"model.layers.17.mlp.gate_proj.weight": "model-00002-of-00004.safetensors",

|

||||

"model.layers.17.mlp.up_proj.weight": "model-00002-of-00004.safetensors",

|

||||

"model.layers.17.post_attention_layernorm.weight": "model-00002-of-00004.safetensors",

|

||||

"model.layers.17.self_attn.k_proj.weight": "model-00002-of-00004.safetensors",

|

||||

"model.layers.17.self_attn.o_proj.weight": "model-00002-of-00004.safetensors",

|

||||

"model.layers.17.self_attn.q_proj.weight": "model-00002-of-00004.safetensors",

|

||||

"model.layers.17.self_attn.v_proj.weight": "model-00002-of-00004.safetensors",

|

||||

"model.layers.18.input_layernorm.weight": "model-00002-of-00004.safetensors",

|

||||

"model.layers.18.mlp.down_proj.weight": "model-00002-of-00004.safetensors",

|

||||

"model.layers.18.mlp.gate_proj.weight": "model-00002-of-00004.safetensors",

|

||||

"model.layers.18.mlp.up_proj.weight": "model-00002-of-00004.safetensors",

|

||||

"model.layers.18.post_attention_layernorm.weight": "model-00002-of-00004.safetensors",

|

||||

"model.layers.18.self_attn.k_proj.weight": "model-00002-of-00004.safetensors",

|

||||

"model.layers.18.self_attn.o_proj.weight": "model-00002-of-00004.safetensors",

|

||||

"model.layers.18.self_attn.q_proj.weight": "model-00002-of-00004.safetensors",

|

||||

"model.layers.18.self_attn.v_proj.weight": "model-00002-of-00004.safetensors",

|

||||

"model.layers.19.input_layernorm.weight": "model-00002-of-00004.safetensors",

|

||||

"model.layers.19.mlp.down_proj.weight": "model-00002-of-00004.safetensors",

|

||||

"model.layers.19.mlp.gate_proj.weight": "model-00002-of-00004.safetensors",

|

||||

"model.layers.19.mlp.up_proj.weight": "model-00002-of-00004.safetensors",

|

||||

"model.layers.19.post_attention_layernorm.weight": "model-00002-of-00004.safetensors",

|

||||

"model.layers.19.self_attn.k_proj.weight": "model-00002-of-00004.safetensors",

|

||||

"model.layers.19.self_attn.o_proj.weight": "model-00002-of-00004.safetensors",

|

||||

"model.layers.19.self_attn.q_proj.weight": "model-00002-of-00004.safetensors",

|

||||

"model.layers.19.self_attn.v_proj.weight": "model-00002-of-00004.safetensors",

|

||||

"model.layers.2.input_layernorm.weight": "model-00001-of-00004.safetensors",

|

||||

"model.layers.2.mlp.down_proj.weight": "model-00001-of-00004.safetensors",

|

||||

"model.layers.2.mlp.gate_proj.weight": "model-00001-of-00004.safetensors",

|

||||

"model.layers.2.mlp.up_proj.weight": "model-00001-of-00004.safetensors",

|

||||

"model.layers.2.post_attention_layernorm.weight": "model-00001-of-00004.safetensors",

|

||||

"model.layers.2.self_attn.k_proj.weight": "model-00001-of-00004.safetensors",

|

||||

"model.layers.2.self_attn.o_proj.weight": "model-00001-of-00004.safetensors",

|

||||

"model.layers.2.self_attn.q_proj.weight": "model-00001-of-00004.safetensors",

|

||||

"model.layers.2.self_attn.v_proj.weight": "model-00001-of-00004.safetensors",

|

||||

"model.layers.20.input_layernorm.weight": "model-00002-of-00004.safetensors",

|

||||

"model.layers.20.mlp.down_proj.weight": "model-00002-of-00004.safetensors",

|

||||

"model.layers.20.mlp.gate_proj.weight": "model-00002-of-00004.safetensors",

|

||||

"model.layers.20.mlp.up_proj.weight": "model-00002-of-00004.safetensors",

|

||||

"model.layers.20.post_attention_layernorm.weight": "model-00002-of-00004.safetensors",

|

||||

"model.layers.20.self_attn.k_proj.weight": "model-00002-of-00004.safetensors",

|

||||

"model.layers.20.self_attn.o_proj.weight": "model-00002-of-00004.safetensors",

|

||||

"model.layers.20.self_attn.q_proj.weight": "model-00002-of-00004.safetensors",

|

||||

"model.layers.20.self_attn.v_proj.weight": "model-00002-of-00004.safetensors",

|

||||

"model.layers.21.input_layernorm.weight": "model-00003-of-00004.safetensors",

|

||||

"model.layers.21.mlp.down_proj.weight": "model-00003-of-00004.safetensors",

|

||||

"model.layers.21.mlp.gate_proj.weight": "model-00002-of-00004.safetensors",

|

||||

"model.layers.21.mlp.up_proj.weight": "model-00003-of-00004.safetensors",

|

||||

"model.layers.21.post_attention_layernorm.weight": "model-00003-of-00004.safetensors",

|

||||

"model.layers.21.self_attn.k_proj.weight": "model-00002-of-00004.safetensors",

|

||||

"model.layers.21.self_attn.o_proj.weight": "model-00002-of-00004.safetensors",

|

||||

"model.layers.21.self_attn.q_proj.weight": "model-00002-of-00004.safetensors",

|

||||

"model.layers.21.self_attn.v_proj.weight": "model-00002-of-00004.safetensors",

|

||||

"model.layers.22.input_layernorm.weight": "model-00003-of-00004.safetensors",

|

||||

"model.layers.22.mlp.down_proj.weight": "model-00003-of-00004.safetensors",

|

||||

"model.layers.22.mlp.gate_proj.weight": "model-00003-of-00004.safetensors",

|

||||

"model.layers.22.mlp.up_proj.weight": "model-00003-of-00004.safetensors",

|

||||

"model.layers.22.post_attention_layernorm.weight": "model-00003-of-00004.safetensors",

|

||||

"model.layers.22.self_attn.k_proj.weight": "model-00003-of-00004.safetensors",

|

||||

"model.layers.22.self_attn.o_proj.weight": "model-00003-of-00004.safetensors",

|

||||

"model.layers.22.self_attn.q_proj.weight": "model-00003-of-00004.safetensors",

|

||||

"model.layers.22.self_attn.v_proj.weight": "model-00003-of-00004.safetensors",

|

||||

"model.layers.23.input_layernorm.weight": "model-00003-of-00004.safetensors",

|

||||

"model.layers.23.mlp.down_proj.weight": "model-00003-of-00004.safetensors",

|

||||

"model.layers.23.mlp.gate_proj.weight": "model-00003-of-00004.safetensors",

|

||||

"model.layers.23.mlp.up_proj.weight": "model-00003-of-00004.safetensors",

|

||||

"model.layers.23.post_attention_layernorm.weight": "model-00003-of-00004.safetensors",

|

||||

"model.layers.23.self_attn.k_proj.weight": "model-00003-of-00004.safetensors",

|

||||

"model.layers.23.self_attn.o_proj.weight": "model-00003-of-00004.safetensors",

|

||||

"model.layers.23.self_attn.q_proj.weight": "model-00003-of-00004.safetensors",

|

||||

"model.layers.23.self_attn.v_proj.weight": "model-00003-of-00004.safetensors",

|

||||

"model.layers.24.input_layernorm.weight": "model-00003-of-00004.safetensors",

|

||||

"model.layers.24.mlp.down_proj.weight": "model-00003-of-00004.safetensors",

|

||||

"model.layers.24.mlp.gate_proj.weight": "model-00003-of-00004.safetensors",

|

||||

"model.layers.24.mlp.up_proj.weight": "model-00003-of-00004.safetensors",

|

||||

"model.layers.24.post_attention_layernorm.weight": "model-00003-of-00004.safetensors",

|

||||

"model.layers.24.self_attn.k_proj.weight": "model-00003-of-00004.safetensors",

|

||||

"model.layers.24.self_attn.o_proj.weight": "model-00003-of-00004.safetensors",

|

||||

"model.layers.24.self_attn.q_proj.weight": "model-00003-of-00004.safetensors",

|

||||

"model.layers.24.self_attn.v_proj.weight": "model-00003-of-00004.safetensors",

|

||||

"model.layers.25.input_layernorm.weight": "model-00003-of-00004.safetensors",

|

||||

"model.layers.25.mlp.down_proj.weight": "model-00003-of-00004.safetensors",

|

||||

"model.layers.25.mlp.gate_proj.weight": "model-00003-of-00004.safetensors",

|

||||

"model.layers.25.mlp.up_proj.weight": "model-00003-of-00004.safetensors",

|

||||

"model.layers.25.post_attention_layernorm.weight": "model-00003-of-00004.safetensors",

|

||||

"model.layers.25.self_attn.k_proj.weight": "model-00003-of-00004.safetensors",

|

||||

"model.layers.25.self_attn.o_proj.weight": "model-00003-of-00004.safetensors",

|

||||

"model.layers.25.self_attn.q_proj.weight": "model-00003-of-00004.safetensors",

|

||||

"model.layers.25.self_attn.v_proj.weight": "model-00003-of-00004.safetensors",

|

||||

"model.layers.26.input_layernorm.weight": "model-00003-of-00004.safetensors",

|

||||

"model.layers.26.mlp.down_proj.weight": "model-00003-of-00004.safetensors",

|

||||

"model.layers.26.mlp.gate_proj.weight": "model-00003-of-00004.safetensors",

|

||||

"model.layers.26.mlp.up_proj.weight": "model-00003-of-00004.safetensors",

|

||||

"model.layers.26.post_attention_layernorm.weight": "model-00003-of-00004.safetensors",

|

||||

"model.layers.26.self_attn.k_proj.weight": "model-00003-of-00004.safetensors",

|

||||

"model.layers.26.self_attn.o_proj.weight": "model-00003-of-00004.safetensors",

|

||||

"model.layers.26.self_attn.q_proj.weight": "model-00003-of-00004.safetensors",

|

||||

"model.layers.26.self_attn.v_proj.weight": "model-00003-of-00004.safetensors",

|

||||

"model.layers.27.input_layernorm.weight": "model-00003-of-00004.safetensors",

|

||||

"model.layers.27.mlp.down_proj.weight": "model-00003-of-00004.safetensors",

|

||||

"model.layers.27.mlp.gate_proj.weight": "model-00003-of-00004.safetensors",

|

||||

"model.layers.27.mlp.up_proj.weight": "model-00003-of-00004.safetensors",

|

||||

"model.layers.27.post_attention_layernorm.weight": "model-00003-of-00004.safetensors",

|

||||

"model.layers.27.self_attn.k_proj.weight": "model-00003-of-00004.safetensors",

|

||||

"model.layers.27.self_attn.o_proj.weight": "model-00003-of-00004.safetensors",

|

||||

"model.layers.27.self_attn.q_proj.weight": "model-00003-of-00004.safetensors",

|

||||

"model.layers.27.self_attn.v_proj.weight": "model-00003-of-00004.safetensors",

|

||||

"model.layers.28.input_layernorm.weight": "model-00003-of-00004.safetensors",

|

||||

"model.layers.28.mlp.down_proj.weight": "model-00003-of-00004.safetensors",
"model.layers.28.mlp.gate_proj.weight": "model-00003-of-00004.safetensors",
"model.layers.28.mlp.up_proj.weight": "model-00003-of-00004.safetensors",
"model.layers.28.post_attention_layernorm.weight": "model-00003-of-00004.safetensors",
"model.layers.28.self_attn.k_proj.weight": "model-00003-of-00004.safetensors",
"model.layers.28.self_attn.o_proj.weight": "model-00003-of-00004.safetensors",
"model.layers.28.self_attn.q_proj.weight": "model-00003-of-00004.safetensors",
"model.layers.28.self_attn.v_proj.weight": "model-00003-of-00004.safetensors",
"model.layers.29.input_layernorm.weight": "model-00003-of-00004.safetensors",
"model.layers.29.mlp.down_proj.weight": "model-00003-of-00004.safetensors",
"model.layers.29.mlp.gate_proj.weight": "model-00003-of-00004.safetensors",
"model.layers.29.mlp.up_proj.weight": "model-00003-of-00004.safetensors",
"model.layers.29.post_attention_layernorm.weight": "model-00003-of-00004.safetensors",
"model.layers.29.self_attn.k_proj.weight": "model-00003-of-00004.safetensors",
"model.layers.29.self_attn.o_proj.weight": "model-00003-of-00004.safetensors",
"model.layers.29.self_attn.q_proj.weight": "model-00003-of-00004.safetensors",
"model.layers.29.self_attn.v_proj.weight": "model-00003-of-00004.safetensors",
"model.layers.3.input_layernorm.weight": "model-00001-of-00004.safetensors",
"model.layers.3.mlp.down_proj.weight": "model-00001-of-00004.safetensors",
"model.layers.3.mlp.gate_proj.weight": "model-00001-of-00004.safetensors",
"model.layers.3.mlp.up_proj.weight": "model-00001-of-00004.safetensors",
"model.layers.3.post_attention_layernorm.weight": "model-00001-of-00004.safetensors",
"model.layers.3.self_attn.k_proj.weight": "model-00001-of-00004.safetensors",
"model.layers.3.self_attn.o_proj.weight": "model-00001-of-00004.safetensors",
"model.layers.3.self_attn.q_proj.weight": "model-00001-of-00004.safetensors",
"model.layers.3.self_attn.v_proj.weight": "model-00001-of-00004.safetensors",
"model.layers.30.input_layernorm.weight": "model-00003-of-00004.safetensors",
"model.layers.30.mlp.down_proj.weight": "model-00003-of-00004.safetensors",
"model.layers.30.mlp.gate_proj.weight": "model-00003-of-00004.safetensors",
"model.layers.30.mlp.up_proj.weight": "model-00003-of-00004.safetensors",
"model.layers.30.post_attention_layernorm.weight": "model-00003-of-00004.safetensors",
"model.layers.30.self_attn.k_proj.weight": "model-00003-of-00004.safetensors",
"model.layers.30.self_attn.o_proj.weight": "model-00003-of-00004.safetensors",
"model.layers.30.self_attn.q_proj.weight": "model-00003-of-00004.safetensors",
"model.layers.30.self_attn.v_proj.weight": "model-00003-of-00004.safetensors",
"model.layers.31.input_layernorm.weight": "model-00003-of-00004.safetensors",
"model.layers.31.mlp.down_proj.weight": "model-00003-of-00004.safetensors",
"model.layers.31.mlp.gate_proj.weight": "model-00003-of-00004.safetensors",
"model.layers.31.mlp.up_proj.weight": "model-00003-of-00004.safetensors",
"model.layers.31.post_attention_layernorm.weight": "model-00003-of-00004.safetensors",
"model.layers.31.self_attn.k_proj.weight": "model-00003-of-00004.safetensors",
"model.layers.31.self_attn.o_proj.weight": "model-00003-of-00004.safetensors",
"model.layers.31.self_attn.q_proj.weight": "model-00003-of-00004.safetensors",
"model.layers.31.self_attn.v_proj.weight": "model-00003-of-00004.safetensors",
"model.layers.4.input_layernorm.weight": "model-00001-of-00004.safetensors",
"model.layers.4.mlp.down_proj.weight": "model-00001-of-00004.safetensors",
"model.layers.4.mlp.gate_proj.weight": "model-00001-of-00004.safetensors",
"model.layers.4.mlp.up_proj.weight": "model-00001-of-00004.safetensors",
"model.layers.4.post_attention_layernorm.weight": "model-00001-of-00004.safetensors",
"model.layers.4.self_attn.k_proj.weight": "model-00001-of-00004.safetensors",
"model.layers.4.self_attn.o_proj.weight": "model-00001-of-00004.safetensors",
"model.layers.4.self_attn.q_proj.weight": "model-00001-of-00004.safetensors",
"model.layers.4.self_attn.v_proj.weight": "model-00001-of-00004.safetensors",
"model.layers.5.input_layernorm.weight": "model-00001-of-00004.safetensors",
"model.layers.5.mlp.down_proj.weight": "model-00001-of-00004.safetensors",
"model.layers.5.mlp.gate_proj.weight": "model-00001-of-00004.safetensors",
"model.layers.5.mlp.up_proj.weight": "model-00001-of-00004.safetensors",
"model.layers.5.post_attention_layernorm.weight": "model-00001-of-00004.safetensors",
"model.layers.5.self_attn.k_proj.weight": "model-00001-of-00004.safetensors",
"model.layers.5.self_attn.o_proj.weight": "model-00001-of-00004.safetensors",
"model.layers.5.self_attn.q_proj.weight": "model-00001-of-00004.safetensors",
"model.layers.5.self_attn.v_proj.weight": "model-00001-of-00004.safetensors",
"model.layers.6.input_layernorm.weight": "model-00001-of-00004.safetensors",
"model.layers.6.mlp.down_proj.weight": "model-00001-of-00004.safetensors",
"model.layers.6.mlp.gate_proj.weight": "model-00001-of-00004.safetensors",
"model.layers.6.mlp.up_proj.weight": "model-00001-of-00004.safetensors",
"model.layers.6.post_attention_layernorm.weight": "model-00001-of-00004.safetensors",
"model.layers.6.self_attn.k_proj.weight": "model-00001-of-00004.safetensors",
"model.layers.6.self_attn.o_proj.weight": "model-00001-of-00004.safetensors",
"model.layers.6.self_attn.q_proj.weight": "model-00001-of-00004.safetensors",
"model.layers.6.self_attn.v_proj.weight": "model-00001-of-00004.safetensors",
"model.layers.7.input_layernorm.weight": "model-00001-of-00004.safetensors",
"model.layers.7.mlp.down_proj.weight": "model-00001-of-00004.safetensors",
"model.layers.7.mlp.gate_proj.weight": "model-00001-of-00004.safetensors",
"model.layers.7.mlp.up_proj.weight": "model-00001-of-00004.safetensors",
"model.layers.7.post_attention_layernorm.weight": "model-00001-of-00004.safetensors",
"model.layers.7.self_attn.k_proj.weight": "model-00001-of-00004.safetensors",
"model.layers.7.self_attn.o_proj.weight": "model-00001-of-00004.safetensors",
"model.layers.7.self_attn.q_proj.weight": "model-00001-of-00004.safetensors",
"model.layers.7.self_attn.v_proj.weight": "model-00001-of-00004.safetensors",
"model.layers.8.input_layernorm.weight": "model-00001-of-00004.safetensors",
"model.layers.8.mlp.down_proj.weight": "model-00001-of-00004.safetensors",
"model.layers.8.mlp.gate_proj.weight": "model-00001-of-00004.safetensors",
"model.layers.8.mlp.up_proj.weight": "model-00001-of-00004.safetensors",
"model.layers.8.post_attention_layernorm.weight": "model-00001-of-00004.safetensors",
"model.layers.8.self_attn.k_proj.weight": "model-00001-of-00004.safetensors",
"model.layers.8.self_attn.o_proj.weight": "model-00001-of-00004.safetensors",
"model.layers.8.self_attn.q_proj.weight": "model-00001-of-00004.safetensors",
"model.layers.8.self_attn.v_proj.weight": "model-00001-of-00004.safetensors",
"model.layers.9.input_layernorm.weight": "model-00001-of-00004.safetensors",
"model.layers.9.mlp.down_proj.weight": "model-00001-of-00004.safetensors",
"model.layers.9.mlp.gate_proj.weight": "model-00001-of-00004.safetensors",
"model.layers.9.mlp.up_proj.weight": "model-00001-of-00004.safetensors",
"model.layers.9.post_attention_layernorm.weight": "model-00001-of-00004.safetensors",
"model.layers.9.self_attn.k_proj.weight": "model-00001-of-00004.safetensors",
"model.layers.9.self_attn.o_proj.weight": "model-00001-of-00004.safetensors",
"model.layers.9.self_attn.q_proj.weight": "model-00001-of-00004.safetensors",
"model.layers.9.self_attn.v_proj.weight": "model-00001-of-00004.safetensors",
"model.norm.weight": "model-00003-of-00004.safetensors"
}
}
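The index file above maps each tensor name to the shard that stores it. As a minimal sketch of how such a `weight_map` could be inspected, the snippet below groups tensor names by shard. The helper name `group_by_shard` and the three-entry `SAMPLE_INDEX` are assumptions made for illustration; the entries are copied from the map above, but the sample is not the full index.

```python
import json
from collections import defaultdict


def group_by_shard(index: dict) -> dict:
    """Invert a checkpoint index: shard file name -> sorted tensor names."""
    shards = defaultdict(list)
    for tensor_name, shard_file in index["weight_map"].items():
        shards[shard_file].append(tensor_name)
    return {shard: sorted(names) for shard, names in shards.items()}


# Hypothetical three-entry excerpt; a real index would be read with json.load().
SAMPLE_INDEX = json.loads("""
{
  "weight_map": {
    "model.layers.31.self_attn.v_proj.weight": "model-00003-of-00004.safetensors",
    "model.layers.4.input_layernorm.weight": "model-00001-of-00004.safetensors",
    "model.norm.weight": "model-00003-of-00004.safetensors"
  }
}
""")

grouped = group_by_shard(SAMPLE_INDEX)
for shard, names in sorted(grouped.items()):
    print(shard, len(names))
```

Grouping by shard makes it easy to see which files must be present before a given layer can be loaded.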

3

pytorch_model-00001-of-00004.bin

Normal file


@@ -0,0 +1,3 @@

version https://git-lfs.github.com/spec/v1
oid sha256:5c87bb14462af203998b7361557468dae65c0e9183889a2d0c5e071640b60b47
size 4957863760

3

pytorch_model-00002-of-00004.bin

Normal file


@@ -0,0 +1,3 @@

version https://git-lfs.github.com/spec/v1
oid sha256:7cd665443d8ed1140e4063ece371e74b5798d8a9cae66f4c43432b061dd155a5
size 4915938762

3

pytorch_model-00003-of-00004.bin

Normal file


@@ -0,0 +1,3 @@

version https://git-lfs.github.com/spec/v1
oid sha256:4817dbf27429f9eae089e988312e0f0278bf150cc2c23aee18dfc6e63048bfac
size 4597178100

3

pytorch_model-00004-of-00004.bin

Normal file


@@ -0,0 +1,3 @@

version https://git-lfs.github.com/spec/v1
oid sha256:4af18a0bbbc6acb48247bddb56b3a9b0222adecd68969feb1ea4d81b86fe22e6
size 511706501
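Each `.bin` entry above is a Git LFS pointer (a `version`, an `oid`, and a `size` line) rather than the weights themselves. The following is a rough sketch, under stated assumptions, of how a downloaded blob could be checked against such a pointer. The helper names `parse_lfs_pointer` and `matches_pointer` are hypothetical and are not part of any Git LFS tooling; the pointer text is the one shown for `pytorch_model-00004-of-00004.bin`.

```python
import hashlib


def parse_lfs_pointer(text: str) -> dict:
    """Split 'key value' lines of a pointer file into typed fields."""
    fields = dict(line.split(" ", 1) for line in text.strip().splitlines())
    algo, digest = fields["oid"].split(":", 1)
    return {
        "version": fields["version"],
        "algo": algo,
        "digest": digest,
        "size": int(fields["size"]),
    }


def matches_pointer(data: bytes, pointer: dict) -> bool:
    """True when the blob has the recorded byte length and hash digest."""
    if len(data) != pointer["size"]:
        return False
    return hashlib.new(pointer["algo"], data).hexdigest() == pointer["digest"]


POINTER_TEXT = """version https://git-lfs.github.com/spec/v1
oid sha256:4af18a0bbbc6acb48247bddb56b3a9b0222adecd68969feb1ea4d81b86fe22e6
size 511706501
"""

pointer = parse_lfs_pointer(POINTER_TEXT)
print(pointer["algo"], pointer["size"])
```

For multi-gigabyte shards, hashing the file in chunks instead of holding it in memory would be the more practical variant; the in-memory form is kept here only for brevity.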

298

pytorch_model.bin.index.json

Normal file


@@ -0,0 +1,298 @@

{
"metadata": {
"total_size": 14982586368
},
"weight_map": {
"lm_head.weight": "pytorch_model-00004-of-00004.bin",
"model.embed_tokens.weight": "pytorch_model-00001-of-00004.bin",
"model.layers.0.input_layernorm.weight": "pytorch_model-00001-of-00004.bin",
"model.layers.0.mlp.down_proj.weight": "pytorch_model-00001-of-00004.bin",
"model.layers.0.mlp.gate_proj.weight": "pytorch_model-00001-of-00004.bin",
"model.layers.0.mlp.up_proj.weight": "pytorch_model-00001-of-00004.bin",
"model.layers.0.post_attention_layernorm.weight": "pytorch_model-00001-of-00004.bin",
"model.layers.0.self_attn.k_proj.weight": "pytorch_model-00001-of-00004.bin",
"model.layers.0.self_attn.o_proj.weight": "pytorch_model-00001-of-00004.bin",
"model.layers.0.self_attn.q_proj.weight": "pytorch_model-00001-of-00004.bin",
"model.layers.0.self_attn.v_proj.weight": "pytorch_model-00001-of-00004.bin",
"model.layers.1.input_layernorm.weight": "pytorch_model-00001-of-00004.bin",
"model.layers.1.mlp.down_proj.weight": "pytorch_model-00001-of-00004.bin",
"model.layers.1.mlp.gate_proj.weight": "pytorch_model-00001-of-00004.bin",
"model.layers.1.mlp.up_proj.weight": "pytorch_model-00001-of-00004.bin",
"model.layers.1.post_attention_layernorm.weight": "pytorch_model-00001-of-00004.bin",
"model.layers.1.self_attn.k_proj.weight": "pytorch_model-00001-of-00004.bin",
"model.layers.1.self_attn.o_proj.weight": "pytorch_model-00001-of-00004.bin",
"model.layers.1.self_attn.q_proj.weight": "pytorch_model-00001-of-00004.bin",
"model.layers.1.self_attn.v_proj.weight": "pytorch_model-00001-of-00004.bin",

"model.layers.10.input_layernorm.weight": "pytorch_model-00002-of-00004.bin",
"model.layers.10.mlp.down_proj.weight": "pytorch_model-00002-of-00004.bin",
"model.layers.10.mlp.gate_proj.weight": "pytorch_model-00002-of-00004.bin",
"model.layers.10.mlp.up_proj.weight": "pytorch_model-00002-of-00004.bin",
"model.layers.10.post_attention_layernorm.weight": "pytorch_model-00002-of-00004.bin",
"model.layers.10.self_attn.k_proj.weight": "pytorch_model-00001-of-00004.bin",
"model.layers.10.self_attn.o_proj.weight": "pytorch_model-00001-of-00004.bin",
"model.layers.10.self_attn.q_proj.weight": "pytorch_model-00001-of-00004.bin",
"model.layers.10.self_attn.v_proj.weight": "pytorch_model-00001-of-00004.bin",
"model.layers.11.input_layernorm.weight": "pytorch_model-00002-of-00004.bin",
"model.layers.11.mlp.down_proj.weight": "pytorch_model-00002-of-00004.bin",
"model.layers.11.mlp.gate_proj.weight": "pytorch_model-00002-of-00004.bin",
"model.layers.11.mlp.up_proj.weight": "pytorch_model-00002-of-00004.bin",
"model.layers.11.post_attention_layernorm.weight": "pytorch_model-00002-of-00004.bin",
"model.layers.11.self_attn.k_proj.weight": "pytorch_model-00002-of-00004.bin",
"model.layers.11.self_attn.o_proj.weight": "pytorch_model-00002-of-00004.bin",
"model.layers.11.self_attn.q_proj.weight": "pytorch_model-00002-of-00004.bin",
"model.layers.11.self_attn.v_proj.weight": "pytorch_model-00002-of-00004.bin",
"model.layers.12.input_layernorm.weight": "pytorch_model-00002-of-00004.bin",
"model.layers.12.mlp.down_proj.weight": "pytorch_model-00002-of-00004.bin",
"model.layers.12.mlp.gate_proj.weight": "pytorch_model-00002-of-00004.bin",
"model.layers.12.mlp.up_proj.weight": "pytorch_model-00002-of-00004.bin",
"model.layers.12.post_attention_layernorm.weight": "pytorch_model-00002-of-00004.bin",
"model.layers.12.self_attn.k_proj.weight": "pytorch_model-00002-of-00004.bin",
"model.layers.12.self_attn.o_proj.weight": "pytorch_model-00002-of-00004.bin",
"model.layers.12.self_attn.q_proj.weight": "pytorch_model-00002-of-00004.bin",
"model.layers.12.self_attn.v_proj.weight": "pytorch_model-00002-of-00004.bin",
"model.layers.13.input_layernorm.weight": "pytorch_model-00002-of-00004.bin",
"model.layers.13.mlp.down_proj.weight": "pytorch_model-00002-of-00004.bin",
"model.layers.13.mlp.gate_proj.weight": "pytorch_model-00002-of-00004.bin",
"model.layers.13.mlp.up_proj.weight": "pytorch_model-00002-of-00004.bin",
"model.layers.13.post_attention_layernorm.weight": "pytorch_model-00002-of-00004.bin",
"model.layers.13.self_attn.k_proj.weight": "pytorch_model-00002-of-00004.bin",
"model.layers.13.self_attn.o_proj.weight": "pytorch_model-00002-of-00004.bin",
"model.layers.13.self_attn.q_proj.weight": "pytorch_model-00002-of-00004.bin",
"model.layers.13.self_attn.v_proj.weight": "pytorch_model-00002-of-00004.bin",
"model.layers.14.input_layernorm.weight": "pytorch_model-00002-of-00004.bin",
"model.layers.14.mlp.down_proj.weight": "pytorch_model-00002-of-00004.bin",
"model.layers.14.mlp.gate_proj.weight": "pytorch_model-00002-of-00004.bin",
"model.layers.14.mlp.up_proj.weight": "pytorch_model-00002-of-00004.bin",
"model.layers.14.post_attention_layernorm.weight": "pytorch_model-00002-of-00004.bin",
"model.layers.14.self_attn.k_proj.weight": "pytorch_model-00002-of-00004.bin",
"model.layers.14.self_attn.o_proj.weight": "pytorch_model-00002-of-00004.bin",
"model.layers.14.self_attn.q_proj.weight": "pytorch_model-00002-of-00004.bin",
"model.layers.14.self_attn.v_proj.weight": "pytorch_model-00002-of-00004.bin",
"model.layers.15.input_layernorm.weight": "pytorch_model-00002-of-00004.bin",
"model.layers.15.mlp.down_proj.weight": "pytorch_model-00002-of-00004.bin",
"model.layers.15.mlp.gate_proj.weight": "pytorch_model-00002-of-00004.bin",
"model.layers.15.mlp.up_proj.weight": "pytorch_model-00002-of-00004.bin",
"model.layers.15.post_attention_layernorm.weight": "pytorch_model-00002-of-00004.bin",
"model.layers.15.self_attn.k_proj.weight": "pytorch_model-00002-of-00004.bin",
"model.layers.15.self_attn.o_proj.weight": "pytorch_model-00002-of-00004.bin",
"model.layers.15.self_attn.q_proj.weight": "pytorch_model-00002-of-00004.bin",
"model.layers.15.self_attn.v_proj.weight": "pytorch_model-00002-of-00004.bin",
"model.layers.16.input_layernorm.weight": "pytorch_model-00002-of-00004.bin",
"model.layers.16.mlp.down_proj.weight": "pytorch_model-00002-of-00004.bin",
"model.layers.16.mlp.gate_proj.weight": "pytorch_model-00002-of-00004.bin",
"model.layers.16.mlp.up_proj.weight": "pytorch_model-00002-of-00004.bin",
"model.layers.16.post_attention_layernorm.weight": "pytorch_model-00002-of-00004.bin",
"model.layers.16.self_attn.k_proj.weight": "pytorch_model-00002-of-00004.bin",
"model.layers.16.self_attn.o_proj.weight": "pytorch_model-00002-of-00004.bin",
"model.layers.16.self_attn.q_proj.weight": "pytorch_model-00002-of-00004.bin",
"model.layers.16.self_attn.v_proj.weight": "pytorch_model-00002-of-00004.bin",
"model.layers.17.input_layernorm.weight": "pytorch_model-00002-of-00004.bin",
"model.layers.17.mlp.down_proj.weight": "pytorch_model-00002-of-00004.bin",
"model.layers.17.mlp.gate_proj.weight": "pytorch_model-00002-of-00004.bin",
"model.layers.17.mlp.up_proj.weight": "pytorch_model-00002-of-00004.bin",
"model.layers.17.post_attention_layernorm.weight": "pytorch_model-00002-of-00004.bin",
"model.layers.17.self_attn.k_proj.weight": "pytorch_model-00002-of-00004.bin",
"model.layers.17.self_attn.o_proj.weight": "pytorch_model-00002-of-00004.bin",
"model.layers.17.self_attn.q_proj.weight": "pytorch_model-00002-of-00004.bin",
"model.layers.17.self_attn.v_proj.weight": "pytorch_model-00002-of-00004.bin",
"model.layers.18.input_layernorm.weight": "pytorch_model-00002-of-00004.bin",
"model.layers.18.mlp.down_proj.weight": "pytorch_model-00002-of-00004.bin",
"model.layers.18.mlp.gate_proj.weight": "pytorch_model-00002-of-00004.bin",
"model.layers.18.mlp.up_proj.weight": "pytorch_model-00002-of-00004.bin",
"model.layers.18.post_attention_layernorm.weight": "pytorch_model-00002-of-00004.bin",
"model.layers.18.self_attn.k_proj.weight": "pytorch_model-00002-of-00004.bin",
"model.layers.18.self_attn.o_proj.weight": "pytorch_model-00002-of-00004.bin",
"model.layers.18.self_attn.q_proj.weight": "pytorch_model-00002-of-00004.bin",
"model.layers.18.self_attn.v_proj.weight": "pytorch_model-00002-of-00004.bin",
"model.layers.19.input_layernorm.weight": "pytorch_model-00002-of-00004.bin",
"model.layers.19.mlp.down_proj.weight": "pytorch_model-00002-of-00004.bin",
"model.layers.19.mlp.gate_proj.weight": "pytorch_model-00002-of-00004.bin",
"model.layers.19.mlp.up_proj.weight": "pytorch_model-00002-of-00004.bin",
"model.layers.19.post_attention_layernorm.weight": "pytorch_model-00002-of-00004.bin",
"model.layers.19.self_attn.k_proj.weight": "pytorch_model-00002-of-00004.bin",
"model.layers.19.self_attn.o_proj.weight": "pytorch_model-00002-of-00004.bin",
"model.layers.19.self_attn.q_proj.weight": "pytorch_model-00002-of-00004.bin",
"model.layers.19.self_attn.v_proj.weight": "pytorch_model-00002-of-00004.bin",

"model.layers.2.input_layernorm.weight": "pytorch_model-00001-of-00004.bin",
"model.layers.2.mlp.down_proj.weight": "pytorch_model-00001-of-00004.bin",
"model.layers.2.mlp.gate_proj.weight": "pytorch_model-00001-of-00004.bin",
"model.layers.2.mlp.up_proj.weight": "pytorch_model-00001-of-00004.bin",
"model.layers.2.post_attention_layernorm.weight": "pytorch_model-00001-of-00004.bin",
"model.layers.2.self_attn.k_proj.weight": "pytorch_model-00001-of-00004.bin",
"model.layers.2.self_attn.o_proj.weight": "pytorch_model-00001-of-00004.bin",
"model.layers.2.self_attn.q_proj.weight": "pytorch_model-00001-of-00004.bin",
"model.layers.2.self_attn.v_proj.weight": "pytorch_model-00001-of-00004.bin",
"model.layers.20.input_layernorm.weight": "pytorch_model-00002-of-00004.bin",
"model.layers.20.mlp.down_proj.weight": "pytorch_model-00002-of-00004.bin",
"model.layers.20.mlp.gate_proj.weight": "pytorch_model-00002-of-00004.bin",
"model.layers.20.mlp.up_proj.weight": "pytorch_model-00002-of-00004.bin",
"model.layers.20.post_attention_layernorm.weight": "pytorch_model-00002-of-00004.bin",
"model.layers.20.self_attn.k_proj.weight": "pytorch_model-00002-of-00004.bin",
"model.layers.20.self_attn.o_proj.weight": "pytorch_model-00002-of-00004.bin",
"model.layers.20.self_attn.q_proj.weight": "pytorch_model-00002-of-00004.bin",
"model.layers.20.self_attn.v_proj.weight": "pytorch_model-00002-of-00004.bin",
"model.layers.21.input_layernorm.weight": "pytorch_model-00003-of-00004.bin",
"model.layers.21.mlp.down_proj.weight": "pytorch_model-00003-of-00004.bin",
"model.layers.21.mlp.gate_proj.weight": "pytorch_model-00002-of-00004.bin",
"model.layers.21.mlp.up_proj.weight": "pytorch_model-00003-of-00004.bin",
"model.layers.21.post_attention_layernorm.weight": "pytorch_model-00003-of-00004.bin",
"model.layers.21.self_attn.k_proj.weight": "pytorch_model-00002-of-00004.bin",
"model.layers.21.self_attn.o_proj.weight": "pytorch_model-00002-of-00004.bin",
"model.layers.21.self_attn.q_proj.weight": "pytorch_model-00002-of-00004.bin",
"model.layers.21.self_attn.v_proj.weight": "pytorch_model-00002-of-00004.bin",
"model.layers.22.input_layernorm.weight": "pytorch_model-00003-of-00004.bin",
"model.layers.22.mlp.down_proj.weight": "pytorch_model-00003-of-00004.bin",
"model.layers.22.mlp.gate_proj.weight": "pytorch_model-00003-of-00004.bin",
"model.layers.22.mlp.up_proj.weight": "pytorch_model-00003-of-00004.bin",
"model.layers.22.post_attention_layernorm.weight": "pytorch_model-00003-of-00004.bin",
"model.layers.22.self_attn.k_proj.weight": "pytorch_model-00003-of-00004.bin",
"model.layers.22.self_attn.o_proj.weight": "pytorch_model-00003-of-00004.bin",
"model.layers.22.self_attn.q_proj.weight": "pytorch_model-00003-of-00004.bin",
"model.layers.22.self_attn.v_proj.weight": "pytorch_model-00003-of-00004.bin",
"model.layers.23.input_layernorm.weight": "pytorch_model-00003-of-00004.bin",
"model.layers.23.mlp.down_proj.weight": "pytorch_model-00003-of-00004.bin",
"model.layers.23.mlp.gate_proj.weight": "pytorch_model-00003-of-00004.bin",
"model.layers.23.mlp.up_proj.weight": "pytorch_model-00003-of-00004.bin",
"model.layers.23.post_attention_layernorm.weight": "pytorch_model-00003-of-00004.bin",
"model.layers.23.self_attn.k_proj.weight": "pytorch_model-00003-of-00004.bin",
"model.layers.23.self_attn.o_proj.weight": "pytorch_model-00003-of-00004.bin",
"model.layers.23.self_attn.q_proj.weight": "pytorch_model-00003-of-00004.bin",
"model.layers.23.self_attn.v_proj.weight": "pytorch_model-00003-of-00004.bin",
"model.layers.24.input_layernorm.weight": "pytorch_model-00003-of-00004.bin",
"model.layers.24.mlp.down_proj.weight": "pytorch_model-00003-of-00004.bin",
"model.layers.24.mlp.gate_proj.weight": "pytorch_model-00003-of-00004.bin",
"model.layers.24.mlp.up_proj.weight": "pytorch_model-00003-of-00004.bin",
"model.layers.24.post_attention_layernorm.weight": "pytorch_model-00003-of-00004.bin",
"model.layers.24.self_attn.k_proj.weight": "pytorch_model-00003-of-00004.bin",
"model.layers.24.self_attn.o_proj.weight": "pytorch_model-00003-of-00004.bin",
"model.layers.24.self_attn.q_proj.weight": "pytorch_model-00003-of-00004.bin",
"model.layers.24.self_attn.v_proj.weight": "pytorch_model-00003-of-00004.bin",
"model.layers.25.input_layernorm.weight": "pytorch_model-00003-of-00004.bin",
"model.layers.25.mlp.down_proj.weight": "pytorch_model-00003-of-00004.bin",
"model.layers.25.mlp.gate_proj.weight": "pytorch_model-00003-of-00004.bin",
"model.layers.25.mlp.up_proj.weight": "pytorch_model-00003-of-00004.bin",
"model.layers.25.post_attention_layernorm.weight": "pytorch_model-00003-of-00004.bin",
"model.layers.25.self_attn.k_proj.weight": "pytorch_model-00003-of-00004.bin",
"model.layers.25.self_attn.o_proj.weight": "pytorch_model-00003-of-00004.bin",
"model.layers.25.self_attn.q_proj.weight": "pytorch_model-00003-of-00004.bin",
"model.layers.25.self_attn.v_proj.weight": "pytorch_model-00003-of-00004.bin",
"model.layers.26.input_layernorm.weight": "pytorch_model-00003-of-00004.bin",
"model.layers.26.mlp.down_proj.weight": "pytorch_model-00003-of-00004.bin",
"model.layers.26.mlp.gate_proj.weight": "pytorch_model-00003-of-00004.bin",
"model.layers.26.mlp.up_proj.weight": "pytorch_model-00003-of-00004.bin",
"model.layers.26.post_attention_layernorm.weight": "pytorch_model-00003-of-00004.bin",
"model.layers.26.self_attn.k_proj.weight": "pytorch_model-00003-of-00004.bin",
"model.layers.26.self_attn.o_proj.weight": "pytorch_model-00003-of-00004.bin",
"model.layers.26.self_attn.q_proj.weight": "pytorch_model-00003-of-00004.bin",
"model.layers.26.self_attn.v_proj.weight": "pytorch_model-00003-of-00004.bin",
"model.layers.27.input_layernorm.weight": "pytorch_model-00003-of-00004.bin",
"model.layers.27.mlp.down_proj.weight": "pytorch_model-00003-of-00004.bin",
"model.layers.27.mlp.gate_proj.weight": "pytorch_model-00003-of-00004.bin",
"model.layers.27.mlp.up_proj.weight": "pytorch_model-00003-of-00004.bin",
"model.layers.27.post_attention_layernorm.weight": "pytorch_model-00003-of-00004.bin",
"model.layers.27.self_attn.k_proj.weight": "pytorch_model-00003-of-00004.bin",
"model.layers.27.self_attn.o_proj.weight": "pytorch_model-00003-of-00004.bin",
"model.layers.27.self_attn.q_proj.weight": "pytorch_model-00003-of-00004.bin",
"model.layers.27.self_attn.v_proj.weight": "pytorch_model-00003-of-00004.bin",
"model.layers.28.input_layernorm.weight": "pytorch_model-00003-of-00004.bin",
"model.layers.28.mlp.down_proj.weight": "pytorch_model-00003-of-00004.bin",
"model.layers.28.mlp.gate_proj.weight": "pytorch_model-00003-of-00004.bin",
"model.layers.28.mlp.up_proj.weight": "pytorch_model-00003-of-00004.bin",
"model.layers.28.post_attention_layernorm.weight": "pytorch_model-00003-of-00004.bin",
"model.layers.28.self_attn.k_proj.weight": "pytorch_model-00003-of-00004.bin",
"model.layers.28.self_attn.o_proj.weight": "pytorch_model-00003-of-00004.bin",
"model.layers.28.self_attn.q_proj.weight": "pytorch_model-00003-of-00004.bin",
"model.layers.28.self_attn.v_proj.weight": "pytorch_model-00003-of-00004.bin",
"model.layers.29.input_layernorm.weight": "pytorch_model-00003-of-00004.bin",
"model.layers.29.mlp.down_proj.weight": "pytorch_model-00003-of-00004.bin",
"model.layers.29.mlp.gate_proj.weight": "pytorch_model-00003-of-00004.bin",
"model.layers.29.mlp.up_proj.weight": "pytorch_model-00003-of-00004.bin",
"model.layers.29.post_attention_layernorm.weight": "pytorch_model-00003-of-00004.bin",
"model.layers.29.self_attn.k_proj.weight": "pytorch_model-00003-of-00004.bin",
"model.layers.29.self_attn.o_proj.weight": "pytorch_model-00003-of-00004.bin",
"model.layers.29.self_attn.q_proj.weight": "pytorch_model-00003-of-00004.bin",
"model.layers.29.self_attn.v_proj.weight": "pytorch_model-00003-of-00004.bin",

"model.layers.3.input_layernorm.weight": "pytorch_model-00001-of-00004.bin",
"model.layers.3.mlp.down_proj.weight": "pytorch_model-00001-of-00004.bin",
"model.layers.3.mlp.gate_proj.weight": "pytorch_model-00001-of-00004.bin",
"model.layers.3.mlp.up_proj.weight": "pytorch_model-00001-of-00004.bin",
"model.layers.3.post_attention_layernorm.weight": "pytorch_model-00001-of-00004.bin",
"model.layers.3.self_attn.k_proj.weight": "pytorch_model-00001-of-00004.bin",
"model.layers.3.self_attn.o_proj.weight": "pytorch_model-00001-of-00004.bin",
"model.layers.3.self_attn.q_proj.weight": "pytorch_model-00001-of-00004.bin",
"model.layers.3.self_attn.v_proj.weight": "pytorch_model-00001-of-00004.bin",
"model.layers.30.input_layernorm.weight": "pytorch_model-00003-of-00004.bin",
"model.layers.30.mlp.down_proj.weight": "pytorch_model-00003-of-00004.bin",
"model.layers.30.mlp.gate_proj.weight": "pytorch_model-00003-of-00004.bin",
"model.layers.30.mlp.up_proj.weight": "pytorch_model-00003-of-00004.bin",
"model.layers.30.post_attention_layernorm.weight": "pytorch_model-00003-of-00004.bin",
"model.layers.30.self_attn.k_proj.weight": "pytorch_model-00003-of-00004.bin",
"model.layers.30.self_attn.o_proj.weight": "pytorch_model-00003-of-00004.bin",
"model.layers.30.self_attn.q_proj.weight": "pytorch_model-00003-of-00004.bin",
"model.layers.30.self_attn.v_proj.weight": "pytorch_model-00003-of-00004.bin",
"model.layers.31.input_layernorm.weight": "pytorch_model-00003-of-00004.bin",
"model.layers.31.mlp.down_proj.weight": "pytorch_model-00003-of-00004.bin",
"model.layers.31.mlp.gate_proj.weight": "pytorch_model-00003-of-00004.bin",
"model.layers.31.mlp.up_proj.weight": "pytorch_model-00003-of-00004.bin",
"model.layers.31.post_attention_layernorm.weight": "pytorch_model-00003-of-00004.bin",
"model.layers.31.self_attn.k_proj.weight": "pytorch_model-00003-of-00004.bin",
"model.layers.31.self_attn.o_proj.weight": "pytorch_model-00003-of-00004.bin",
"model.layers.31.self_attn.q_proj.weight": "pytorch_model-00003-of-00004.bin",
"model.layers.31.self_attn.v_proj.weight": "pytorch_model-00003-of-00004.bin",
"model.layers.4.input_layernorm.weight": "pytorch_model-00001-of-00004.bin",
"model.layers.4.mlp.down_proj.weight": "pytorch_model-00001-of-00004.bin",
"model.layers.4.mlp.gate_proj.weight": "pytorch_model-00001-of-00004.bin",
"model.layers.4.mlp.up_proj.weight": "pytorch_model-00001-of-00004.bin",
"model.layers.4.post_attention_layernorm.weight": "pytorch_model-00001-of-00004.bin",
"model.layers.4.self_attn.k_proj.weight": "pytorch_model-00001-of-00004.bin",
"model.layers.4.self_attn.o_proj.weight": "pytorch_model-00001-of-00004.bin",
"model.layers.4.self_attn.q_proj.weight": "pytorch_model-00001-of-00004.bin",
"model.layers.4.self_attn.v_proj.weight": "pytorch_model-00001-of-00004.bin",
"model.layers.5.input_layernorm.weight": "pytorch_model-00001-of-00004.bin",
"model.layers.5.mlp.down_proj.weight": "pytorch_model-00001-of-00004.bin",
"model.layers.5.mlp.gate_proj.weight": "pytorch_model-00001-of-00004.bin",
"model.layers.5.mlp.up_proj.weight": "pytorch_model-00001-of-00004.bin",
"model.layers.5.post_attention_layernorm.weight": "pytorch_model-00001-of-00004.bin",
"model.layers.5.self_attn.k_proj.weight": "pytorch_model-00001-of-00004.bin",
"model.layers.5.self_attn.o_proj.weight": "pytorch_model-00001-of-00004.bin",
"model.layers.5.self_attn.q_proj.weight": "pytorch_model-00001-of-00004.bin",
"model.layers.5.self_attn.v_proj.weight": "pytorch_model-00001-of-00004.bin",
"model.layers.6.input_layernorm.weight": "pytorch_model-00001-of-00004.bin",
"model.layers.6.mlp.down_proj.weight": "pytorch_model-00001-of-00004.bin",
"model.layers.6.mlp.gate_proj.weight": "pytorch_model-00001-of-00004.bin",
"model.layers.6.mlp.up_proj.weight": "pytorch_model-00001-of-00004.bin",
"model.layers.6.post_attention_layernorm.weight": "pytorch_model-00001-of-00004.bin",
"model.layers.6.self_attn.k_proj.weight": "pytorch_model-00001-of-00004.bin",
"model.layers.6.self_attn.o_proj.weight": "pytorch_model-00001-of-00004.bin",
"model.layers.6.self_attn.q_proj.weight": "pytorch_model-00001-of-00004.bin",
"model.layers.6.self_attn.v_proj.weight": "pytorch_model-00001-of-00004.bin",
"model.layers.7.input_layernorm.weight": "pytorch_model-00001-of-00004.bin",
"model.layers.7.mlp.down_proj.weight": "pytorch_model-00001-of-00004.bin",
"model.layers.7.mlp.gate_proj.weight": "pytorch_model-00001-of-00004.bin",
"model.layers.7.mlp.up_proj.weight": "pytorch_model-00001-of-00004.bin",
"model.layers.7.post_attention_layernorm.weight": "pytorch_model-00001-of-00004.bin",
"model.layers.7.self_attn.k_proj.weight": "pytorch_model-00001-of-00004.bin",
"model.layers.7.self_attn.o_proj.weight": "pytorch_model-00001-of-00004.bin",
"model.layers.7.self_attn.q_proj.weight": "pytorch_model-00001-of-00004.bin",
"model.layers.7.self_attn.v_proj.weight": "pytorch_model-00001-of-00004.bin",
"model.layers.8.input_layernorm.weight": "pytorch_model-00001-of-00004.bin",
"model.layers.8.mlp.down_proj.weight": "pytorch_model-00001-of-00004.bin",
"model.layers.8.mlp.gate_proj.weight": "pytorch_model-00001-of-00004.bin",
"model.layers.8.mlp.up_proj.weight": "pytorch_model-00001-of-00004.bin",
"model.layers.8.post_attention_layernorm.weight": "pytorch_model-00001-of-00004.bin",
"model.layers.8.self_attn.k_proj.weight": "pytorch_model-00001-of-00004.bin",
"model.layers.8.self_attn.o_proj.weight": "pytorch_model-00001-of-00004.bin",
"model.layers.8.self_attn.q_proj.weight": "pytorch_model-00001-of-00004.bin",
"model.layers.8.self_attn.v_proj.weight": "pytorch_model-00001-of-00004.bin",
"model.layers.9.input_layernorm.weight": "pytorch_model-00001-of-00004.bin",
"model.layers.9.mlp.down_proj.weight": "pytorch_model-00001-of-00004.bin",
"model.layers.9.mlp.gate_proj.weight": "pytorch_model-00001-of-00004.bin",
"model.layers.9.mlp.up_proj.weight": "pytorch_model-00001-of-00004.bin",
"model.layers.9.post_attention_layernorm.weight": "pytorch_model-00001-of-00004.bin",
"model.layers.9.self_attn.k_proj.weight": "pytorch_model-00001-of-00004.bin",
"model.layers.9.self_attn.o_proj.weight": "pytorch_model-00001-of-00004.bin",