Documentation | Users Forum | Slack

Latest News🔥

- [2025/11] Initial release of vLLM Kunlun

Overview

vLLM Kunlun (vllm-kunlun) is a community-maintained hardware plugin for running vLLM on the Kunlun XPU. It is the recommended way to integrate the Kunlun backend within the vLLM community and follows the principles outlined in the [RFC]: Hardware pluggable. The plugin provides a hardware-pluggable interface that decouples the Kunlun XPU integration from vLLM itself.

With the vLLM Kunlun plugin, popular open-source models, including Transformer-like, Mixture-of-Experts, Embedding, and Multi-modal LLMs, can run on the Kunlun XPU.
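As an illustration, the sketch below uses vLLM's standard offline inference API (`LLM` and `SamplingParams`). It assumes that, once vllm-kunlun is installed, vLLM picks up the Kunlun platform without further code changes; the model name is only an example taken from the Qwen2.5 family and may need to be replaced with a local path. Any Kunlun-specific options are not covered here, so refer to the QuickStart for those.

```python
def generate(prompts, model="Qwen/Qwen2.5-7B-Instruct", max_tokens=64):
    """Run offline batch generation and return one completion per prompt.

    The model name is illustrative; any supported model should work the same way.
    """
    # Imported lazily so this module can be loaded on machines without vLLM.
    from vllm import LLM, SamplingParams

    llm = LLM(model=model)
    params = SamplingParams(temperature=0.8, max_tokens=max_tokens)
    outputs = llm.generate(prompts, params)
    # Each RequestOutput holds a list of completions; take the first one.
    return [out.outputs[0].text for out in outputs]
```

On a machine with the prerequisites installed, `generate(["Hello, my name is"])` would return a list with a single completion string.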

Prerequisites

- Hardware: Kunlun3 P800

- OS: Ubuntu 22.04

- Software:
  - Python ≥ 3.10
  - PyTorch ≥ 2.5.1
  - vLLM (same version as vllm-kunlun)

Supported Models

Generative Models

| Model | Support | Quantization | LoRA | Piecewise Kunlun Graph | Note |
|---|---|---|---|---|---|
| Qwen2/2.5 | ✅ | ✅ | ✅ | | |
| Qwen3 | ✅ | ✅ | ✅ | | |
| Qwen3-MoE/Coder | ✅ | ✅ | ✅ | ✅ | |
| QwQ-32B | ✅ | ✅ | | | |
| Llama2/3/3.1 | ✅ | ✅ | | | |
| GLM-4.5/Air | ✅ | ✅ | ✅ | ✅ | |
| Qwen3-Next | ⚠️ | coming soon | | | |
| GPT-OSS | ⚠️ | coming soon | | | |
| DeepSeek V3/3.2 | ⚠️ | coming soon | | | |

Multimodal Language Models

| Model | Support | Quantization | LoRA | Piecewise Kunlun Graph | Note |
|---|---|---|---|---|---|
| Qianfan-VL | ✅ | ✅ | | | |
| Qwen2.5-VL | ✅ | ✅ | | | |
| InternVL2.5/3/3.5 | ✅ | ✅ | | | |
| InternVL3.5 | ✅ | ✅ | | | |
| InternS1 | ✅ | ✅ | | | |
| Qwen2.5-Omni | ⚠️ | coming soon | | | |
| Qwen3-VL | ⚠️ | coming soon | | | |

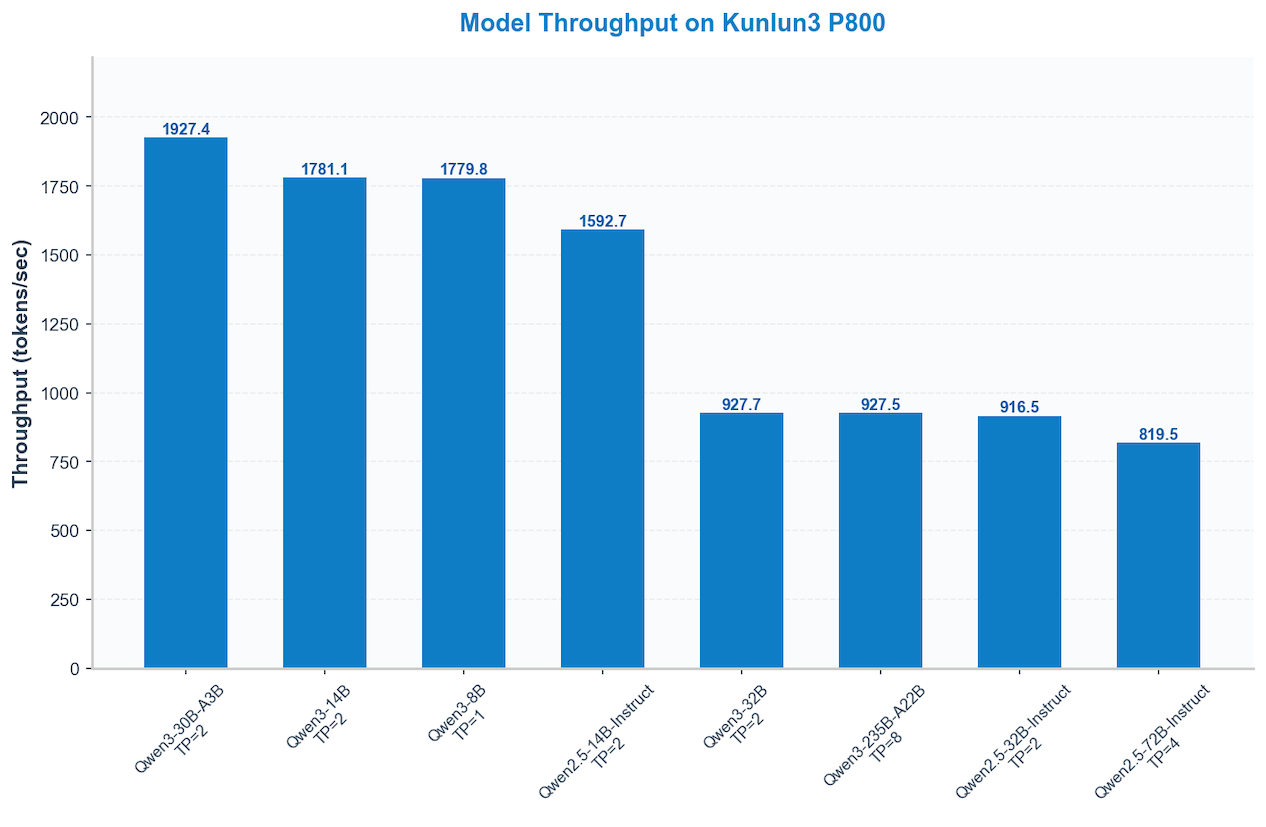

Performance Visualization 🚀

High-performance computing at work: How different models perform on the Kunlun3 P800.

Benchmark settings: 16 concurrent requests, input/output length 2048.

Getting Started

Please use the following recommended versions to get started quickly:

| Version | Release type | Doc |
|---|---|---|
| v0.10.1.1 | Latest stable version | See QuickStart and Installation for more details |

Contributing

See CONTRIBUTING for a step-by-step guide to setting up the development environment, building, and testing.

We welcome and value any contributions and collaborations:

- Open an Issue if you find a bug or have a feature request

License

Apache License 2.0, as found in the LICENSE file.