### What this PR does / why we need it?

Add a guide for running the multi-node nightly test cases locally, to help developers run them in their own environment.

### Does this PR introduce _any_ user-facing change?

NA

### How was this patch tested?

Tested by running the multi-node test locally.

Following this document, the multi-node nightly e2e test starts successfully in a local environment.

- vLLM version: v0.12.0

- vLLM main: ad32e3e19c

---------

Signed-off-by: leo-pony <nengjunma@outlook.com>


Multi Node Test

Multi-Node CI is designed to test distributed scenarios of very large models, e.g. disaggregated_prefill, multi-DP across multiple nodes, and so on.

How it works

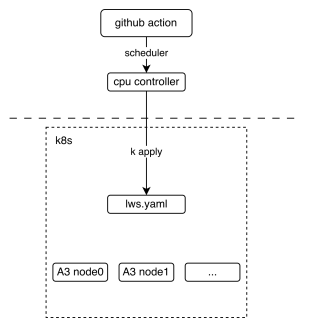

The following picture shows the basic deployment view of the multi-node CI mechanism. It shows how the GitHub Action interacts with lws (a kind of Kubernetes CRD resource).

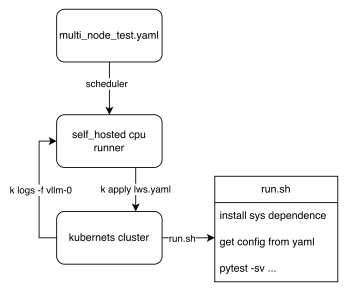

From the workflow perspective, we can see how the final test script is executed. The key pieces are two files, lws.yaml and run.sh: the former defines how our k8s cluster is brought up, and the latter defines the entry script that runs when the pod starts. Each node executes different logic according to the LWS_WORKER_INDEX environment variable, so that multiple nodes can form a distributed cluster to perform tasks.
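The per-node branching described above can be sketched in Python. This is only an illustration of the idea, not the logic of run.sh itself: the helper name `node_role` and the role labels are hypothetical, and the only things taken from this document are the LWS_WORKER_INDEX variable and the convention that index 0 is the leader.

```python
import os


def node_role(env=None):
    """Return (index, role) for this node based on LWS_WORKER_INDEX.

    Hypothetical helper: the role labels are illustrative only.
    """
    env = os.environ if env is None else env
    raw = env.get("LWS_WORKER_INDEX")
    if raw is None:
        raise RuntimeError("LWS_WORKER_INDEX is not set")
    index = int(raw)
    if index < 0:
        raise ValueError(f"LWS_WORKER_INDEX must be >= 0, got {index}")
    # Index 0 is the leader; every other index is a non-leader node.
    role = "leader" if index == 0 else "worker"
    return index, role


if __name__ == "__main__":
    # Demo with explicit mappings instead of the real environment.
    print(node_role({"LWS_WORKER_INDEX": "0"}))
    print(node_role({"LWS_WORKER_INDEX": "1"}))
```

Passing the environment as an argument keeps the sketch easy to try without touching the real shell environment.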

How to contribute

- Upload custom weights

If you need customized weights (for example, you quantized a w8a8 weight for DeepSeek-V3 and want it to run on CI), you are welcome to upload them to ModelScope's vllm-ascend organization. If you do not have permission to upload, please contact @Potabk.

- Add config yaml

As the entrypoint script run.sh shows, a k8s pod startup means traversing all *.yaml files in the directory, then reading and executing each one according to its configuration. So all we need to do is add a YAML file like DeepSeek-V3.yaml.

Suppose you have 2 nodes running a 1P1D setup (1 Prefiller + 1 Decoder). You could add a config file that looks like this:

    test_name: "test DeepSeek-V3 disaggregated_prefill"
    # the model being tested
    model: "vllm-ascend/DeepSeek-V3-W8A8"
    # how large the cluster is
    num_nodes: 2
    npu_per_node: 16
    # All env vars you need should be added here
    env_common:
      VLLM_USE_MODELSCOPE: true
      OMP_PROC_BIND: false
      OMP_NUM_THREADS: 100
      HCCL_BUFFSIZE: 1024
      SERVER_PORT: 8080
    disaggregated_prefill:
      enabled: true
      # node index (a list) which meets all the conditions:
      #  - prefiller
      #  - not headless (has an api server)
      prefiller_host_index: [0]
      # node index (a list) which meets all the conditions:
      #  - decoder
      #  - not headless (has an api server)
      decoder_host_index: [1]
    # Add each node's vllm serve cli command just like you run locally
    deployment:
      - server_cmd: >
          vllm serve ...
      - server_cmd: >
          vllm serve ...
    benchmarks:
      perf:
        # fill with performance test kwargs
      acc:
        # fill with accuracy test kwargs
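Before submitting a config like the one above, a few consistency checks can catch mistakes early. The sketch below works on an already-parsed config (a plain dict). The function `check_config` is hypothetical and not part of the test framework, and the rules it applies (one deployment entry per node, host indices within range, no node listed as both prefiller and decoder) are assumptions read off the example, not documented requirements.

```python
def check_config(cfg):
    """Return a list of human-readable problems found in a config dict."""
    problems = []
    num_nodes = cfg.get("num_nodes", 0)
    deployment = cfg.get("deployment", [])
    # Assumption: the deployment list holds one server_cmd entry per node.
    if len(deployment) != num_nodes:
        problems.append(
            f"deployment has {len(deployment)} entries, expected {num_nodes}"
        )
    pd = cfg.get("disaggregated_prefill", {})
    if pd.get("enabled"):
        prefillers = pd.get("prefiller_host_index", [])
        decoders = pd.get("decoder_host_index", [])
        # Assumption: host indices refer to nodes 0 .. num_nodes-1.
        for idx in prefillers + decoders:
            if not 0 <= idx < num_nodes:
                problems.append(f"host index {idx} is outside [0, {num_nodes - 1}]")
        overlap = set(prefillers) & set(decoders)
        if overlap:
            problems.append(
                f"indices {sorted(overlap)} are both prefiller and decoder"
            )
    return problems


# The 1P1D example from this section, reduced to the checked fields.
example = {
    "num_nodes": 2,
    "deployment": [
        {"server_cmd": "vllm serve ..."},
        {"server_cmd": "vllm serve ..."},
    ],
    "disaggregated_prefill": {
        "enabled": True,
        "prefiller_host_index": [0],
        "decoder_host_index": [1],
    },
}
print(check_config(example))
```

An empty list means none of the assumed rules were violated.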

- Running Locally (Optional)

Step 1. Add cluster_hosts to config yamls

On every cluster host, edit the config file (for example DeepSeek-V3.yaml) and add cluster_hosts after the num_nodes item, for example:

    cluster_hosts: ["xxx.xxx.xxx.188", "xxx.xxx.xxx.212"]

Step 2. Install the development environment

- Install the vllm-ascend development packages on every cluster host

      cd /vllm-workspace/vllm-ascend
      python3 -m pip install -r requirements-dev.txt

- Install AISBench on the first host (the leader node) in cluster_hosts

      export AIS_BENCH_TAG="v3.0-20250930-master"
      export AIS_BENCH_URL="https://gitee.com/aisbench/benchmark.git"
      git clone -b ${AIS_BENCH_TAG} --depth 1 ${AIS_BENCH_URL} /vllm-workspace/vllm-ascend/benchmark
      cd /vllm-workspace/vllm-ascend/benchmark
      pip install -e . -r requirements/api.txt -r requirements/extra.txt

Step 3. Run the test locally

- Export environment variables

On the leader host (the first node, xxx.xxx.xxx.188):

    export LWS_WORKER_INDEX=0
    export WORKSPACE=/vllm-workspace
    export CONFIG_YAML_PATH=DeepSeek-V3.yaml
    export FAIL_TAG=FAIL_TAG

On the slave hosts (the other nodes, such as xxx.xxx.xxx.212):

    export LWS_WORKER_INDEX=1
    export WORKSPACE=/vllm-workspace
    export CONFIG_YAML_PATH=DeepSeek-V3.yaml
    export FAIL_TAG=FAIL_TAG

LWS_WORKER_INDEX is the index of this node in cluster_hosts. The node with index 0 is the leader. Slave node indices fall in the range [1, num_nodes-1].

- Run vllm serve instances

Copy run.sh to the workspace on every cluster host and run it to start vLLM:

    cp /vllm-workspace/vllm-ascend/tests/e2e/nightly/multi_node/scripts/run.sh /vllm-workspace/
    cd /vllm-workspace/
    bash -x run.sh
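With more than two hosts, typing the exports from Step 3 by hand gets error-prone. The sketch below derives them from the cluster_hosts list, using each host's position in the list as its LWS_WORKER_INDEX. The helper `export_lines` is hypothetical; the variable names and default values are the ones shown in Step 3, and the host addresses are the placeholders used in this document.

```python
def export_lines(cluster_hosts, config_yaml="DeepSeek-V3.yaml",
                 workspace="/vllm-workspace"):
    """Map each host to the export commands it should run.

    A host's position in cluster_hosts becomes its LWS_WORKER_INDEX,
    so the first host (index 0) is the leader.
    """
    result = {}
    for index, host in enumerate(cluster_hosts):
        result[host] = [
            f"export LWS_WORKER_INDEX={index}",
            f"export WORKSPACE={workspace}",
            f"export CONFIG_YAML_PATH={config_yaml}",
            "export FAIL_TAG=FAIL_TAG",
        ]
    return result


# Placeholder addresses, copied from the cluster_hosts example.
hosts = ["xxx.xxx.xxx.188", "xxx.xxx.xxx.212"]
for host, lines in export_lines(hosts).items():
    print(f"# run on {host}")
    print("\n".join(lines))
```

The printed lines can be pasted into a shell on the matching host before running run.sh.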

- Add the case to nightly workflow

Currently, the multi-node test workflow is defined in vllm_ascend_test_nightly_a2/a3.yaml:

    multi-node-tests:
      needs: single-node-tests
      if: always() && (github.event_name == 'schedule' || github.event_name == 'workflow_dispatch')
      strategy:
        fail-fast: false
        max-parallel: 1
        matrix:
          test_config:
            - name: multi-node-deepseek-pd
              config_file_path: tests/e2e/nightly/multi_node/config/models/DeepSeek-V3.yaml
              size: 2
            - name: multi-node-qwen3-dp
              config_file_path: tests/e2e/nightly/multi_node/config/models/Qwen3-235B-A3B.yaml
              size: 2
            - name: multi-node-dpsk-4node-pd
              config_file_path: tests/e2e/nightly/multi_node/config/models/DeepSeek-R1-W8A8.yaml
              size: 4
      uses: ./.github/workflows/_e2e_nightly_multi_node.yaml
      with:
        soc_version: a3
        image: m.daocloud.io/quay.io/ascend/cann:8.3.rc2-a3-ubuntu22.04-py3.11
        replicas: 1
        size: ${{ matrix.test_config.size }}
        config_file_path: ${{ matrix.test_config.config_file_path }}

The matrix above defines all the parameters required to add a multi-node use case. When adding a new use case, the parameters to pay attention to are size and the path to the YAML configuration file. The former defines the number of nodes required for your use case, and the latter is the path to the configuration file you completed in the "Add config yaml" step.
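Since size and the config file both describe the number of nodes, it can help to cross-check them when adding a matrix entry. The sketch below assumes that size should equal the num_nodes value of the referenced config; that assumption matches the DeepSeek-V3 example in this document but is not stated as a rule. The helper `size_mismatches` is hypothetical, and num_nodes is given only for the one config whose value appears in this document.

```python
def size_mismatches(matrix, num_nodes_by_config):
    """Return names of matrix entries whose size differs from num_nodes.

    Entries whose config is missing from num_nodes_by_config are skipped.
    """
    bad = []
    for entry in matrix:
        expected = num_nodes_by_config.get(entry["config_file_path"])
        if expected is not None and entry["size"] != expected:
            bad.append(entry["name"])
    return bad


base = "tests/e2e/nightly/multi_node/config/models/"
matrix = [
    {"name": "multi-node-deepseek-pd",
     "config_file_path": base + "DeepSeek-V3.yaml", "size": 2},
    {"name": "multi-node-qwen3-dp",
     "config_file_path": base + "Qwen3-235B-A3B.yaml", "size": 2},
    {"name": "multi-node-dpsk-4node-pd",
     "config_file_path": base + "DeepSeek-R1-W8A8.yaml", "size": 4},
]
# Only DeepSeek-V3.yaml's num_nodes (2) is shown in this document.
known = {base + "DeepSeek-V3.yaml": 2}
print(size_mismatches(matrix, known))
```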