add pkgs

This commit is contained in:

102

LICENSE.CHN.TXT

Normal file

102

LICENSE.CHN.TXT

Normal file

@@ -0,0 +1,102 @@

|

||||

软件许可协议

|

||||

|

||||

重要须知:本《许可协议》(以下称《协议》)是您(使用本软件的用户)与我公司(昆仑

|

||||

芯(北京)科技有限公司)之间有关本软件产品的法律协议。本“软件产品”包括计算机软件

|

||||

,并可能包括相关媒体、印刷材料和“联机”或电子文档(“软件产品”)。本“软件产品”还

|

||||

包括提供给您的原“软件产品”的任何更新和补充资料。任何与本“软件产品”一同提供给您

|

||||

的相关的软件产品是根据本许可协议中的条款而授予您。如您不同意本《协议》中的条款,

|

||||

请不要安装或使用本软件产品及其相关服务。您一旦安装、使用、复制、下载或以其它方式

|

||||

使用的行为将视为对本协议的接受,并同意接受本协议各项条款的约束。未经我方公司授权

|

||||

,任何拷贝、销售、转让、出租、修改本“软件”的行为均被认为是侵权行为。

|

||||

|

||||

本“软件产品”之著作权及其它知识产权等相关权利或利益(包括但不限于现已取得或未来可

|

||||

取得之著作权、专利权、商标权、营业秘密等)皆为我方公司所有。本“软件产品”受中华人

|

||||

民共和国著作权法及所适用的国际著作权条约和其它知识产权法及条约的保护。

|

||||

|

||||

第一条 许可证的授予。

|

||||

本《协议》授予您下列权利:

|

||||

1、应用软件。您可在单一一台计算机上安装、使用、访问、显示、运行或以其它方式

|

||||

互相作用于(“运行”)本“软件产品”的一份副本。运行“软件产品”的计算机

|

||||

的用户可以制作另一份副本,仅供在其在安装到公司其他电脑管理注册后的

|

||||

同一项目之用。

|

||||

2、储存/网络用途。您还可以在您的计算机上运行“软件产品”,您必须为增加的每个

|

||||

项目获得一份许可证。

|

||||

3、保留权利。未明示授予的一切其它权利均为我公司及其供应商所有。

|

||||

4、如果您是从我公司或其授权被许可人之处获得本软件,那么只要您遵守本协议的所

|

||||

有条款,就可以按其文档描述的方式和目的使用软件。如果软件的设计是为与我公司

|

||||

发布的另一应用程序软件产品(“主程序”)一起使用,并且您拥有我公司提供之使用

|

||||

主程序的有效许可,则我公司授予您与主程序一起使用本软件的非排他性许可。用户

|

||||

仅获得本软件产品的非排他性使用权。

|

||||

|

||||

第二条 限制和义务。

|

||||

1、组件的分隔。本“软件产品”是作为单一产品而被授予使用许可的。您不得将其组成

|

||||

部分分开在多台计算机上使用。

|

||||

2、组件的修改。您不得对许可软件进行任何更改、添加,或基于本软件创作衍生作品。

|

||||

3、不得进行逆向工程。不得全部或部分地翻译、分解、反向编译、反汇编、反向工程

|

||||

或其他试图从许可软件导出程序源代码的行为。

|

||||

4、本《协议》不授予您有关任何本软件产品商标或服务商标的任何权利。不得除掉、

|

||||

掩盖或更改许可软件上有关许可软件著作权或商标的标志。

|

||||

5、不得将许可软件向第三方提供、销售、出租、出借、转让或提供分许可、转许可、

|

||||

通过信息网络传播或以其他形式供他人利用。

|

||||

6、不得限制、破坏或绕过许可软件附带的加密附件或我方提供的其他确保许可软件正

|

||||

确使用的限制性措施。

|

||||

7、支持服务。我公司可能为您提供与“软件产品”有关的支持服务(“支持服务”)。支

|

||||

持服务的使用受用户手册、“联机”文档和/或其它提供的材料中所述的各项政策和计划

|

||||

的制约。提供给您作为支持服务的一部分的任何附加软件代码应被视为本“软件产品”

|

||||

的一部分,并须符合本《协议》中的各项条款和条件。

|

||||

8、终止。如您未遵守本《协议》的各项条款和条件,在不损害其它权利的情况下,我

|

||||

公司可终止本《协议》。如此类情况发生,您必须销毁“软件产品”的所有副本及其所

|

||||

有组成部分。

|

||||

|

||||

第三条 知识产权。

|

||||

1、本“软件产品”(包括但不限于本“软件产品”中所含的任何图像、照片、动画、录像

|

||||

、录音、音乐、文字和附加程序)、随附的印刷材料、及本“软件产品”的任何副本的

|

||||

产权和著作权,均由我公司及其供应商拥有。

|

||||

2、禁止被许可方向任何第三方授予本许可产品全部或部分权力、许可、利益或特权。

|

||||

|

||||

第四条 免责声明。

|

||||

1、本“软件产品”以“现状”方式提供,我公司不保证本软件产品能够或不能够完全满足

|

||||

用户需求,在用户手册、帮助文件、使用说明书等软件文档中的介绍性内容仅供用户

|

||||

参考,不得理解为对用户所做的任何承诺。我公司保留对软件版本进行升级,对功能

|

||||

、内容、结构、界面、运行方式等进行修改或自动更新的权利。

|

||||

2、我公司不对软件进行任何明示或默示担保,包括但不限于适用于特定用途、适销性

|

||||

、可销售品质或不侵犯第三方权利的默示担保。前述责任排除和限制在适用法律的最

|

||||

大允许范围内有效,即使补救措施未能有效发挥作用。

|

||||

|

||||

第五条 责任限制。

|

||||

1、除法律规定不得排除或限制的任何赔偿外,我公司、其关联公司及供应商在任何情

|

||||

况下都不对任何损失、损害、索赔或费用,包括任何间接、相应而生、附带的损失或

|

||||

任何失去的利润或储蓄,或因业务中断、人身伤害或不履行照顾责任或第三方索赔而

|

||||

引致的任何损害承担任何责任,即使我公司代表已被告知出现这种损失、损害、索赔

|

||||

或费用的可能性。无论任何情况下,依照本协议或与本协议有关的我公司、其关联公

|

||||

司以及供应商所承担的集合责任或以其他方式规定的责任均限于购买本软件所支付的

|

||||

款项(如果有)。即使在实质性或严重违反本协议或违反本协议的实质性或重要条款

|

||||

的情况下,本限制仍将适用。我公司代表其关联公司和供应商否认、排除和限制义务

|

||||

、担保和责任,但不在其他方面或为其他目的代表其行事。

|

||||

2、在您所在地的相关法律允许的情况下,前述限制和排除方能适用。本责任限制在某

|

||||

些国家可能无效。您可能依据消费者保护法和其他法律享有不得放弃的权利。我公司

|

||||

不在适用法律允许的范围外限制您所享有的担保或赔偿。

|

||||

|

||||

第六条 出口规则。

|

||||

1、您应遵守所有适用的出口法律、限制或规定。如果本软件按照中国、美国及其他所

|

||||

适用的出口法规被视为出口管制品,您须声明并保证您不是贸易禁运国或受限制国的

|

||||

公民,或没有居住在这些国家,并且您接收本软件不受中国、美国及其他所适用的出

|

||||

口法规的禁止。

|

||||

2、我方不对您承担由于您不遵守出口管制法律、制裁、限制措施和禁运以及本协议约

|

||||

定义务的行为而导致的任何责任。我方保留随时就本条约定对您及其使用相关方审计

|

||||

的权利。如您违反本条约定,我方有权随时不经通知终止本协议,并对给我方造成的

|

||||

一切损失承担责任(包括但不限于经济损失、名誉损失),且应采取充分、必要、有

|

||||

效的措施消除给我方造成的不利影响。

|

||||

|

||||

第七条 其它。

|

||||

1、本协议受中华人民共和国法律的管辖。我公司在法律范围内对本协议享有最终解释

|

||||

权。

|

||||

2、如果不遵守本协议中的条款,您使用本软件的权利将会立即终止。如果发现本协议

|

||||

中有任何规定无法执行,则仅该条规定(且以尽可能小的范围进行释义)将被视为无

|

||||

法执行,而本协议其余部分仍将根据其条款保持有效且应予以执行。第三条、第五条

|

||||

和第六条在本协议终止后继续有效。本协议不会损害用户方的法定权利。本协议是我

|

||||

公司与您之间有关本软件的完整协议,它将取代先前任何与本软件相关的陈述、讨论

|

||||

、承诺、通信或宣传。

|

||||

3、如用户对我公司的解释或修改有异议,应当立即停止使用本软件产品。用户继续使

|

||||

用本软件产品的行为将被视为对我方公司的解释或修改的接受。

|

||||

4、本协议用中英文两种版本书写,如有歧义,以中文版为准。

|

||||

196

LICENSE.ENG.TXT

Normal file

196

LICENSE.ENG.TXT

Normal file

@@ -0,0 +1,196 @@

|

||||

IMPORTANTNOTE: This License Agreement (hereinafter referred to as

|

||||

the "Agreement") is the legal agreement between you (end-user of

|

||||

this software) and our company(Kunlunxin (Beijing) Technology Co.,

|

||||

Ltd.) concerning the Software. This "Software Product" includes computer

|

||||

software and may include relatedmedia, printed material, and "online" or

|

||||

electronic documentation(the "Software Product"). This "Software Product"

|

||||

also includes any updates and supplements to your original "Software

|

||||

Product". Any associated softwareproducts provided to you with this

|

||||

Software Product is granted to you inaccordance with the terms of this

|

||||

License Agreement. If you do not agree to the terms of this Agreement,

|

||||

please do not install or use the Software Product and its associated

|

||||

services. Your installation, use, copying, downloading or other use will

|

||||

be deemed an acceptance of this Agreement and you agree to be bound by

|

||||

the terms of this Agreement. Any act of copying, selling, transferring,

|

||||

rentingor modifying the Software without our authorization is considered

|

||||

an infringement.

|

||||

|

||||

The copyright and other intellectual property rights or interests of the

|

||||

"Software Product" (including but not limited to the copyrights, patent

|

||||

rights, trademark rights, trade secrets, etc. that have been or may be

|

||||

obtained in the future) are owned by our company. This Software Product

|

||||

is protected by the Copyright Law of the People's Republic of China

|

||||

and applicable international copyright treaties and other intellectual

|

||||

property laws and treaties.

|

||||

|

||||

Article 1 Grant of License.

|

||||

This Agreement grants you the followingrights:

|

||||

1. Application software. You may install,use, access, display,

|

||||

run or otherwise interact ("run") with a copy of the Software

|

||||

Product on a single computer. The user of the computer running

|

||||

the Software Product may make another copy only for the same

|

||||

project after it has been installed on another company computer.

|

||||

|

||||

2. Storage/network use. You can also run Software Products on

|

||||

your computer, and you must obtain a license for each item added.

|

||||

|

||||

3. Rights reserved. All other rights not expressly granted are

|

||||

the property of us and our suppliers.

|

||||

|

||||

4. If you obtained the Software from our company or its authorized

|

||||

licensee, you may use the Software in the manner and for the

|

||||

purpose described in its documentation, as long as you comply

|

||||

with all the terms of this Agreement. If the Software is designed

|

||||

to be used with another application software product (the "Main

|

||||

Program") released by us and you have a valid license to use

|

||||

the Main Program, we grant you a non-exclusive license to use

|

||||

the Software with the Main Program. Users are only entitled to

|

||||

non-exclusive use of this software product.

|

||||

|

||||

Article 2 Restrictions and Obligations.

|

||||

1. Separation of components. This Software Product is licensed

|

||||

as a single product. You must not use its components separately

|

||||

on more than one computer.

|

||||

|

||||

2. Modification of components. You may not make any changes or

|

||||

additions to the licensed software, or create derivatives based

|

||||

on the software.

|

||||

|

||||

3. Reverse engineering is not allowed. Do not translate,

|

||||

disassemble, decompile,disassemble, reverse engineer, or

|

||||

otherwise attempt to export the program source code from the

|

||||

Licensed Software, in whole or in part.

|

||||

|

||||

4. This Agreement does not grant you any rights with respect

|

||||

to any trademarks or service marks of the Software Product. No

|

||||

marks relating to the copyright or trademark of the licensed

|

||||

software shall be removed, covered up or altered.

|

||||

|

||||

5. The licensed software shall not be provided, sold, leased,

|

||||

lent, transferred or sub-licensed, transmitted through information

|

||||

network or used by others in other forms.

|

||||

|

||||

6. Do not restrict, destroy or bypass the encrypted attachments

|

||||

attached to the Licensed Software or other restrictive measures

|

||||

provided by us to ensure the proper use of the Licensed Software.

|

||||

|

||||

7. Supporting services. We may provide you with support services

|

||||

related to the Software Product ("Support Services"). The use

|

||||

of Support Services is governed by the policies and programs

|

||||

described in the User Manual, the Online documentation, and/or

|

||||

other materials provided. Any additional software code provided

|

||||

to you as part of the Support Services shall be regarded as a

|

||||

part of this Software Product and must comply with the terms

|

||||

and conditions of this Agreement.

|

||||

|

||||

8. Termination. Without prejudice to any other rights, we may

|

||||

terminate this Agreement if you fail to comply with the terms and

|

||||

conditions of this Agreement. If this happens, you must destroy

|

||||

all copies of the Software Product and all of its components.

|

||||

|

||||

Article 3 Intellectual Property Rights.

|

||||

1. The property rights and copyrights of the Software Product

|

||||

(including but not limited to any images, photos, animations,

|

||||

video recordings, audio recordings, music, texts and additional

|

||||

programs included in the Software Product), the accompanying

|

||||

printed materials, and any copies of the Software Product are

|

||||

owned by our company and its suppliers.

|

||||

|

||||

2. Licensee is prohibited from granting all or part of the rights,

|

||||

licenses,benefits or privileges of the Licensed Products to any

|

||||

third party.

|

||||

|

||||

Article 4 Disclaimer.

|

||||

1. The "software product" is provided in the "as-is" mode. Our

|

||||

company does not guarantee that the software product can or cannot

|

||||

fully meet the user's requirements. The introductory contents

|

||||

in the user manual, help documents, operating instructions and

|

||||

other software documents are only for user's reference, and

|

||||

shall not be construed as any commitment made to the user. Our

|

||||

company reserves the right to upgrade the software version,

|

||||

modify or automatically update the function, content, structure,

|

||||

interface and operation mode.

|

||||

|

||||

2. Our company does not make any express or implied warranty on the

|

||||

software,including but not limited to the implied warranty that

|

||||

the software is suitablefor specific purpose, merchantability,

|

||||

marketable quality or does not infringethe rights of third

|

||||

parties. The foregoing exclusions and limitations ofliability

|

||||

shall be effective to the fullest extent permitted by applicable

|

||||

law,even if remedies do not function effectively.

|

||||

|

||||

Article 5 Limitation of Liability.

|

||||

1. Except for any indemnity which shall not be excluded or

|

||||

limited by law, we, its affiliates and suppliers shall under no

|

||||

circumstances be liable for any loss,damage, claim or expense,

|

||||

including any indirect, consequential, incidental loss or any

|

||||

lost profits or savings, or any damages arising frombusiness

|

||||

interruption personal injury or non-performance of the duty of

|

||||

care or 3rd party claims, even though our representative has

|

||||

been informed of the possibility of such loss, damage, claims

|

||||

or expenses. In any event, the aggregate liability or otherwise

|

||||

imposed upon us, its affiliates and supplier spursuant to or in

|

||||

connection with this Agreement shall be limited to the amount(if

|

||||

any) paid for the purchase of the Software. This restriction shall

|

||||

apply even in the event of a material or substantial breach of

|

||||

this Agreement or abreach of a material or significant provision

|

||||

of this Agreement. We disclaim,exclude and limit our obligations,

|

||||

warranties and liabilities on behalf of our affiliatesand

|

||||

suppliers, but do not act otherwise or for any other purpose.

|

||||

|

||||

2. The foregoing limitations and exclusions shall apply to the

|

||||

extent permitted by the relevant laws of your location. This

|

||||

limitation of liability may be void in certain countries. You may

|

||||

have rights that may not be waived under consumer protection laws

|

||||

and other laws. We do not limit your warranties or indemnities

|

||||

to the extent permitted by applicable law.

|

||||

|

||||

Article 6 Export Rules.

|

||||

1. You shall comply with all applicable export laws, restrictions

|

||||

or regulations. If the Software is considered export-controlled

|

||||

in accordance with China, the United States and other applicable

|

||||

export regulations, you must represent and warrant that you

|

||||

are not a citizen of, or resident in, a trade embargoed or

|

||||

restricted country,and that your accept the Software is not

|

||||

prohibited by export laws and regulations of China, the United

|

||||

States and other applicable laws and regulations.

|

||||

|

||||

2. We shall not be liable to you for any liability arising

|

||||

from your non-compliance with export control laws, sanctions,

|

||||

restrictions and embargoes, as well as your obligations under

|

||||

this Agreement. We reserve the right to audit you and the parties

|

||||

involved in your use of this Agreement at any time. In the event

|

||||

that you violate this agreement, we have the right to terminate

|

||||

this Agreement at any time without notice, and you shall bear

|

||||

the responsibility for all losses (including but not limited

|

||||

to economic loss and reputation loss) caused to us, and take

|

||||

sufficient, necessary and effective measures to eliminate the

|

||||

adverse effects caused to us.

|

||||

|

||||

Article 7 Others.

|

||||

1. This Agreement shall be governed by the laws of the People's

|

||||

Republic of China. We have the right of final interpretation of

|

||||

this Agreement within the scope of law.

|

||||

|

||||

2. If you do not comply with the terms of this Agreement,

|

||||

your right to use the Software will terminate immediately. If

|

||||

any provision of this Agreement is found unenforceable, only

|

||||

that provision (and shall be construed to the minimum extent

|

||||

possible) shall be deemed unenforceable, and the remainder of this

|

||||

Agreement shall remain in force and effect in accordance with its

|

||||

terms. Articles 3, 5 and 6 shall survive after the termination

|

||||

of this Agreement. This Agreement shall not prejudice the legal

|

||||

rights of the User. This Agreement is the entire agreement

|

||||

between us and you with respect to the Software and supersedes

|

||||

any prior representations, discussions, promises, communications

|

||||

or publicity relating to the Software.

|

||||

|

||||

3. If the user disagrees with the interpretation or modification

|

||||

of our company, the user shall immediately stop using the

|

||||

software product. User's continued use of the Software Product

|

||||

shall be deemed as acceptance of our Company's interpretation

|

||||

or modification.

|

||||

|

||||

4. This Agreement is written in both Chinese and English. In

|

||||

case of any ambiguity, the Chinese version shall prevail.

|

||||

|

||||

81

README.md

81

README.md

@@ -1,2 +1,81 @@

|

||||

# r200_8f_xtrt_llm

|

||||

=============

|

||||

版本号:v0.5.3

|

||||

发布时间:2024.02.01

|

||||

|

||||

v0.5.3 版产品特性:

|

||||

- 完善 Continuous Batching功能,并在外部客户场景验证了性能与精度正确性

|

||||

- 新增 Paged Attention功能

|

||||

- 新增 pipeline parallel模式,已验证 llama系列模型

|

||||

- 进一步优化llama、baichuan、chatglm模型性能。包括:优化显存分配方案,进一步提高最大batch_size;使用FA减小内存占用等方案。

|

||||

- 极大提高了编译模型的速度

|

||||

- 新增 smooth quant功能,已在 llama系列、qwen系列、bloom 等开源模型上验证了正确性

|

||||

- 验证了 QWen-72b模型的精度正确性,支持float16、int8以及分布式功能

|

||||

|

||||

v0.5.3 bug fix:

|

||||

- llama系列模型,在定长、多batch下的精度问题

|

||||

- 变长的精度问题

|

||||

|

||||

v0.5.3 已知问题:

|

||||

- 不支持 float32模型精度,需要自行转到 float16

|

||||

|

||||

下版本规划:

|

||||

- 初版 cpp runtime

|

||||

- 进一步强化重点客户关注的通用Feature

|

||||

|

||||

发版链接和Docker

|

||||

- [XTRT-LLM产出](https://klx-sdk-release-public.su.bcebos.com/xtrt_llm/release/v0.5.3/output.tar.gz)

|

||||

- Ubuntu Docker: docker pull iregistry.baidu-int.com/isa/xtcl_ubuntu2004:v4.3

|

||||

|

||||

|

||||

=============

|

||||

版本号:v0.5.2.2

|

||||

发布时间:2024.01.26

|

||||

|

||||

v0.5.2.2版产品特性

|

||||

- 统一了XTRT和XPyTorch的底层依赖模块

|

||||

- 修复了若干已知问题

|

||||

|

||||

发版链接和Docker

|

||||

- [XTRT-LLM产出](https://klx-sdk-release-public.su.bcebos.com/xtrt_llm/release/v0.5.2.2/output.tar.gz)

|

||||

- Ubuntu Docker: docker pull iregistry.baidu-int.com/isa/xtcl_ubuntu2004:v4.3

|

||||

|

||||

|

||||

=============

|

||||

版本号:v0.5.2

|

||||

发布时间:2023.12.28

|

||||

|

||||

v0.5.2版产品特性

|

||||

- 验证了Baichuan2-7B, Baichuan2-13B模型的正确性,支持FP16和INT8分布式功能,支持了Baichuan-13B的分布式运行

|

||||

- 验证了Qwen-7B, Qwen-14B模型的正确性,支持FP16和INT8分布式功能

|

||||

- 验证了ChatGLM-6B模型的正确性,支持FP16和INT8功能

|

||||

- 验证了Bloom模型的正确性,支持FP16和INT8分布式功能

|

||||

- 验证了GPT-Neox-20B模型的正确性,支持FP16和INT8分布式功能

|

||||

- 增加运行时Memory Cache和分桶算法,提升首字延迟性能

|

||||

- 框架层面增加服务调度功能,完成Continuous Batching的初版Demo

|

||||

|

||||

下版本规划

|

||||

- 完整支持Continuous Batching,Remove Padding功能

|

||||

- 接入外部客户的大模型验证,交付等实际项目,开发重点客户关注的通用Feature

|

||||

- 模型适配KL3

|

||||

|

||||

|

||||

=============

|

||||

版本号:v0.5.1

|

||||

发布时间:2023.12.7

|

||||

|

||||

使用场景

|

||||

XTRT-LLM在如下场景下为前场同学提供帮助与支持

|

||||

- 如客户当前使用TensorRT-LLM进行GPU模型的推理与部署,XTRT-LLM可快速完成迁移与适配,提供高性能版本的XPU推理能力,降低客户对接成本

|

||||

- 如客户指定开源LLM进行POC和性能PK,对于XTRT-LLM已经验证支持的模型,可直接加载Huggingface上的公版权重,进行高性能版本的模型推理

|

||||

|

||||

v0.5.1版产品特性

|

||||

- 实现并对齐了Nvidia TensorRT-LLM v0.5版本的基础数据结构,完成了核心功能的验证,兼容TensorRT-LLM的Python前端组网

|

||||

- 验证了LLama-7B, LLama-13B, LLama-65B和LLama2-70B全系模型的正确性,支持FP16和INT8分布式功能

|

||||

- 验证了Baichuan-7B, Baichuan-13B模型的正确性,支持FP16和INT8功能

|

||||

- 验证了ChatGLM2-6B, ChatGLM3-6B模型的正确性,支持FP16和INT8功能

|

||||

- 验证了GPT-J模型的正确性,支持FP16和INT8功能

|

||||

|

||||

下版本规划

|

||||

- 整体支持10+个大模型,进一步优化模型性能,下一版仍以月粒度发版

|

||||

- 逐步接入外部客户的大模型验证,交付等实际项目,并开发客户关注的Feature

|

||||

- 模型适配KL3

|

||||

|

||||

96

doc/UserGuide.md

Normal file

96

doc/UserGuide.md

Normal file

@@ -0,0 +1,96 @@

|

||||

# 昆仑芯XTRT-LLM使用指南

|

||||

|

||||

## 产品整体定位

|

||||

|

||||

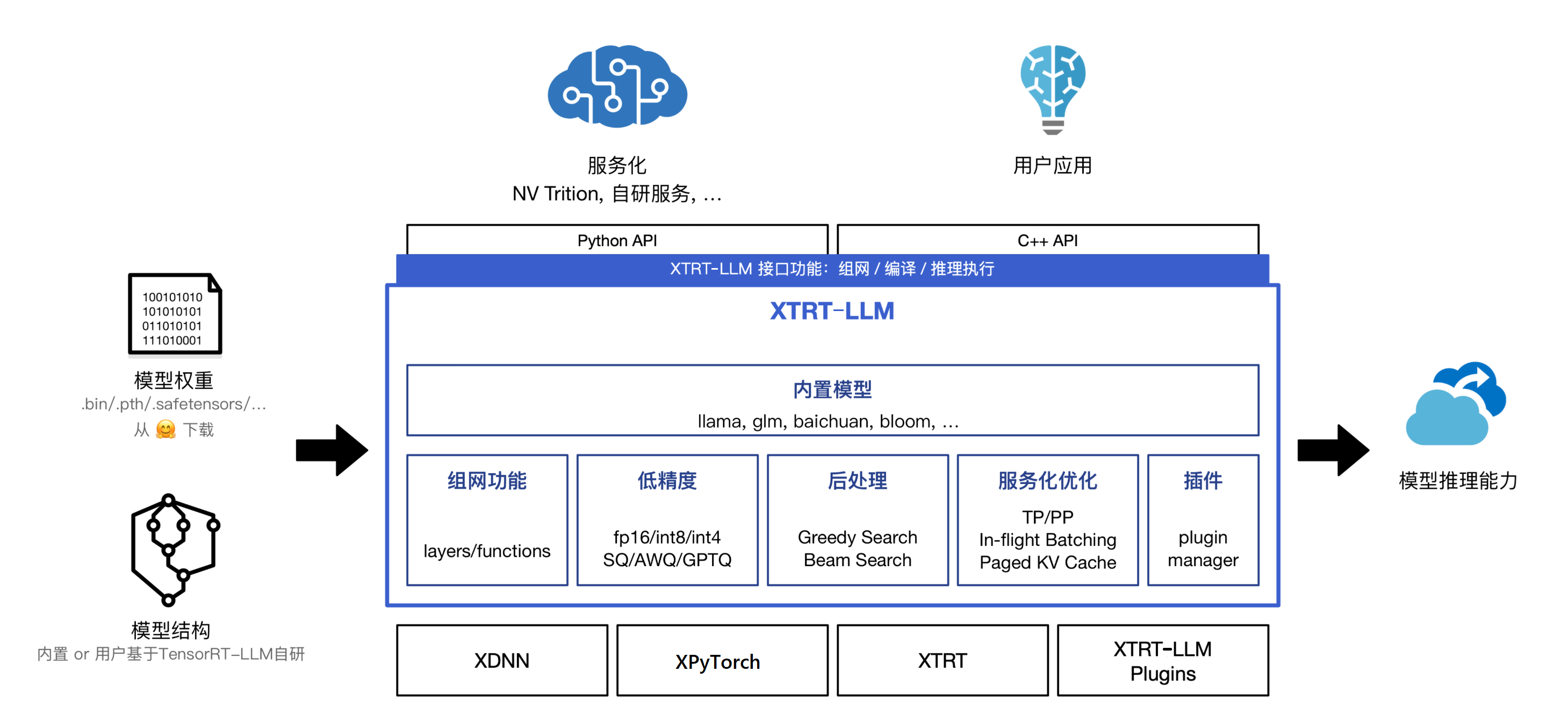

XTRT—LLM产品定位是快速对齐Nvidia的大模型产品,在不改或者少改几行代码的情况下,能够做到完全兼容现有TensorRT-LLM产品;以模块化、Python组网的方式来支持大模型的推理。保证用户在使用上不会感知底层运行时的不同,只需关注模型结构和对应的算法本身,通过简单配置就可以实现单卡和单机多卡分布式两种推理运行方式,也符合算法工程师普遍的使用习惯。下图是`XTRT-LLM整体架构图`:

|

||||

|

||||

|

||||

|

||||

## 使用场景

|

||||

XTRT-LLM可提供以下场景的支持:

|

||||

- 在已经使用TensorRT-LLM进行GPU模型的推理与部署的情况下,XTRT-LLM可支持用户快速完成模型的迁移与适配,提供高性能版本的XPU推理能力,降低对接成本。

|

||||

- 在指定开源LLM进行部署和性能测试情况下,对于XTRT-LLM已经验证支持的模型,可直接加载Huggingface上的公版权重,进行高性能版本的模型推理。

|

||||

|

||||

## 环境搭建与Demo

|

||||

XTRT-LLM的环境搭建需要下载对应的Docker环境以及XTRT-LLM的产出来搭建大模型的运行环境。大模型的运行需要有编译和运行两个阶段。每个模型的具体运行会略有差异,详细的模型运行步骤可以参考对应模型目录下的README.md文件,这里以LLama-7B模型的单卡运行举例

|

||||

|

||||

### 环境搭建

|

||||

- 下载Docker image并启动Docker

|

||||

|

||||

```bash

|

||||

# 下载Docker image

|

||||

docker pull iregistry.baidu-int.com/isa/xtcl_ubuntu2004:v4.3

|

||||

|

||||

# 启动Docker

|

||||

sudo docker run -it

|

||||

--net=host

|

||||

--cap-add=SYS_PTRACE

|

||||

--device=/dev/xpu0:/dev/xpu0 --device=/dev/xpu1:/dev/xpu1 #根据实际的昆仑芯卡设备数选择--device映射的数目**

|

||||

--device=/dev/xpu2:/dev/xpu2 --device=/dev/xpu3:/dev/xpu3

|

||||

--device=/dev/xpu4:/dev/xpu4 --device=/dev/xpu5:/dev/xpu5

|

||||

--device=/dev/xpu6:/dev/xpu6 --device=/dev/xpu7:/dev/xpu7

|

||||

--device=/dev/xpuctrl:/dev/xpuctrl

|

||||

--name xtrt_llm

|

||||

-v /宿主机路径:/容器路径

|

||||

-w /容器工作路径

|

||||

iregistry.baidu-int.com/isa/xtcl_ubuntu2004:v4.3 /bin/bash

|

||||

```

|

||||

|

||||

- 下载XTRT-LLM产出包并解压

|

||||

|

||||

```bash

|

||||

# 去除代理

|

||||

unset http_proxy https_proxy

|

||||

# 下载产出包

|

||||

wget https://klx-sdk-release-public.su.bcebos.com/xtrt_llm/release/v0.5.3/output.tar.gz && tar -zxf output.tar.gz

|

||||

cd output/

|

||||

```

|

||||

|

||||

- 设置代理

|

||||

|

||||

根据实际网络场景,设置`scripts/install_release.sh`脚本中代理配置

|

||||

```bash

|

||||

set_proxy() {

|

||||

export http_proxy=xxx

|

||||

export https_proxy=xxx

|

||||

}

|

||||

```

|

||||

|

||||

- 切换到运行环境

|

||||

|

||||

```bash

|

||||

source /home/pt201/bin/activate

|

||||

bash scripts/install_release.sh

|

||||

source scripts/set_release_env.sh

|

||||

```

|

||||

|

||||

### 运行模型

|

||||

|

||||

- 下载模型权重

|

||||

|

||||

以llama-7b为例

|

||||

```bash

|

||||

cd examples/llama

|

||||

bash ../../scripts/download_model.sh llama-7b

|

||||

# bash ../../scripts/download_model.sh <模型名称>

|

||||

# 当前支持的模型有: llama-7b, llama-13b, llama-65b, llama2-70b, chatglm-6b, chatglm2-6b, chatglm3-6b, baichuan-7b, baichuan-13b, baichuan2-7b, baichuan2-13b, bloom, gpt-neox-20b, qwen-7b, qwen-14b, qwen-72b and gptj-6b

|

||||

```

|

||||

下载的模型权重默认存放在 `examples` 目录下各模型对应的文件夹下的`downloads`,例如`examples/llama/downloads`

|

||||

|

||||

- 编译模型

|

||||

|

||||

模型具体的build命令请参考 `examples` 目录下各模型对应的`README.md`或`README_CN.md`

|

||||

根据需要添加build.py中的配置参数,该过程需要几分钟的时间,每次修改参数都需要重新执行编译过程。

|

||||

```bash

|

||||

python3 build.py --model_dir ./downloads/llama-7b-hf/ \

|

||||

--dtype float16 \

|

||||

--use_gpt_attention_plugin float16 \

|

||||

--output_dir ./downloads/llama-7b-hf/trt_engines/fp16/1-XPU/

|

||||

```

|

||||

|

||||

- 运行模型

|

||||

|

||||

模型具体的run命令请参考 `examples` 目录下各模型对应的`README.md`或`README_CN.md`

|

||||

```bash

|

||||

python3 run.py --engine_dir ./downloads/llama-7b-hf/trt_engines/fp16/1-XPU/ --max_output_len 128 --tokenizer_dir ./downloads/llama-7b-hf/

|

||||

```

|

||||

0

examples/__init__.py

Normal file

0

examples/__init__.py

Normal file

BIN

examples/__pycache__/utils.cpython-38.pyc

Normal file

BIN

examples/__pycache__/utils.cpython-38.pyc

Normal file

Binary file not shown.

146

examples/baichuan/README.md

Normal file

146

examples/baichuan/README.md

Normal file

@@ -0,0 +1,146 @@

|

||||

# Baichuan

|

||||

|

||||

This document shows how to build and run a Baichuan models (including `v1_7b`/`v1_13b`/`v2_7b`/`v2_13b`) in XTRT-LLM on both single XPU and single node multi-XPU.

|

||||

|

||||

## Overview

|

||||

|

||||

The XTRT-LLM Baichuan example code is located in [`examples/baichuan`](./). There are several main files in that folder:

|

||||

|

||||

* [`build.py`](./build.py) to build the XTRT engine(s) needed to run the Baichuan model,

|

||||

* [`run.py`](./run.py) to run the inference on an input text,

|

||||

|

||||

These scripts accept an argument named model_version, whose value should be `v1_7b`/`v1_13b`/`v2_7b`/`v2_13b` and the default value is `v1_13b`.

|

||||

|

||||

## Support Matrix

|

||||

* FP16

|

||||

* INT4 & INT8 Weight-Only

|

||||

|

||||

## Usage

|

||||

|

||||

The XTRT-LLM Baichuan example code locates at [examples/baichuan](./). It takes HF weights as input, and builds the corresponding XTRT engines. The number of XTRT engines depends on the number of XPUs used to run inference.

|

||||

|

||||

### Build XTRT engine(s)

|

||||

|

||||

Need to specify the HF Baichuan checkpoint path. For `v1_13b`, you should use whether [./downloads/baichuan-13b](./downloads/baichuan-13b) or [baichuan-inc/Baichuan-13B-Base](https://huggingface.co/baichuan-inc/Baichuan-13B-Base). For `v2_13b`, you should use whether [baichuan-inc/Baichuan2-13B-Chat](https://huggingface.co/baichuan-inc/Baichuan2-13B-Chat) or [baichuan-inc/Baichuan2-13B-Base](https://huggingface.co/baichuan-inc/Baichuan2-13B-Base). More Baichuan models could be found on [baichuan-inc](https://huggingface.co/baichuan-inc).

|

||||

|

||||

XTRT-LLM Baichuan builds XTRT engine(s) from HF checkpoint. If no checkpoint directory is specified, XTRT-LLM will build engine(s) with dummy weights.

|

||||

|

||||

Normally `build.py` only requires single XPU, but if you've already got all the XPUs needed while inferencing, you could enable parallelly building to make the engine building process faster by adding `--parallel_build` argument. Please note that currently `parallel_build` feature only supports single node.

|

||||

|

||||

Here're some examples that take `v1_13b` as example(`v1_7b`, `v2_7b`, `v2_13b` are supported):

|

||||

|

||||

```bash

|

||||

|

||||

# Build the Baichuan V1 13B model using a single XPU and FP16.

|

||||

python build.py --model_version v1_13b \

|

||||

--model_dir ./downloads/baichuan-13b \

|

||||

--dtype float16 \

|

||||

--use_gemm_plugin float16 \

|

||||

--use_gpt_attention_plugin float16 \

|

||||

--output_dir ./downloads/baichuan-13b/fp16/tp1

|

||||

|

||||

# Build the Baichuan V1 13B model using a single XPU and apply INT8 weight-only quantization.

|

||||

python build.py --model_version v1_13b \

|

||||

--model_dir ./downloads/baichuan-13b \

|

||||

--dtype float16 \

|

||||

--use_gemm_plugin float16 \

|

||||

--use_gpt_attention_plugin float16 \

|

||||

--use_weight_only \

|

||||

--output_dir ./downloads/baichuan-13b/int8/tp1

|

||||

|

||||

# Build the Baichuan V1 13B model using a single GPU and apply INT4 weight-only quantization.

|

||||

python build.py --model_version v1_13b \

|

||||

--model_dir baichuan-inc/Baichuan-13B-Chat \

|

||||

--dtype float16 \

|

||||

--use_gemm_plugin float16 \

|

||||

--use_gpt_attention_plugin float16 \

|

||||

--use_weight_only \

|

||||

--weight_only_precision int4 \

|

||||

--output_dir ./tmp/baichuan_v1_13b/trt_engines/int4_weight_only/1-gpu/

|

||||

|

||||

# Build Baichuan V1 13B using 2-way tensor parallelism and FP16.

|

||||

python build.py --model_version v1_13b \

|

||||

--model_dir ./downloads/baichuan-13b \

|

||||

--dtype float16 \

|

||||

--use_gemm_plugin float16 \

|

||||

--use_gpt_attention_plugin float16 \

|

||||

--output_dir ./downloads/baichuan-13b/fp16/tp2 \

|

||||

--parallel_build \

|

||||

--world_size 2

|

||||

|

||||

# Build Baichuan V1 13B using 2-way tensor parallelism and apply INT8 weight-only quantization.

|

||||

python build.py --model_version v1_13b \

|

||||

--model_dir ./downloads/baichuan-13b \

|

||||

--dtype float16 \

|

||||

--use_gemm_plugin float16 \

|

||||

--use_gpt_attention_plugin float16 \

|

||||

--use_weight_only \

|

||||

--output_dir ./downloads/baichuan-13b/int8/tp2 \

|

||||

--parallel_build \

|

||||

--world_size 2

|

||||

|

||||

|

||||

```

|

||||

### Run

|

||||

|

||||

Before running the examples, make sure set the environment variables:

|

||||

|

||||

```bash

|

||||

export PYTORCH_NO_XPU_MEMORY_CACHING=0 # disable XPytorch cache XPU memory.

|

||||

export XMLIR_D_XPU_L3_SIZE=0 # disable XPytorch use L3.

|

||||

```

|

||||

|

||||

If you are runing with multiple XPUs and no L3 space, you can set `BKCL_CCIX_BUFFER_GM=1` to disable L3.

|

||||

|

||||

To run a XTRT-LLM Baichuan model using the engines generated by `build.py`. Here're some examples:

|

||||

|

||||

```bash

|

||||

# Generate summarization for a given input text

|

||||

python summarize.py --model_version v2_13b \

|

||||

--hf_model_location ./downloads/baichuan2-13b \

|

||||

--engine_dir ./downloads/baichuan2-13b/fp16/tp1/ \

|

||||

--log_level info

|

||||

|

||||

# With fp16 inference

|

||||

python run.py --model_version v1_13b \

|

||||

--max_output_len=50 \

|

||||

--tokenizer_dir ./downloads/baichuan-13b \

|

||||

--log_level=info \

|

||||

--engine_dir=./downloads/baichuan-13b/fp16/tp1

|

||||

|

||||

# With INT8 weight-only quantization inference

|

||||

python run.py --model_version v1_13b \

|

||||

--max_output_len=50 \

|

||||

--tokenizer_dir=./downloads/baichuan-13b \

|

||||

--log_level=info \

|

||||

--engine_dir=./downloads/baichuan-13b/int8/tp1

|

||||

|

||||

# With INT4 weight-only quantization inference

|

||||

python run.py --model_version v1_13b \

|

||||

--max_output_len=50 \

|

||||

--tokenizer_dir=baichuan-inc/Baichuan-13B-Chat \

|

||||

--engine_dir=./tmp/baichuan_v1_13b/trt_engines/int4_weight_only/1-gpu/

|

||||

|

||||

# with fp16 and 2-way tensor parallelism inference

|

||||

mpirun -n 2 --allow-run-as-root \

|

||||

python run.py --model_version v1_13b \

|

||||

--max_output_len=50 \

|

||||

--tokenizer_dir=./downloads/baichuan-13b \

|

||||

--log_level=info \

|

||||

--engine_dir=./downloads/baichuan-13b/fp16/tp2

|

||||

|

||||

# with INT8 weight-only and 2-way tensor parallelism inference

|

||||

mpirun -n 2 --allow-run-as-root \

|

||||

python run.py --model_version v1_13b \

|

||||

--max_output_len=50 \

|

||||

--tokenizer_dir=./downloads/baichuan-13b \

|

||||

--log_level=info \

|

||||

--engine_dir=./downloads/baichuan-13b/int8/tp2

|

||||

|

||||

```

|

||||

|

||||

### Known Issues

|

||||

|

||||

* The implementation of the Baichuan-7B model with INT8 Weight-Only and Tensor

|

||||

Parallelism greater than 2 might have accuracy issues. It is under

|

||||

investigation.

|

||||

127

examples/baichuan/README_CN.md

Normal file

127

examples/baichuan/README_CN.md

Normal file

@@ -0,0 +1,127 @@

|

||||

# Baichuan

|

||||

|

||||

本文档介绍了如何使用昆仑芯XTRT-LLM在单XPU和单节点多XPU上构建和运行百川(Baichuan)模型(包括`v1_7b`/`v1_13b`/`v2_7b`/`v2_13b`)。

|

||||

|

||||

## 概述

|

||||

|

||||

XTRT-LLM Baichuan示例代码位于 [`examples/baichuan`](./). 此文件夹中有以下几个主要文件:

|

||||

|

||||

* [`build.py`](./build.py) 构建运行Baichuan模型所需的XTRT引擎

|

||||

* [`run.py`](./run.py) 基于输入的文字进行推理

|

||||

|

||||

这些脚本接收一个名为model_version的参数,其值应为 `v1_7b`/`v1_13b`/`v2_7b`/`v2_13b` ,其默认值为 `v1_13b`。

|

||||

|

||||

## 支持的矩阵

|

||||

|

||||

* FP16

|

||||

* INT8 Weight-Only

|

||||

|

||||

## 使用说明

|

||||

|

||||

XTRT-LLM Baichuan示例代码位于 [`examples/baichuan`](./)。它使用HF权重作为输入,并且构建对应的XTRT引擎。XTRT引擎的数量取决于为了运行推理而使用的XPU个数。

|

||||

|

||||

### 构建XTRT引擎

|

||||

|

||||

需要明确HF Baichuan checkpoint的路径。对于`v1_13b`,应该使用 [./downloads/baichuan-13b](./downloads/baichuan-13b) 或者 [baichuan-inc/Baichuan-13B-Base](https://huggingface.co/baichuan-inc/Baichuan-13B-Base).对于`v2_13b`,应该使用 [baichuan-inc/Baichuan2-13B-Chat](https://huggingface.co/baichuan-inc/Baichuan2-13B-Chat)或者 [baichuan-inc/Baichuan2-13B-Base](https://huggingface.co/baichuan-inc/Baichuan2-13B-Base)。更多的Baichuan模型可见 [baichuan-inc](https://huggingface.co/baichuan-inc)。

|

||||

|

||||

XTRT-LLM Baichuan从HF checkpoint构建XTRT引擎。如果未指定checkpoint目录,XTRT-LLM将使用伪权重构建引擎。

|

||||

|

||||

通常`build.py`只需要一个XPU,但如果您在推理时已经获得了所需的所有XPU,则可以通过添加`--parallel_build`参数来启用并行构建,从而加快引擎构建过程。请注意,当前并行构建功能仅支持单个节点。

|

||||

|

||||

以下是一些以`v1_13b`为例的示例(亦支持`v1_7b`、`v2_7b`和`v2_13b`):

|

||||

|

||||

```bash

|

||||

# Build the Baichuan V1 13B model using a single XPU and FP16.

|

||||

python build.py --model_version v1_13b \

|

||||

--model_dir ./downloads/baichuan-13b \

|

||||

--dtype float16 \

|

||||

--use_gemm_plugin float16 \

|

||||

--use_gpt_attention_plugin float16 \

|

||||

--output_dir ./downloads/baichuan-13b/fp16/tp1

|

||||

|

||||

# Build the Baichuan V1 13B model using a single XPU and apply INT8 weight-only quantization.

|

||||

python build.py --model_version v1_13b \

|

||||

--model_dir ./downloads/baichuan-13b \

|

||||

--dtype float16 \

|

||||

--use_gemm_plugin float16 \

|

||||

--use_gpt_attention_plugin float16 \

|

||||

--use_weight_only \

|

||||

--output_dir ./downloads/baichuan-13b/int8/tp1

|

||||

|

||||

# Build Baichuan V1 13B using 2-way tensor parallelism and FP16.

|

||||

python build.py --model_version v1_13b \

|

||||

--model_dir ./downloads/baichuan-13b \

|

||||

--dtype float16 \

|

||||

--use_gemm_plugin float16 \

|

||||

--use_gpt_attention_plugin float16 \

|

||||

--output_dir ./downloads/baichuan-13b/fp16/tp2 \

|

||||

--parallel_build \

|

||||

--world_size 2

|

||||

|

||||

# Build Baichuan V1 13B using 2-way tensor parallelism and apply INT8 weight-only quantization.

|

||||

python build.py --model_version v1_13b \

|

||||

--model_dir ./downloads/baichuan-13b \

|

||||

--dtype float16 \

|

||||

--use_gemm_plugin float16 \

|

||||

--use_gpt_attention_plugin float16 \

|

||||

--use_weight_only \

|

||||

--output_dir ./downloads/baichuan-13b/int8/tp2 \

|

||||

--parallel_build \

|

||||

--world_size 2

|

||||

|

||||

```

|

||||

|

||||

|

||||

|

||||

### 运行

|

||||

|

||||

在运行示例之前,请确保设置环境变量:

|

||||

|

||||

```bash

|

||||

export PYTORCH_NO_XPU_MEMORY_CACHING=0 # disable XPytorch cache XPU memory.

|

||||

export XMLIR_D_XPU_L3_SIZE=0 # disable XPytorch use L3.

|

||||

```

|

||||

|

||||

如果使用多个XPU且没有L3空间运行,则可以通过设置`BKCL_CCIX_BUFFER_GM=1`以禁用L3。

|

||||

|

||||

使用`build.py`生成的引擎运行XTRT-LLM Baichuan模型:

|

||||

|

||||

```bash

|

||||

# With fp16 inference

|

||||

python run.py --model_version v1_13b \

|

||||

--max_output_len=50 \

|

||||

--tokenizer_dir ./downloads/baichuan-13b \

|

||||

--log_level=info \

|

||||

--engine_dir=./downloads/baichuan-13b/fp16/tp1

|

||||

|

||||

# With INT8 weight-only quantization inference

|

||||

python run.py --model_version v1_13b \

|

||||

--max_output_len=50 \

|

||||

--tokenizer_dir=./downloads/baichuan-13b \

|

||||

--log_level=info \

|

||||

--engine_dir=./downloads/baichuan-13b/int8/tp1

|

||||

|

||||

# with fp16 and 2-way tensor parallelism inference

|

||||

mpirun -n 2 --allow-run-as-root \

|

||||

python run.py --model_version v1_13b \

|

||||

--max_output_len=50 \

|

||||

--tokenizer_dir=./downloads/baichuan-13b \

|

||||

--log_level=info \

|

||||

--engine_dir=./downloads/baichuan-13b/fp16/tp2

|

||||

|

||||

# with INT8 weight-only and 2-way tensor parallelism inference

|

||||

mpirun -n 2 --allow-run-as-root \

|

||||

python run.py --model_version v1_13b \

|

||||

--max_output_len=50 \

|

||||

--tokenizer_dir=./downloads/baichuan-13b \

|

||||

--log_level=info \

|

||||

--engine_dir=./downloads/baichuan-13b/int8/tp2

|

||||

|

||||

```

|

||||

|

||||

### 已知问题

|

||||

|

||||

- 采用仅使用INT8权重和大于2的Tensor Parallelism的Baichuan-7B模型的实现可能存在精度问题。此问题正在调查中。

|

||||

|

||||

|

||||

|

||||

491

examples/baichuan/build.py

Normal file

491

examples/baichuan/build.py

Normal file

@@ -0,0 +1,491 @@

|

||||

# SPDX-FileCopyrightText: Copyright (c) 2022-2023 NVIDIA CORPORATION & AFFILIATES. All rights reserved.

|

||||

# SPDX-License-Identifier: Apache-2.0

|

||||

#

|

||||

# Licensed under the Apache License, Version 2.0 (the "License");

|

||||

# you may not use this file except in compliance with the License.

|

||||

# You may obtain a copy of the License at

|

||||

#

|

||||

# http://www.apache.org/licenses/LICENSE-2.0

|

||||

#

|

||||

# Unless required by applicable law or agreed to in writing, software

|

||||

# distributed under the License is distributed on an "AS IS" BASIS,

|

||||

# WITHOUT WARRANTIES OR CONDITIONS OF ANY KIND, either express or implied.

|

||||

# See the License for the specific language governing permissions and

|

||||

# limitations under the License.

|

||||

import argparse

|

||||

import os

|

||||

import time

|

||||

|

||||

import onnx

|

||||

import torch.multiprocessing as mp

|

||||

import tvm as trt

|

||||

from onnx import TensorProto, helper

|

||||

from transformers import AutoConfig, AutoModelForCausalLM

|

||||

|

||||

import xtrt_llm

|

||||

from xtrt_llm._utils import str_dtype_to_xtrt

|

||||

from xtrt_llm.builder import Builder

|

||||

from xtrt_llm.layers.attention import PositionEmbeddingType

|

||||

from xtrt_llm.logger import logger

|

||||

from xtrt_llm.mapping import Mapping

|

||||

from xtrt_llm.models import BaichuanForCausalLM, weight_only_quantize

|

||||

from xtrt_llm.network import net_guard

|

||||

from xtrt_llm.plugin.plugin import ContextFMHAType

|

||||

from xtrt_llm.quantization import QuantMode

|

||||

|

||||

from weight import load_from_hf_baichuan # isort:skip

|

||||

|

||||

# 2 routines: get_engine_name, serialize_engine

|

||||

# are direct copy from gpt example, TODO: put in utils?

|

||||

|

||||

|

||||

def trt_dtype_to_onnx(dtype):

|

||||

if dtype == trt.float16:

|

||||

return TensorProto.DataType.FLOAT16

|

||||

elif dtype == trt.float32:

|

||||

return TensorProto.DataType.FLOAT

|

||||

elif dtype == trt.int32:

|

||||

return TensorProto.DataType.INT32

|

||||

else:

|

||||

raise TypeError("%s is not supported" % dtype)

|

||||

|

||||

|

||||

def to_onnx(network, path):

|

||||

inputs = []

|

||||

for i in range(network.num_inputs):

|

||||

network_input = network.get_input(i)

|

||||

inputs.append(

|

||||

helper.make_tensor_value_info(

|

||||

network_input.name, trt_dtype_to_onnx(network_input.dtype),

|

||||

list(network_input.shape)))

|

||||

|

||||

outputs = []

|

||||

for i in range(network.num_outputs):

|

||||

network_output = network.get_output(i)

|

||||

outputs.append(

|

||||

helper.make_tensor_value_info(

|

||||

network_output.name, trt_dtype_to_onnx(network_output.dtype),

|

||||

list(network_output.shape)))

|

||||

|

||||

nodes = []

|

||||

for i in range(network.num_layers):

|

||||

layer = network.get_layer(i)

|

||||

layer_inputs = []

|

||||

for j in range(layer.num_inputs):

|

||||

ipt = layer.get_input(j)

|

||||

if ipt is not None:

|

||||

layer_inputs.append(layer.get_input(j).name)

|

||||

layer_outputs = [

|

||||

layer.get_output(j).name for j in range(layer.num_outputs)

|

||||

]

|

||||

nodes.append(

|

||||

helper.make_node(str(layer.type),

|

||||

name=layer.name,

|

||||

inputs=layer_inputs,

|

||||

outputs=layer_outputs,

|

||||

domain="com.nvidia"))

|

||||

|

||||

onnx_model = helper.make_model(helper.make_graph(nodes,

|

||||

'attention',

|

||||

inputs,

|

||||

outputs,

|

||||

initializer=None),

|

||||

producer_name='NVIDIA')

|

||||

onnx.save(onnx_model, path)

|

||||

|

||||

|

||||

def get_engine_name(model, dtype, tp_size, rank):

|

||||

return '{}_{}_tp{}_rank{}.engine'.format(model, dtype, tp_size, rank)

|

||||

|

||||

|

||||

def serialize_engine(engine, path):

|

||||

logger.info(f'Serializing engine to {path}...')

|

||||

tik = time.time()

|

||||

# import pdb;pdb.set_trace()

|

||||

engine.serialize(path)

|

||||

tok = time.time()

|

||||

t = time.strftime('%H:%M:%S', time.gmtime(tok - tik))

|

||||

logger.info(f'Engine serialized. Total time: {t}')

|

||||

|

||||

|

||||

def parse_arguments():

|

||||

parser = argparse.ArgumentParser()

|

||||

parser.add_argument('--world_size',

|

||||

type=int,

|

||||

default=1,

|

||||

help='world size, only support tensor parallelism now')

|

||||

parser.add_argument('--model_dir',

|

||||

type=str,

|

||||

default='baichuan-inc/Baichuan-13B-Chat')

|

||||

parser.add_argument('--model_version',

|

||||

type=str,

|

||||

default='v1_13b',

|

||||

choices=['v1_7b', 'v1_13b', 'v2_7b', 'v2_13b'])

|

||||

parser.add_argument('--dtype',

|

||||

type=str,

|

||||

default='float16',

|

||||

choices=['float32', 'bfloat16', 'float16'])

|

||||

parser.add_argument(

|

||||

'--opt_memory_use',

|

||||

default=True,

|

||||

action="store_true",

|

||||

help='Whether to use Host memory optimization for building engine')

|

||||

parser.add_argument(

|

||||

'--timing_cache',

|

||||

type=str,

|

||||

default='model.cache',

|

||||

help=

|

||||

'The path of to read timing cache from, will be ignored if the file does not exist'

|

||||

)

|

||||

parser.add_argument('--log_level', type=str, default='info')

|

||||

parser.add_argument('--pp_size', type=int, default=1)

|

||||

parser.add_argument('--vocab_size', type=int, default=64000)

|

||||

parser.add_argument('--n_layer', type=int, default=40)

|

||||

parser.add_argument('--n_positions', type=int, default=4096)

|

||||

parser.add_argument('--n_embd', type=int, default=5120)

|

||||

parser.add_argument('--n_head', type=int, default=40)

|

||||

parser.add_argument('--inter_size', type=int, default=13696)

|

||||

parser.add_argument('--hidden_act', type=str, default='silu')

|

||||

parser.add_argument('--max_batch_size', type=int, default=1)

|

||||

parser.add_argument('--max_input_len', type=int, default=1024)

|

||||

parser.add_argument('--max_output_len', type=int, default=1024)

|

||||

parser.add_argument('--max_beam_width', type=int, default=1)

|

||||

parser.add_argument('--use_gpt_attention_plugin',

|

||||

nargs='?',

|

||||

const='float16',

|

||||

type=str,

|

||||

default=True,

|

||||

choices=['float16', 'bfloat16', 'float32'])

|

||||

parser.add_argument('--use_gemm_plugin',

|

||||

nargs='?',

|

||||

const='float16',

|

||||

type=str,

|

||||

default=False,

|

||||

choices=['float16', 'bfloat16', 'float32'])

|

||||

parser.add_argument('--enable_context_fmha',

|

||||

default=False,

|

||||

action='store_true')

|

||||

parser.add_argument('--enable_context_fmha_fp32_acc',

|

||||

default=False,

|

||||

action='store_true')

|

||||

parser.add_argument('--parallel_build', default=False, action='store_true')

|

||||

parser.add_argument('--visualize', default=False, action='store_true')

|

||||

parser.add_argument('--enable_debug_output',

|

||||

default=False,

|

||||

action='store_true')

|

||||

parser.add_argument('--gpus_per_node', type=int, default=8)

|

||||

parser.add_argument(

|

||||

'--output_dir',

|

||||

type=str,

|

||||

default='baichuan_outputs',

|

||||

help=

|

||||

'The path to save the serialized engine files, timing cache file and model configs'

|

||||

)

|

||||

parser.add_argument('--remove_input_padding',

|

||||

default=False,

|

||||

action='store_true')

|

||||

|

||||

parser.add_argument(

|

||||

'--use_weight_only',

|

||||

default=False,

|

||||

action="store_true",

|

||||

help='Quantize weights for the various GEMMs to INT4/INT8.'

|

||||

'See --weight_only_precision to set the precision')

|

||||

|

||||

parser.add_argument(

|

||||

'--weight_only_precision',

|

||||

const='int8',

|

||||

type=str,

|

||||

nargs='?',

|

||||

default='int8',

|

||||

choices=['int8', 'int4'],

|

||||

help=

|

||||

'Define the precision for the weights when using weight-only quantization.'

|

||||

'You must also use --use_weight_only for that argument to have an impact.'

|

||||

)

|

||||

parser.add_argument(

|

||||

'--use_inflight_batching',

|

||||

action="store_true",

|

||||

default=False,

|

||||

help="Activates inflight batching mode of gptAttentionPlugin.")

|

||||

parser.add_argument(

|

||||

'--paged_kv_cache',

|

||||

action="store_true",

|

||||

default=False,

|

||||

help=

|

||||

'By default we use contiguous KV cache. By setting this flag you enable paged KV cache'

|

||||

)

|

||||

parser.add_argument('--tokens_per_block',

|

||||

type=int,

|

||||

default=64,

|

||||

help='Number of tokens per block in paged KV cache')

|

||||

parser.add_argument(

|

||||

'--max_num_tokens',

|

||||

type=int,

|

||||

default=None,

|

||||

help='Define the max number of tokens supported by the engine')

|

||||

parser.add_argument('--gather_all_token_logits',

|

||||

action='store_true',

|

||||

default=False)

|

||||

|

||||

args = parser.parse_args()

|

||||

|

||||

if args.use_weight_only:

|

||||

args.quant_mode = QuantMode.use_weight_only(

|

||||

args.weight_only_precision == 'int4')

|

||||

else:

|

||||

args.quant_mode = QuantMode(0)

|

||||

|

||||

if args.use_inflight_batching:

|

||||

if not args.use_gpt_attention_plugin:

|

||||

args.use_gpt_attention_plugin = 'float16'

|

||||

logger.info(

|

||||

f"Using GPT attention plugin for inflight batching mode. Setting to default '{args.use_gpt_attention_plugin}'"

|

||||

)

|

||||

if not args.remove_input_padding:

|

||||

args.remove_input_padding = True

|

||||

logger.info(

|

||||

"Using remove input padding for inflight batching mode.")

|

||||

if not args.paged_kv_cache:

|

||||

args.paged_kv_cache = True

|

||||

logger.info("Using paged KV cache for inflight batching mode.")

|

||||

|

||||

if args.max_num_tokens is not None:

|

||||

assert args.enable_context_fmha

|

||||

|

||||

if args.model_dir is not None:

|

||||

hf_config = AutoConfig.from_pretrained(args.model_dir,

|

||||

trust_remote_code=True)

|

||||

# override the inter_size for Baichuan

|

||||

args.inter_size = hf_config.intermediate_size

|

||||

args.n_embd = hf_config.hidden_size

|

||||

args.n_head = hf_config.num_attention_heads

|

||||

args.n_layer = hf_config.num_hidden_layers

|

||||

if args.model_version == 'v1_7b' or args.model_version == 'v2_7b':

|

||||

args.n_positions = hf_config.max_position_embeddings

|

||||

else:

|

||||

args.n_positions = hf_config.model_max_length

|

||||

args.vocab_size = hf_config.vocab_size

|

||||

args.hidden_act = hf_config.hidden_act

|

||||

else:

|

||||

# default values are based on v1_13b, change them based on model_version

|

||||

if args.model_version == 'v1_7b':

|

||||

args.inter_size = 11008

|

||||

args.n_embd = 4096

|

||||

args.n_head = 32

|

||||

args.n_layer = 32

|

||||

args.n_positions = 4096

|

||||

args.vocab_size = 64000

|

||||

args.hidden_act = 'silu'

|

||||

elif args.model_version == 'v2_7b':

|

||||

args.inter_size = 11008

|

||||

args.n_embd = 4096

|

||||

args.n_head = 32

|

||||

args.n_layer = 32

|

||||

args.n_positions = 4096

|

||||

args.vocab_size = 125696

|

||||

args.hidden_act = 'silu'

|

||||

elif args.model_version == 'v2_13b':

|

||||

args.inter_size = 13696

|

||||

args.n_embd = 5120

|

||||

args.n_head = 40

|

||||

args.n_layer = 40

|

||||

args.n_positions = 4096

|

||||

args.vocab_size = 125696

|

||||

args.hidden_act = 'silu'

|

||||

|

||||

if args.dtype == 'bfloat16':

|

||||

assert args.use_gemm_plugin, "Please use gemm plugin when dtype is bfloat16"

|

||||

|

||||

return args

|

||||

|

||||

|

||||

def build_rank_engine(builder: Builder,

|

||||

builder_config: xtrt_llm.builder.BuilderConfig,

|

||||

engine_name, rank, args):

|

||||

'''

|

||||

@brief: Build the engine on the given rank.

|

||||

@param rank: The rank to build the engine.

|

||||

@param args: The cmd line arguments.

|

||||

@return: The built engine.

|

||||

'''

|

||||

kv_dtype = str_dtype_to_xtrt(args.dtype)

|

||||

if args.model_version == 'v1_7b' or args.model_version == 'v2_7b':

|

||||

position_embedding_type = PositionEmbeddingType.rope_gpt_neox

|

||||

else:

|

||||

position_embedding_type = PositionEmbeddingType.alibi

|

||||

|

||||

# Initialize Module

|

||||

xtrt_llm_baichuan = BaichuanForCausalLM(

|

||||

num_layers=args.n_layer,

|

||||

num_heads=args.n_head,

|

||||

hidden_size=args.n_embd,

|

||||

vocab_size=args.vocab_size,

|

||||

hidden_act=args.hidden_act,

|

||||

max_position_embeddings=args.n_positions,

|

||||

position_embedding_type=position_embedding_type,

|

||||

dtype=kv_dtype,

|

||||

mlp_hidden_size=args.inter_size,

|

||||

mapping=Mapping(world_size=args.world_size,

|

||||

rank=rank,

|

||||

tp_size=args.world_size),

|

||||

gather_all_token_logits=args.gather_all_token_logits)

|

||||

if args.use_weight_only and args.weight_only_precision == 'int8' and 0:

|

||||

xtrt_llm_baichuan = weight_only_quantize(xtrt_llm_baichuan,

|

||||

QuantMode.use_weight_only())

|

||||

elif args.use_weight_only and args.weight_only_precision == 'int4' and 0:

|

||||

xtrt_llm_baichuan = weight_only_quantize(

|

||||

xtrt_llm_baichuan, QuantMode.use_weight_only(use_int4_weights=True))

|

||||

if args.model_dir is not None:

|

||||

logger.info(

|

||||

f'Loading HF Baichuan {args.model_version} ... from {args.model_dir}'

|

||||

)

|

||||

tik = time.time()

|

||||

hf_baichuan = AutoModelForCausalLM.from_pretrained(

|

||||

args.model_dir,

|

||||

device_map={

|

||||

"model": "cpu",

|

||||

"lm_head": "cpu"

|

||||

}, # Load to CPU memory

|

||||

torch_dtype="auto",

|

||||

trust_remote_code=True)

|

||||

tok = time.time()

|

||||

t = time.strftime('%H:%M:%S', time.gmtime(tok - tik))

|

||||

logger.info(f'HF Baichuan {args.model_version} loaded. Total time: {t}')

|

||||

load_from_hf_baichuan(xtrt_llm_baichuan,

|

||||

hf_baichuan,

|

||||

args.model_version,

|

||||

rank,

|

||||

args.world_size,

|

||||

dtype=args.dtype)

|

||||

del hf_baichuan

|

||||

|

||||

# Module -> Network

|

||||

network = builder.create_network()

|

||||

network.trt_network.name = engine_name

|

||||

if args.use_gpt_attention_plugin:

|

||||

network.plugin_config.set_gpt_attention_plugin(

|

||||

dtype=args.use_gpt_attention_plugin)

|

||||

if args.use_gemm_plugin:

|

||||

network.plugin_config.set_gemm_plugin(dtype=args.use_gemm_plugin)

|

||||

assert not (args.enable_context_fmha and args.enable_context_fmha_fp32_acc)

|

||||

if args.enable_context_fmha:

|

||||

network.plugin_config.set_context_fmha(ContextFMHAType.enabled)

|

||||

if args.enable_context_fmha_fp32_acc:

|

||||

network.plugin_config.set_context_fmha(

|

||||

ContextFMHAType.enabled_with_fp32_acc)

|

||||

if args.use_weight_only:

|

||||

network.plugin_config.set_weight_only_quant_matmul_plugin(

|

||||

dtype='float16')

|

||||

builder_config.trt_builder_config.use_weight_only = args.weight_only_precision

|

||||

if args.world_size > 1:

|

||||

network.plugin_config.set_nccl_plugin(args.dtype)

|

||||

if args.remove_input_padding:

|

||||

network.plugin_config.enable_remove_input_padding()

|

||||

if args.paged_kv_cache:

|

||||

network.plugin_config.enable_paged_kv_cache(args.tokens_per_block)

|

||||

|

||||

with net_guard(network):

|

||||

# Prepare

|

||||

network.set_named_parameters(xtrt_llm_baichuan.named_parameters())

|

||||

|

||||

# Forward

|

||||

inputs = xtrt_llm_baichuan.prepare_inputs(args.max_batch_size,

|

||||

args.max_input_len,

|

||||

args.max_output_len, True,

|

||||

args.max_beam_width,

|

||||

args.max_num_tokens)

|

||||

xtrt_llm_baichuan(*inputs)

|

||||

if args.enable_debug_output:

|

||||

# mark intermediate nodes' outputs

|

||||

for k, v in xtrt_llm_baichuan.named_network_outputs():

|

||||

v = v.trt_tensor

|

||||

v.name = k

|

||||

network.trt_network.mark_output(v)

|

||||

v.dtype = kv_dtype

|

||||

if args.visualize:

|

||||

model_path = os.path.join(args.output_dir, 'test.onnx')

|

||||

to_onnx(network.trt_network, model_path)

|

||||

|

||||

engine = None

|

||||

|

||||

# Network -> Engine

|

||||

engine = builder.build_engine(network, builder_config, compiler="gr")

|

||||

if rank == 0:

|

||||

config_path = os.path.join(args.output_dir, 'config.json')

|

||||

builder.save_config(builder_config, config_path)

|

||||

if args.opt_memory_use:

|

||||

return engine, network

|

||||

return engine

|

||||

|

||||

|

||||

def build(rank, args):

|

||||

# torch.cuda.set_device(rank % args.gpus_per_node)

|

||||

xtrt_llm.logger.set_level(args.log_level)

|

||||

if not os.path.exists(args.output_dir):

|

||||

os.makedirs(args.output_dir)

|

||||

|

||||

# when doing serializing build, all ranks share one engine

|

||||

builder = Builder()

|

||||

|

||||

cache = None

|

||||

model_name = 'baichuan'

|

||||

for cur_rank in range(args.world_size):

|

||||

# skip other ranks if parallel_build is enabled

|

||||

if args.parallel_build and cur_rank != rank:

|

||||

continue

|

||||

builder_config = builder.create_builder_config(

|

||||

name=model_name,

|

||||

precision=args.dtype,

|

||||

timing_cache=args.timing_cache if cache is None else cache,

|

||||

tensor_parallel=args.world_size, # TP only

|

||||

parallel_build=args.parallel_build,

|

||||

pipeline_parallel=args.pp_size,

|

||||

num_layers=args.n_layer,

|

||||

num_heads=args.n_head,

|

||||

hidden_size=args.n_embd,

|

||||

inter_size=args.inter_size,

|

||||

vocab_size=args.vocab_size,

|

||||

hidden_act=args.hidden_act,

|

||||

max_position_embeddings=args.n_positions,

|

||||

max_batch_size=args.max_batch_size,

|

||||

max_input_len=args.max_input_len,

|

||||

max_output_len=args.max_output_len,

|

||||

max_num_tokens=args.max_num_tokens,

|

||||

int8=args.quant_mode.has_act_and_weight_quant(),

|

||||

quant_mode=args.quant_mode,

|

||||

fusion_pattern_list=["remove_dup_mask"],

|

||||

gather_all_token_logits=args.gather_all_token_logits,

|

||||

)

|

||||

guard = xtrt_llm.fusion_patterns.FuseonPatternGuard()

|

||||

print(guard)

|

||||

engine_name = get_engine_name(model_name, args.dtype, args.world_size,

|

||||

cur_rank)

|

||||

if args.opt_memory_use:

|

||||

engine, network = build_rank_engine(builder, builder_config,

|

||||

engine_name, cur_rank, args)

|

||||

else:

|

||||

engine = build_rank_engine(builder, builder_config, engine_name,

|

||||

cur_rank, args)

|

||||

assert engine is not None, f'Failed to build engine for rank {cur_rank}'

|

||||

|

||||

serialize_engine(engine, os.path.join(args.output_dir, engine_name))

|

||||

|

||||

|

||||

if __name__ == '__main__':

|

||||

args = parse_arguments()

|

||||

logger.set_level(args.log_level)

|

||||

tik = time.time()

|

||||

if args.parallel_build and args.world_size > 1:

|

||||

logger.warning(

|

||||

f'Parallelly build TensorRT engines. Please make sure that all of the {args.world_size} GPUs are totally free.'

|

||||

)

|

||||

mp.spawn(build, nprocs=args.world_size, args=(args, ))

|

||||

else:

|

||||

args.parallel_build = False

|

||||

logger.info('Serially build TensorRT engines.')

|

||||

build(0, args)

|

||||

|

||||

tok = time.time()

|

||||

t = time.strftime('%H:%M:%S', time.gmtime(tok - tik))

|

||||

logger.info(f'Total time of building all {args.world_size} engines: {t}')

|

||||

99

examples/baichuan/build.sh

Executable file

99

examples/baichuan/build.sh

Executable file

@@ -0,0 +1,99 @@

|

||||

build_baichuan() {

|

||||

get_path

|

||||

|

||||

cmd="XTCL_BUILD_DEBUG=1 python3 build.py ${tp_cmd} --model_version $model_name \

|

||||

--model_dir ${model_home}/downloads/baichuan${model_version_num}-${model_size} \

|

||||

--dtype float16 ${int8_cmd} \

|

||||

--use_gemm_plugin float16 \

|

||||

--use_gpt_attention_plugin float16 \

|

||||

--output_dir ${model_home}/engine/baichuan${model_version_num}-${model_size}/${precision}/${tp}"

|

||||

echo "******************** cmd *********************"

|

||||

echo $cmd

|

||||

eval ${cmd} |& tee ${log_file}

|

||||

}

|

||||

|

||||

get_path(){

|

||||

model_home=/home/workspace

|

||||

model_version=$(echo $model_name | cut -d "_" -f 1)

|

||||

model_size=$(echo $model_name | cut -d "_" -f 2)

|

||||

precision=$(echo $model_name | cut -d "_" -f 3)

|

||||

tp=$(echo $model_name | cut -d "_" -f 4)

|

||||

model_name=${model_version}_${model_size}

|

||||

model_version_num=$(echo $model_version | grep -o '[0-9]\+')

|

||||

|

||||

if [[ "$model_version_num" == "1" ]]; then

|

||||

model_version_num=""

|

||||

fi

|

||||

|

||||

mpi_num=$(echo $tp | cut -d "p" -f 2)

|

||||

if (( $mpi_num > 1 )); then

|

||||

mpi_cmd="mpirun --allow-run-as-root -n $mpi_num"

|

||||

tp_cmd="--parallel_build --world_size $mpi_num"

|

||||

else

|

||||

mpi_cmd=""

|

||||

tp_cmd=""

|

||||

fi

|

||||

|

||||

if [[ "$precision" == "int8" ]]; then

|

||||

int8_cmd="--use_weight_only"

|

||||

else

|

||||

int8_cmd=""

|

||||

fi

|

||||

|

||||

echo "------------------------------------------------------"

|

||||

log_file=./logs/relay_${model_name}_"$(date '+%Y-%m-%d-%H:%M:%S')".log

|

||||

echo "log file -> ${log_file} "

|

||||

|

||||

echo -e "\033[1;31m" # 设置红色字体

|

||||

echo "Model version Model size Precision TP"

|

||||

echo -e "\033[0m" # 重置字体颜色

|

||||

echo "------------------------------------------------------"

|

||||

echo -e "\033[0;32m"

|

||||

echo "$model_version" " " "$model_size" " " "$precision" " " "$tp"

|

||||

echo ""

|

||||

}

|

||||

|

||||

|

||||

if [ "$#" -ne 1 ]; then

|

||||

echo "Usage: $0 -m=<model_name>"

|

||||

exit 1

|

||||

fi

|

||||

|

||||

model_name="$1"

|

||||

|

||||

case $model_name in

|

||||

"v1_13b_fp16_tp1")

|

||||

build_baichuan

|

||||

;;

|

||||

"v1_13b_int8_tp1")

|

||||

build_baichuan

|

||||

;;

|

||||

"v1_13b_fp16_tp2")

|

||||

build_baichuan

|

||||

;;

|

||||

"v1_13b_int8_tp2")

|

||||

build_baichuan

|

||||

;;

|

||||

"v1_7b_fp16_tp1")

|

||||

build_baichuan

|