Initialize project; model provided by the ModelHub XC community

Model: unsloth/Qwen2.5-Math-7B-Instruct Source: Original Platform

35

.gitattributes

vendored

Normal file

@@ -0,0 +1,35 @@

*.7z filter=lfs diff=lfs merge=lfs -text
*.arrow filter=lfs diff=lfs merge=lfs -text
*.bin filter=lfs diff=lfs merge=lfs -text
*.bz2 filter=lfs diff=lfs merge=lfs -text
*.ckpt filter=lfs diff=lfs merge=lfs -text
*.ftz filter=lfs diff=lfs merge=lfs -text
*.gz filter=lfs diff=lfs merge=lfs -text
*.h5 filter=lfs diff=lfs merge=lfs -text
*.joblib filter=lfs diff=lfs merge=lfs -text
*.lfs.* filter=lfs diff=lfs merge=lfs -text
*.mlmodel filter=lfs diff=lfs merge=lfs -text
*.model filter=lfs diff=lfs merge=lfs -text
*.msgpack filter=lfs diff=lfs merge=lfs -text
*.npy filter=lfs diff=lfs merge=lfs -text
*.npz filter=lfs diff=lfs merge=lfs -text
*.onnx filter=lfs diff=lfs merge=lfs -text
*.ot filter=lfs diff=lfs merge=lfs -text
*.parquet filter=lfs diff=lfs merge=lfs -text
*.pb filter=lfs diff=lfs merge=lfs -text
*.pickle filter=lfs diff=lfs merge=lfs -text
*.pkl filter=lfs diff=lfs merge=lfs -text
*.pt filter=lfs diff=lfs merge=lfs -text
*.pth filter=lfs diff=lfs merge=lfs -text
*.rar filter=lfs diff=lfs merge=lfs -text
*.safetensors filter=lfs diff=lfs merge=lfs -text
saved_model/**/* filter=lfs diff=lfs merge=lfs -text
*.tar.* filter=lfs diff=lfs merge=lfs -text
*.tar filter=lfs diff=lfs merge=lfs -text
*.tflite filter=lfs diff=lfs merge=lfs -text
*.tgz filter=lfs diff=lfs merge=lfs -text
*.wasm filter=lfs diff=lfs merge=lfs -text
*.xz filter=lfs diff=lfs merge=lfs -text
*.zip filter=lfs diff=lfs merge=lfs -text
*.zst filter=lfs diff=lfs merge=lfs -text
*tfevents* filter=lfs diff=lfs merge=lfs -text
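
The patterns listed above decide which files are stored through Git LFS. As a rough illustration, the sketch below checks a file name against a handful of them. It uses Python's `fnmatch` as an approximation of gitattributes matching (the two are not identical, for example for `**` patterns), and `probably_lfs_tracked` is a hypothetical helper name, not part of Git or Git LFS.

```python
from fnmatch import fnmatch

# A few of the patterns from the .gitattributes listing above.
LFS_PATTERNS = ["*.safetensors", "*.bin", "*.model", "*.tar.*", "*tfevents*"]


def probably_lfs_tracked(filename: str) -> bool:
    """Approximate check: does the file's basename match any LFS pattern?"""
    basename = filename.rsplit("/", 1)[-1]
    return any(fnmatch(basename, pattern) for pattern in LFS_PATTERNS)


for name in ("model-00001-of-00004.safetensors",
             "model.safetensors.index.json",
             "config.json"):
    print(name, probably_lfs_tracked(name))
```

Under this approximation the weight shards match `*.safetensors`, while `model.safetensors.index.json` does not, because the pattern has to match the whole name.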

144

README.md

Normal file

@@ -0,0 +1,144 @@

---
base_model: Qwen/Qwen2.5-Math-7B-Instruct
language:
- en
library_name: transformers
license: apache-2.0
tags:
- unsloth
- transformers
---

# Finetune Llama 3.1, Gemma 2, Mistral 2-5x faster with 70% less memory via Unsloth!

We have a Qwen 2.5 (all model sizes) [free Google Colab Tesla T4 notebook](https://colab.research.google.com/drive/1Kose-ucXO1IBaZq5BvbwWieuubP7hxvQ?usp=sharing).
Also a [Qwen 2.5 conversational style notebook](https://colab.research.google.com/drive/1qN1CEalC70EO1wGKhNxs1go1W9So61R5?usp=sharing).

[<img src="https://raw.githubusercontent.com/unslothai/unsloth/main/images/Discord%20button.png" width="200"/>](https://discord.gg/unsloth)
[<img src="https://raw.githubusercontent.com/unslothai/unsloth/main/images/unsloth%20made%20with%20love.png" width="200"/>](https://github.com/unslothai/unsloth)

## ✨ Finetune for Free

All notebooks are **beginner friendly**! Add your dataset, click "Run All", and you'll get a 2x faster finetuned model which can be exported to GGUF, vLLM or uploaded to Hugging Face.

| Unsloth supports | Free Notebooks | Performance | Memory use |
|-----------------|--------------------------------------------------------------------------------------------------------------------------|-------------|----------|
| **Llama-3.1 8b** | [▶️ Start on Colab](https://colab.research.google.com/drive/1Ys44kVvmeZtnICzWz0xgpRnrIOjZAuxp?usp=sharing) | 2.4x faster | 58% less |
| **Phi-3.5 (mini)** | [▶️ Start on Colab](https://colab.research.google.com/drive/1lN6hPQveB_mHSnTOYifygFcrO8C1bxq4?usp=sharing) | 2x faster | 50% less |
| **Gemma-2 9b** | [▶️ Start on Colab](https://colab.research.google.com/drive/1vIrqH5uYDQwsJ4-OO3DErvuv4pBgVwk4?usp=sharing) | 2.4x faster | 58% less |
| **Mistral 7b** | [▶️ Start on Colab](https://colab.research.google.com/drive/1Dyauq4kTZoLewQ1cApceUQVNcnnNTzg_?usp=sharing) | 2.2x faster | 62% less |
| **TinyLlama** | [▶️ Start on Colab](https://colab.research.google.com/drive/1AZghoNBQaMDgWJpi4RbffGM1h6raLUj9?usp=sharing) | 3.9x faster | 74% less |
| **DPO - Zephyr** | [▶️ Start on Colab](https://colab.research.google.com/drive/15vttTpzzVXv_tJwEk-hIcQ0S9FcEWvwP?usp=sharing) | 1.9x faster | 19% less |

- This [conversational notebook](https://colab.research.google.com/drive/1Aau3lgPzeZKQ-98h69CCu1UJcvIBLmy2?usp=sharing) is useful for ShareGPT ChatML / Vicuna templates.
- This [text completion notebook](https://colab.research.google.com/drive/1ef-tab5bhkvWmBOObepl1WgJvfvSzn5Q?usp=sharing) is for raw text. This [DPO notebook](https://colab.research.google.com/drive/15vttTpzzVXv_tJwEk-hIcQ0S9FcEWvwP?usp=sharing) replicates Zephyr.
- \* Kaggle has 2x T4s, but we use 1. Due to overhead, 1x T4 is 5x faster.

# Qwen2.5-Math-7B-Instruct

> [!Warning]
> <div align="center">
> <b>
> 🚨 Qwen2.5-Math mainly supports solving English and Chinese math problems through CoT and TIR. We do not recommend using this series of models for other tasks.
> </b>
> </div>

## Introduction

|

||||

|

||||

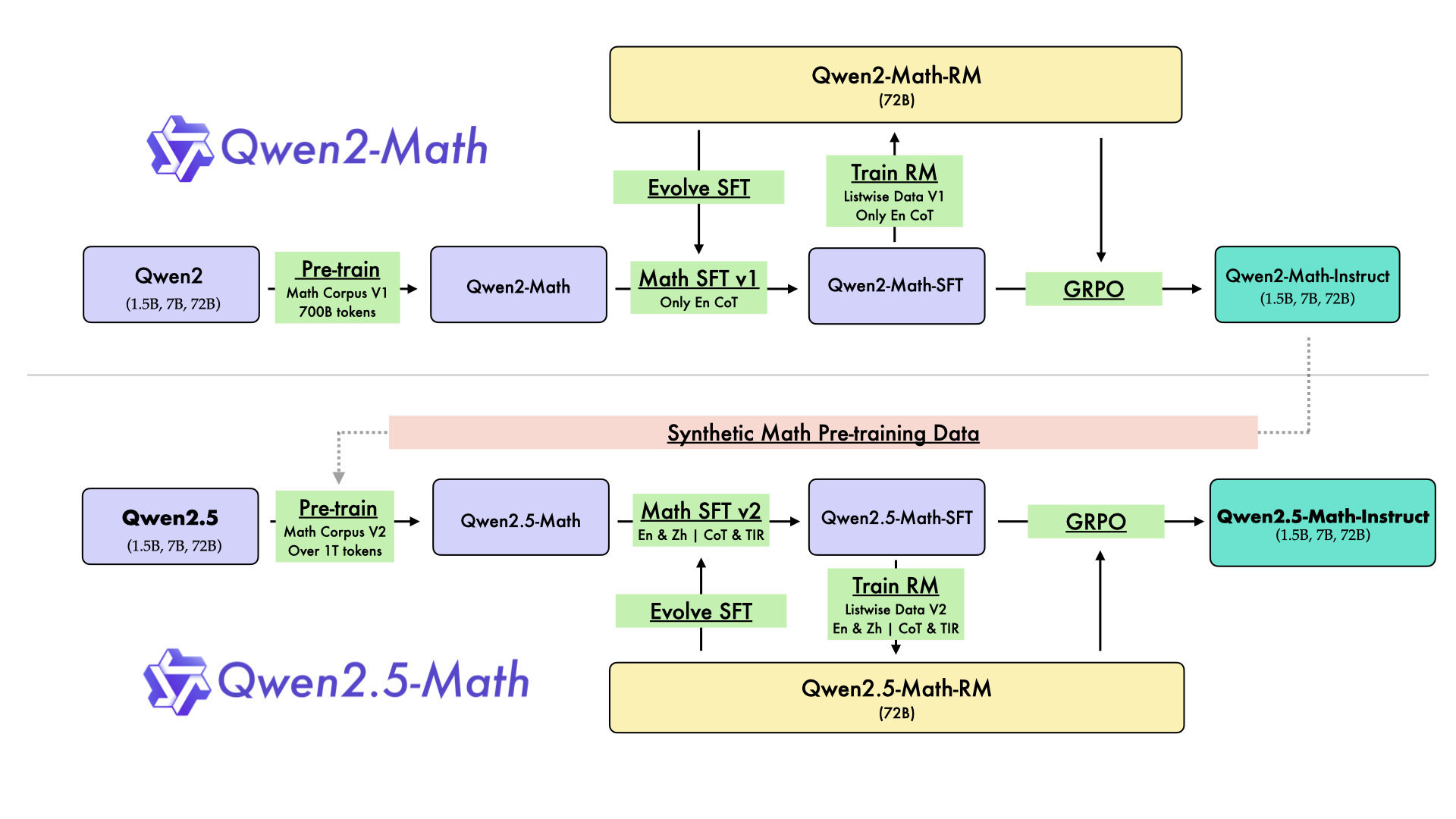

In August 2024, we released the first series of mathematical LLMs - [Qwen2-Math](https://qwenlm.github.io/blog/qwen2-math/) - of our Qwen family. A month later, we have upgraded it and open-sourced **Qwen2.5-Math** series, including base models **Qwen2.5-Math-1.5B/7B/72B**, instruction-tuned models **Qwen2.5-Math-1.5B/7B/72B-Instruct**, and mathematical reward model **Qwen2.5-Math-RM-72B**.

|

||||

|

||||

Unlike Qwen2-Math series which only supports using Chain-of-Thught (CoT) to solve English math problems, Qwen2.5-Math series is expanded to support using both CoT and Tool-integrated Reasoning (TIR) to solve math problems in both Chinese and English. The Qwen2.5-Math series models have achieved significant performance improvements compared to the Qwen2-Math series models on the Chinese and English mathematics benchmarks with CoT.

|

||||

|

||||

|

||||

|

||||

While CoT plays a vital role in enhancing the reasoning capabilities of LLMs, it faces challenges in achieving computational accuracy and handling complex mathematical or algorithmic reasoning tasks, such as finding the roots of a quadratic equation or computing the eigenvalues of a matrix. TIR can further improve the model's proficiency in precise computation, symbolic manipulation, and algorithmic manipulation. Qwen2.5-Math-1.5B/7B/72B-Instruct achieve 79.7, 85.3, and 87.8 respectively on the MATH benchmark using TIR.

|

||||

|

||||
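
To make the TIR idea concrete, the sketch below shows the kind of short program such reasoning might hand an exact computation to, using the quadratic-roots case mentioned above. It is only an illustration: `solve_quadratic` is a hypothetical helper name and is not taken from Qwen2.5-Math or its tooling.

```python
import cmath


def solve_quadratic(a: float, b: float, c: float) -> tuple[complex, complex]:
    """Return both roots of a*x**2 + b*x + c = 0 via the quadratic formula."""
    if a == 0:
        raise ValueError("not a quadratic: a must be non-zero")
    # cmath.sqrt also handles a negative discriminant (complex roots).
    root_of_discriminant = cmath.sqrt(b * b - 4 * a * c)
    return ((-b + root_of_discriminant) / (2 * a),
            (-b - root_of_discriminant) / (2 * a))


print(solve_quadratic(1, -3, 2))
```

Delegating the arithmetic to a program like this is what lets the written reasoning focus on setting the problem up.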

## Model Details

For more details, please refer to our [blog post](https://qwenlm.github.io/blog/qwen2.5-math/) and [GitHub repo](https://github.com/QwenLM/Qwen2.5-Math).

## Requirements

* `transformers>=4.37.0` for Qwen2.5-Math models. The latest version is recommended.

> [!Warning]
> <div align="center">
> <b>
> 🚨 This is required because <code>transformers</code> has included the Qwen2 code since <code>4.37.0</code>.
> </b>
> </div>

For GPU memory requirements and the corresponding throughput, see the comparable Qwen2 results [here](https://qwen.readthedocs.io/en/latest/benchmark/speed_benchmark.html).
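
The version requirement above can be checked before loading the model. The following is a minimal sketch that compares only the numeric release part of a version string. `release_tuple` and `meets_minimum` are hypothetical helper names, and pre-release suffixes such as `rc1` or `.dev0` are ignored, which is a simplification.

```python
import re


def release_tuple(version: str) -> tuple[int, ...]:
    """Turn '4.44.2' or '4.45.0.dev0' into a tuple of leading integers."""
    numbers = []
    for piece in version.split("."):
        match = re.match(r"\d+", piece)
        if match is None:
            break  # stop at the first non-numeric component, e.g. 'dev0'
        numbers.append(int(match.group()))
    return tuple(numbers)


def meets_minimum(installed: str, minimum: str = "4.37.0") -> bool:
    """True if the installed release is at least the minimum release."""
    return release_tuple(installed) >= release_tuple(minimum)


print(meets_minimum("4.44.2"))
print(meets_minimum("4.36.2"))
```

In practice the installed version would come from `transformers.__version__`.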

## Quick Start

> [!Important]
>
> **Qwen2.5-Math-7B-Instruct** is an instruction model for chatting;
>
> **Qwen2.5-Math-7B** is a base model typically used for completion and few-shot inference, serving as a better starting point for fine-tuning.
>

### 🤗 Hugging Face Transformers

Qwen2.5-Math can be deployed and used for inference in the same way as [Qwen2.5](https://github.com/QwenLM/Qwen2.5). The following snippet shows how to use the chat model with `transformers`:

```python
from transformers import AutoModelForCausalLM, AutoTokenizer

model_name = "Qwen/Qwen2.5-Math-7B-Instruct"
device = "cuda" # the device to load the model onto

model = AutoModelForCausalLM.from_pretrained(
    model_name,
    torch_dtype="auto",
    device_map="auto"
)
tokenizer = AutoTokenizer.from_pretrained(model_name)

prompt = "Find the value of $x$ that satisfies the equation $4x+5 = 6x+7$."

# CoT
messages = [
    {"role": "system", "content": "Please reason step by step, and put your final answer within \\boxed{}."},
    {"role": "user", "content": prompt}
]

# TIR (this assignment replaces the CoT messages above; keep only the variant you want)
messages = [
    {"role": "system", "content": "Please integrate natural language reasoning with programs to solve the problem above, and put your final answer within \\boxed{}."},
    {"role": "user", "content": prompt}
]

# Render the chat messages into a single prompt string
text = tokenizer.apply_chat_template(
    messages,
    tokenize=False,
    add_generation_prompt=True
)
model_inputs = tokenizer([text], return_tensors="pt").to(device)

generated_ids = model.generate(
    **model_inputs,
    max_new_tokens=512
)
# Drop the prompt tokens so that only the newly generated tokens are decoded
generated_ids = [
    output_ids[len(input_ids):] for input_ids, output_ids in zip(model_inputs.input_ids, generated_ids)
]

response = tokenizer.batch_decode(generated_ids, skip_special_tokens=True)[0]
```
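
Both system prompts ask for the final answer inside `\boxed{}`, so a caller will often want to pull that answer out of `response`. The sketch below is one way to do it under the assumption that the last `\boxed{...}` in the text holds the answer. `extract_boxed` is a hypothetical helper, not part of `transformers` or the Qwen2.5-Math code.

```python
from typing import Optional


def extract_boxed(text: str) -> Optional[str]:
    """Return the contents of the last \\boxed{...} in text, or None."""
    marker = r"\boxed{"
    start = text.rfind(marker)
    if start == -1:
        return None
    begin = start + len(marker)
    depth = 1  # brace nesting depth, so \boxed{\frac{1}{2}} works
    for index in range(begin, len(text)):
        if text[index] == "{":
            depth += 1
        elif text[index] == "}":
            depth -= 1
            if depth == 0:
                return text[begin:index]
    return None  # unbalanced braces


print(extract_boxed(r"Therefore $x = \boxed{-1}$."))
```

Counting brace depth rather than using a simple regular expression keeps nested LaTeX such as fractions intact.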

## Citation

If you find our work helpful, feel free to give us a citation.

```
@article{yang2024qwen25mathtechnicalreportmathematical,
  title={Qwen2.5-Math Technical Report: Toward Mathematical Expert Model via Self-Improvement},
  author={An Yang and Beichen Zhang and Binyuan Hui and Bofei Gao and Bowen Yu and Chengpeng Li and Dayiheng Liu and Jianhong Tu and Jingren Zhou and Junyang Lin and Keming Lu and Mingfeng Xue and Runji Lin and Tianyu Liu and Xingzhang Ren and Zhenru Zhang},
  journal={arXiv preprint arXiv:2409.12122},
  year={2024}
}
```

25

added_tokens.json

Normal file

@@ -0,0 +1,25 @@

{
  "</tool_call>": 151658,
  "<tool_call>": 151657,
  "<|PAD_TOKEN|>": 151665,
  "<|box_end|>": 151649,
  "<|box_start|>": 151648,
  "<|endoftext|>": 151643,
  "<|file_sep|>": 151664,
  "<|fim_middle|>": 151660,
  "<|fim_pad|>": 151662,
  "<|fim_prefix|>": 151659,
  "<|fim_suffix|>": 151661,
  "<|im_end|>": 151645,
  "<|im_start|>": 151644,
  "<|image_pad|>": 151655,
  "<|object_ref_end|>": 151647,
  "<|object_ref_start|>": 151646,
  "<|quad_end|>": 151651,
  "<|quad_start|>": 151650,
  "<|repo_name|>": 151663,
  "<|video_pad|>": 151656,
  "<|vision_end|>": 151653,
  "<|vision_pad|>": 151654,
  "<|vision_start|>": 151652
}
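
The token IDs above are the ones the configuration files in this commit refer to by number. As a small consistency sketch, the snippet below inverts a subset of the mapping and looks up the `bos_token_id`, `eos_token_id` and `pad_token_id` values from `config.json`. The dictionary is copied by hand from the listing, and `SPECIAL_IDS` and `ID_TO_TOKEN` are illustrative names.

```python
# Subset of added_tokens.json from the listing above.
ADDED_TOKENS = {
    "<|endoftext|>": 151643,
    "<|im_start|>": 151644,
    "<|im_end|>": 151645,
    "<|PAD_TOKEN|>": 151665,
}

# Values of bos_token_id, eos_token_id and pad_token_id in config.json.
SPECIAL_IDS = {"bos": 151643, "eos": 151645, "pad": 151665}

# Invert the mapping so IDs can be resolved back to token strings.
ID_TO_TOKEN = {token_id: token for token, token_id in ADDED_TOKENS.items()}

for role, token_id in SPECIAL_IDS.items():
    print(role, token_id, ID_TO_TOKEN[token_id])
```

Read this way, the padding token is the separate `<|PAD_TOKEN|>` entry rather than a reuse of the end-of-sequence token.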

30

config.json

Normal file

@@ -0,0 +1,30 @@

{
  "_name_or_path": "Qwen/Qwen2.5-Math-7B-Instruct",
  "architectures": [
    "Qwen2ForCausalLM"
  ],
  "attention_dropout": 0.0,
  "bos_token_id": 151643,
  "eos_token_id": 151645,
  "hidden_act": "silu",
  "hidden_size": 3584,
  "initializer_range": 0.02,
  "intermediate_size": 18944,
  "max_position_embeddings": 4096,
  "max_window_layers": 28,
  "model_type": "qwen2",
  "num_attention_heads": 28,
  "num_hidden_layers": 28,
  "num_key_value_heads": 4,
  "pad_token_id": 151665,
  "rms_norm_eps": 1e-06,
  "rope_theta": 10000.0,
  "sliding_window": null,
  "tie_word_embeddings": false,
  "torch_dtype": "bfloat16",
  "transformers_version": "4.44.2",
  "unsloth_fixed": true,
  "use_cache": true,
  "use_sliding_window": false,
  "vocab_size": 152064
}
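
The sizes in this configuration are enough to estimate the parameter count. The sketch below assumes the tensor layout suggested by the names in `model.safetensors.index.json` (biases on the q/k/v projections only, three bias-free MLP projections, two norm weight vectors per layer) plus one final norm vector, which is an assumption since that tensor is not visible in this excerpt. `count_parameters` is a hypothetical helper.

```python
# Values copied from the config.json listing above.
CONFIG = {
    "hidden_size": 3584,
    "intermediate_size": 18944,
    "num_hidden_layers": 28,
    "num_attention_heads": 28,
    "num_key_value_heads": 4,
    "vocab_size": 152064,
    "tie_word_embeddings": False,
}


def count_parameters(cfg: dict) -> int:
    hidden = cfg["hidden_size"]
    head_dim = hidden // cfg["num_attention_heads"]   # 128 here
    kv_dim = head_dim * cfg["num_key_value_heads"]    # grouped-query K/V width
    attention = (
        hidden * hidden + hidden          # q_proj weight + bias
        + 2 * (hidden * kv_dim + kv_dim)  # k_proj and v_proj weight + bias
        + hidden * hidden                 # o_proj weight (no bias assumed)
    )
    mlp = 3 * hidden * cfg["intermediate_size"]  # gate, up, down projections
    norms = 2 * hidden                           # two norm weights per layer
    per_layer = attention + mlp + norms
    embeddings = cfg["vocab_size"] * hidden
    lm_head = 0 if cfg["tie_word_embeddings"] else embeddings
    final_norm = hidden                          # assumed final norm weight
    return cfg["num_hidden_layers"] * per_layer + embeddings + lm_head + final_norm


total = count_parameters(CONFIG)
print(total, total * 2)  # parameters, and bytes at 2 bytes per bfloat16 value
```

Under these assumptions the count is 7,615,616,512 parameters, and at two bytes each that is 15,231,233,024 bytes, the same figure as `total_size` in `model.safetensors.index.json`.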

1

configuration.json

Normal file

@@ -0,0 +1 @@

{"framework": "pytorch", "task": "text-generation", "allow_remote": true}

10

generation_config.json

Normal file

@@ -0,0 +1,10 @@

{
  "bos_token_id": 151643,
  "eos_token_id": [
    151645,
    151643
  ],
  "max_length": 4096,
  "pad_token_id": 151665,
  "transformers_version": "4.44.2"
}
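
This generation config lists two end-of-sequence IDs, so generation is expected to stop at whichever appears first. The sketch below shows the same rule applied by hand to a list of token IDs. `truncate_at_eos` is a hypothetical helper and the sample IDs in the usage line are made up for illustration.

```python
# eos_token_id values from the generation_config.json listing above.
EOS_TOKEN_IDS = frozenset({151645, 151643})


def truncate_at_eos(token_ids, eos_ids=EOS_TOKEN_IDS):
    """Keep tokens up to, but not including, the first end-of-sequence ID."""
    kept = []
    for token_id in token_ids:
        if token_id in eos_ids:
            break
        kept.append(token_id)
    return kept


# Made-up IDs: two ordinary tokens, an EOS, then padding.
print(truncate_at_eos([100, 200, 151645, 151665]))
```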

151388

merges.txt

Normal file

File diff suppressed because it is too large

3

model-00001-of-00004.safetensors

Normal file

@@ -0,0 +1,3 @@

version https://git-lfs.github.com/spec/v1
oid sha256:3786a6f63ce74a8abe0681d5c8aa42e6d993bf0ca3b2d0456d9ee77f0ed6a15a
size 3945426872

3

model-00002-of-00004.safetensors

Normal file

@@ -0,0 +1,3 @@

version https://git-lfs.github.com/spec/v1
oid sha256:dc46d5d5f0854b6134eb15a1eaf1259d77581e53da130fb3caf21136d43ce1b3
size 3864726352

3

model-00003-of-00004.safetensors

Normal file

@@ -0,0 +1,3 @@

version https://git-lfs.github.com/spec/v1
oid sha256:d5a9568b23bf8fc72b42108b1f83e7dfa0ca1bfecc3c66a455ae6844718c8679
size 3864726408

3

model-00004-of-00004.safetensors

Normal file

@@ -0,0 +1,3 @@

version https://git-lfs.github.com/spec/v1
oid sha256:26de7d22f00757b22baacee45f32f78b33f1d2f2ea4f3b951c7f2b2eb1801b6a
size 3556392240
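
Each shard above is committed as a three-line Git LFS pointer rather than as the weights themselves. The sketch below parses one pointer and adds up the four shard sizes. `parse_lfs_pointer` is a hypothetical helper, and the pointer text and sizes are copied from the listings above.

```python
def parse_lfs_pointer(text: str) -> dict:
    """Split a Git LFS pointer into its version, hash and size fields."""
    fields = dict(line.split(" ", 1) for line in text.strip().splitlines())
    algorithm, digest = fields["oid"].split(":", 1)
    return {
        "version": fields["version"],
        "hash_algorithm": algorithm,
        "digest": digest,
        "size": int(fields["size"]),
    }


POINTER = """version https://git-lfs.github.com/spec/v1
oid sha256:26de7d22f00757b22baacee45f32f78b33f1d2f2ea4f3b951c7f2b2eb1801b6a
size 3556392240
"""

# Sizes of model-00001 to model-00004, from the four pointers above.
SHARD_SIZES = [3945426872, 3864726352, 3864726408, 3556392240]

print(parse_lfs_pointer(POINTER)["size"])
print(sum(SHARD_SIZES))
```

The shard sizes sum to 15,231,271,872 bytes, which is 38,848 bytes more than the `total_size` of 15,231,233,024 reported in `model.safetensors.index.json`; presumably the difference is per-file header and metadata overhead.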

346

model.safetensors.index.json

Normal file

@@ -0,0 +1,346 @@

{
  "metadata": {
    "total_size": 15231233024
  },
  "weight_map": {
    "lm_head.weight": "model-00004-of-00004.safetensors",
    "model.embed_tokens.weight": "model-00001-of-00004.safetensors",
    "model.layers.0.input_layernorm.weight": "model-00001-of-00004.safetensors",
    "model.layers.0.mlp.down_proj.weight": "model-00001-of-00004.safetensors",
    "model.layers.0.mlp.gate_proj.weight": "model-00001-of-00004.safetensors",
    "model.layers.0.mlp.up_proj.weight": "model-00001-of-00004.safetensors",
    "model.layers.0.post_attention_layernorm.weight": "model-00001-of-00004.safetensors",
    "model.layers.0.self_attn.k_proj.bias": "model-00001-of-00004.safetensors",
    "model.layers.0.self_attn.k_proj.weight": "model-00001-of-00004.safetensors",
    "model.layers.0.self_attn.o_proj.weight": "model-00001-of-00004.safetensors",
    "model.layers.0.self_attn.q_proj.bias": "model-00001-of-00004.safetensors",
    "model.layers.0.self_attn.q_proj.weight": "model-00001-of-00004.safetensors",
    "model.layers.0.self_attn.v_proj.bias": "model-00001-of-00004.safetensors",
    "model.layers.0.self_attn.v_proj.weight": "model-00001-of-00004.safetensors",
    "model.layers.1.input_layernorm.weight": "model-00001-of-00004.safetensors",
    "model.layers.1.mlp.down_proj.weight": "model-00001-of-00004.safetensors",
    "model.layers.1.mlp.gate_proj.weight": "model-00001-of-00004.safetensors",
    "model.layers.1.mlp.up_proj.weight": "model-00001-of-00004.safetensors",
    "model.layers.1.post_attention_layernorm.weight": "model-00001-of-00004.safetensors",
    "model.layers.1.self_attn.k_proj.bias": "model-00001-of-00004.safetensors",
    "model.layers.1.self_attn.k_proj.weight": "model-00001-of-00004.safetensors",
    "model.layers.1.self_attn.o_proj.weight": "model-00001-of-00004.safetensors",
    "model.layers.1.self_attn.q_proj.bias": "model-00001-of-00004.safetensors",
    "model.layers.1.self_attn.q_proj.weight": "model-00001-of-00004.safetensors",
    "model.layers.1.self_attn.v_proj.bias": "model-00001-of-00004.safetensors",
    "model.layers.1.self_attn.v_proj.weight": "model-00001-of-00004.safetensors",
    "model.layers.10.input_layernorm.weight": "model-00002-of-00004.safetensors",
    "model.layers.10.mlp.down_proj.weight": "model-00002-of-00004.safetensors",
    "model.layers.10.mlp.gate_proj.weight": "model-00002-of-00004.safetensors",
    "model.layers.10.mlp.up_proj.weight": "model-00002-of-00004.safetensors",
    "model.layers.10.post_attention_layernorm.weight": "model-00002-of-00004.safetensors",
    "model.layers.10.self_attn.k_proj.bias": "model-00002-of-00004.safetensors",
    "model.layers.10.self_attn.k_proj.weight": "model-00002-of-00004.safetensors",
    "model.layers.10.self_attn.o_proj.weight": "model-00002-of-00004.safetensors",
    "model.layers.10.self_attn.q_proj.bias": "model-00002-of-00004.safetensors",
    "model.layers.10.self_attn.q_proj.weight": "model-00002-of-00004.safetensors",
    "model.layers.10.self_attn.v_proj.bias": "model-00002-of-00004.safetensors",
    "model.layers.10.self_attn.v_proj.weight": "model-00002-of-00004.safetensors",
    "model.layers.11.input_layernorm.weight": "model-00002-of-00004.safetensors",
    "model.layers.11.mlp.down_proj.weight": "model-00002-of-00004.safetensors",
    "model.layers.11.mlp.gate_proj.weight": "model-00002-of-00004.safetensors",
    "model.layers.11.mlp.up_proj.weight": "model-00002-of-00004.safetensors",
    "model.layers.11.post_attention_layernorm.weight": "model-00002-of-00004.safetensors",
    "model.layers.11.self_attn.k_proj.bias": "model-00002-of-00004.safetensors",
    "model.layers.11.self_attn.k_proj.weight": "model-00002-of-00004.safetensors",
    "model.layers.11.self_attn.o_proj.weight": "model-00002-of-00004.safetensors",
    "model.layers.11.self_attn.q_proj.bias": "model-00002-of-00004.safetensors",
    "model.layers.11.self_attn.q_proj.weight": "model-00002-of-00004.safetensors",
    "model.layers.11.self_attn.v_proj.bias": "model-00002-of-00004.safetensors",
    "model.layers.11.self_attn.v_proj.weight": "model-00002-of-00004.safetensors",
    "model.layers.12.input_layernorm.weight": "model-00002-of-00004.safetensors",
    "model.layers.12.mlp.down_proj.weight": "model-00002-of-00004.safetensors",
    "model.layers.12.mlp.gate_proj.weight": "model-00002-of-00004.safetensors",
    "model.layers.12.mlp.up_proj.weight": "model-00002-of-00004.safetensors",
    "model.layers.12.post_attention_layernorm.weight": "model-00002-of-00004.safetensors",
    "model.layers.12.self_attn.k_proj.bias": "model-00002-of-00004.safetensors",
    "model.layers.12.self_attn.k_proj.weight": "model-00002-of-00004.safetensors",
    "model.layers.12.self_attn.o_proj.weight": "model-00002-of-00004.safetensors",
    "model.layers.12.self_attn.q_proj.bias": "model-00002-of-00004.safetensors",
    "model.layers.12.self_attn.q_proj.weight": "model-00002-of-00004.safetensors",
    "model.layers.12.self_attn.v_proj.bias": "model-00002-of-00004.safetensors",
    "model.layers.12.self_attn.v_proj.weight": "model-00002-of-00004.safetensors",
    "model.layers.13.input_layernorm.weight": "model-00002-of-00004.safetensors",
    "model.layers.13.mlp.down_proj.weight": "model-00002-of-00004.safetensors",
    "model.layers.13.mlp.gate_proj.weight": "model-00002-of-00004.safetensors",
    "model.layers.13.mlp.up_proj.weight": "model-00002-of-00004.safetensors",
    "model.layers.13.post_attention_layernorm.weight": "model-00002-of-00004.safetensors",
    "model.layers.13.self_attn.k_proj.bias": "model-00002-of-00004.safetensors",
    "model.layers.13.self_attn.k_proj.weight": "model-00002-of-00004.safetensors",
    "model.layers.13.self_attn.o_proj.weight": "model-00002-of-00004.safetensors",
    "model.layers.13.self_attn.q_proj.bias": "model-00002-of-00004.safetensors",
    "model.layers.13.self_attn.q_proj.weight": "model-00002-of-00004.safetensors",
    "model.layers.13.self_attn.v_proj.bias": "model-00002-of-00004.safetensors",
    "model.layers.13.self_attn.v_proj.weight": "model-00002-of-00004.safetensors",
    "model.layers.14.input_layernorm.weight": "model-00003-of-00004.safetensors",
    "model.layers.14.mlp.down_proj.weight": "model-00003-of-00004.safetensors",
    "model.layers.14.mlp.gate_proj.weight": "model-00002-of-00004.safetensors",
    "model.layers.14.mlp.up_proj.weight": "model-00003-of-00004.safetensors",
    "model.layers.14.post_attention_layernorm.weight": "model-00003-of-00004.safetensors",
    "model.layers.14.self_attn.k_proj.bias": "model-00002-of-00004.safetensors",
    "model.layers.14.self_attn.k_proj.weight": "model-00002-of-00004.safetensors",
    "model.layers.14.self_attn.o_proj.weight": "model-00002-of-00004.safetensors",
    "model.layers.14.self_attn.q_proj.bias": "model-00002-of-00004.safetensors",
    "model.layers.14.self_attn.q_proj.weight": "model-00002-of-00004.safetensors",
    "model.layers.14.self_attn.v_proj.bias": "model-00002-of-00004.safetensors",
    "model.layers.14.self_attn.v_proj.weight": "model-00002-of-00004.safetensors",
    "model.layers.15.input_layernorm.weight": "model-00003-of-00004.safetensors",
    "model.layers.15.mlp.down_proj.weight": "model-00003-of-00004.safetensors",
    "model.layers.15.mlp.gate_proj.weight": "model-00003-of-00004.safetensors",
    "model.layers.15.mlp.up_proj.weight": "model-00003-of-00004.safetensors",
    "model.layers.15.post_attention_layernorm.weight": "model-00003-of-00004.safetensors",
    "model.layers.15.self_attn.k_proj.bias": "model-00003-of-00004.safetensors",
    "model.layers.15.self_attn.k_proj.weight": "model-00003-of-00004.safetensors",
    "model.layers.15.self_attn.o_proj.weight": "model-00003-of-00004.safetensors",
    "model.layers.15.self_attn.q_proj.bias": "model-00003-of-00004.safetensors",
    "model.layers.15.self_attn.q_proj.weight": "model-00003-of-00004.safetensors",
    "model.layers.15.self_attn.v_proj.bias": "model-00003-of-00004.safetensors",
    "model.layers.15.self_attn.v_proj.weight": "model-00003-of-00004.safetensors",
    "model.layers.16.input_layernorm.weight": "model-00003-of-00004.safetensors",
    "model.layers.16.mlp.down_proj.weight": "model-00003-of-00004.safetensors",
    "model.layers.16.mlp.gate_proj.weight": "model-00003-of-00004.safetensors",
    "model.layers.16.mlp.up_proj.weight": "model-00003-of-00004.safetensors",
    "model.layers.16.post_attention_layernorm.weight": "model-00003-of-00004.safetensors",
    "model.layers.16.self_attn.k_proj.bias": "model-00003-of-00004.safetensors",
    "model.layers.16.self_attn.k_proj.weight": "model-00003-of-00004.safetensors",
    "model.layers.16.self_attn.o_proj.weight": "model-00003-of-00004.safetensors",
    "model.layers.16.self_attn.q_proj.bias": "model-00003-of-00004.safetensors",
    "model.layers.16.self_attn.q_proj.weight": "model-00003-of-00004.safetensors",
    "model.layers.16.self_attn.v_proj.bias": "model-00003-of-00004.safetensors",
    "model.layers.16.self_attn.v_proj.weight": "model-00003-of-00004.safetensors",
    "model.layers.17.input_layernorm.weight": "model-00003-of-00004.safetensors",
    "model.layers.17.mlp.down_proj.weight": "model-00003-of-00004.safetensors",
    "model.layers.17.mlp.gate_proj.weight": "model-00003-of-00004.safetensors",
    "model.layers.17.mlp.up_proj.weight": "model-00003-of-00004.safetensors",
    "model.layers.17.post_attention_layernorm.weight": "model-00003-of-00004.safetensors",
    "model.layers.17.self_attn.k_proj.bias": "model-00003-of-00004.safetensors",
    "model.layers.17.self_attn.k_proj.weight": "model-00003-of-00004.safetensors",
    "model.layers.17.self_attn.o_proj.weight": "model-00003-of-00004.safetensors",
    "model.layers.17.self_attn.q_proj.bias": "model-00003-of-00004.safetensors",
    "model.layers.17.self_attn.q_proj.weight": "model-00003-of-00004.safetensors",
    "model.layers.17.self_attn.v_proj.bias": "model-00003-of-00004.safetensors",
    "model.layers.17.self_attn.v_proj.weight": "model-00003-of-00004.safetensors",
    "model.layers.18.input_layernorm.weight": "model-00003-of-00004.safetensors",
    "model.layers.18.mlp.down_proj.weight": "model-00003-of-00004.safetensors",
    "model.layers.18.mlp.gate_proj.weight": "model-00003-of-00004.safetensors",
    "model.layers.18.mlp.up_proj.weight": "model-00003-of-00004.safetensors",
    "model.layers.18.post_attention_layernorm.weight": "model-00003-of-00004.safetensors",
    "model.layers.18.self_attn.k_proj.bias": "model-00003-of-00004.safetensors",
    "model.layers.18.self_attn.k_proj.weight": "model-00003-of-00004.safetensors",
    "model.layers.18.self_attn.o_proj.weight": "model-00003-of-00004.safetensors",
    "model.layers.18.self_attn.q_proj.bias": "model-00003-of-00004.safetensors",
    "model.layers.18.self_attn.q_proj.weight": "model-00003-of-00004.safetensors",
    "model.layers.18.self_attn.v_proj.bias": "model-00003-of-00004.safetensors",
    "model.layers.18.self_attn.v_proj.weight": "model-00003-of-00004.safetensors",
    "model.layers.19.input_layernorm.weight": "model-00003-of-00004.safetensors",
    "model.layers.19.mlp.down_proj.weight": "model-00003-of-00004.safetensors",
    "model.layers.19.mlp.gate_proj.weight": "model-00003-of-00004.safetensors",
    "model.layers.19.mlp.up_proj.weight": "model-00003-of-00004.safetensors",
    "model.layers.19.post_attention_layernorm.weight": "model-00003-of-00004.safetensors",
    "model.layers.19.self_attn.k_proj.bias": "model-00003-of-00004.safetensors",
    "model.layers.19.self_attn.k_proj.weight": "model-00003-of-00004.safetensors",
    "model.layers.19.self_attn.o_proj.weight": "model-00003-of-00004.safetensors",
    "model.layers.19.self_attn.q_proj.bias": "model-00003-of-00004.safetensors",
    "model.layers.19.self_attn.q_proj.weight": "model-00003-of-00004.safetensors",
    "model.layers.19.self_attn.v_proj.bias": "model-00003-of-00004.safetensors",
    "model.layers.19.self_attn.v_proj.weight": "model-00003-of-00004.safetensors",
    "model.layers.2.input_layernorm.weight": "model-00001-of-00004.safetensors",
    "model.layers.2.mlp.down_proj.weight": "model-00001-of-00004.safetensors",
    "model.layers.2.mlp.gate_proj.weight": "model-00001-of-00004.safetensors",
    "model.layers.2.mlp.up_proj.weight": "model-00001-of-00004.safetensors",
    "model.layers.2.post_attention_layernorm.weight": "model-00001-of-00004.safetensors",
    "model.layers.2.self_attn.k_proj.bias": "model-00001-of-00004.safetensors",
    "model.layers.2.self_attn.k_proj.weight": "model-00001-of-00004.safetensors",
    "model.layers.2.self_attn.o_proj.weight": "model-00001-of-00004.safetensors",
    "model.layers.2.self_attn.q_proj.bias": "model-00001-of-00004.safetensors",
    "model.layers.2.self_attn.q_proj.weight": "model-00001-of-00004.safetensors",
    "model.layers.2.self_attn.v_proj.bias": "model-00001-of-00004.safetensors",
    "model.layers.2.self_attn.v_proj.weight": "model-00001-of-00004.safetensors",
    "model.layers.20.input_layernorm.weight": "model-00003-of-00004.safetensors",
    "model.layers.20.mlp.down_proj.weight": "model-00003-of-00004.safetensors",
    "model.layers.20.mlp.gate_proj.weight": "model-00003-of-00004.safetensors",
    "model.layers.20.mlp.up_proj.weight": "model-00003-of-00004.safetensors",
    "model.layers.20.post_attention_layernorm.weight": "model-00003-of-00004.safetensors",
    "model.layers.20.self_attn.k_proj.bias": "model-00003-of-00004.safetensors",
    "model.layers.20.self_attn.k_proj.weight": "model-00003-of-00004.safetensors",
    "model.layers.20.self_attn.o_proj.weight": "model-00003-of-00004.safetensors",
    "model.layers.20.self_attn.q_proj.bias": "model-00003-of-00004.safetensors",
    "model.layers.20.self_attn.q_proj.weight": "model-00003-of-00004.safetensors",
    "model.layers.20.self_attn.v_proj.bias": "model-00003-of-00004.safetensors",
    "model.layers.20.self_attn.v_proj.weight": "model-00003-of-00004.safetensors",
    "model.layers.21.input_layernorm.weight": "model-00003-of-00004.safetensors",
    "model.layers.21.mlp.down_proj.weight": "model-00003-of-00004.safetensors",
    "model.layers.21.mlp.gate_proj.weight": "model-00003-of-00004.safetensors",
    "model.layers.21.mlp.up_proj.weight": "model-00003-of-00004.safetensors",
    "model.layers.21.post_attention_layernorm.weight": "model-00003-of-00004.safetensors",
    "model.layers.21.self_attn.k_proj.bias": "model-00003-of-00004.safetensors",
    "model.layers.21.self_attn.k_proj.weight": "model-00003-of-00004.safetensors",
    "model.layers.21.self_attn.o_proj.weight": "model-00003-of-00004.safetensors",
    "model.layers.21.self_attn.q_proj.bias": "model-00003-of-00004.safetensors",
    "model.layers.21.self_attn.q_proj.weight": "model-00003-of-00004.safetensors",
    "model.layers.21.self_attn.v_proj.bias": "model-00003-of-00004.safetensors",
    "model.layers.21.self_attn.v_proj.weight": "model-00003-of-00004.safetensors",
    "model.layers.22.input_layernorm.weight": "model-00004-of-00004.safetensors",
    "model.layers.22.mlp.down_proj.weight": "model-00004-of-00004.safetensors",
    "model.layers.22.mlp.gate_proj.weight": "model-00003-of-00004.safetensors",
    "model.layers.22.mlp.up_proj.weight": "model-00003-of-00004.safetensors",
    "model.layers.22.post_attention_layernorm.weight": "model-00004-of-00004.safetensors",
    "model.layers.22.self_attn.k_proj.bias": "model-00003-of-00004.safetensors",
    "model.layers.22.self_attn.k_proj.weight": "model-00003-of-00004.safetensors",
    "model.layers.22.self_attn.o_proj.weight": "model-00003-of-00004.safetensors",
    "model.layers.22.self_attn.q_proj.bias": "model-00003-of-00004.safetensors",
    "model.layers.22.self_attn.q_proj.weight": "model-00003-of-00004.safetensors",
    "model.layers.22.self_attn.v_proj.bias": "model-00003-of-00004.safetensors",
    "model.layers.22.self_attn.v_proj.weight": "model-00003-of-00004.safetensors",
    "model.layers.23.input_layernorm.weight": "model-00004-of-00004.safetensors",
    "model.layers.23.mlp.down_proj.weight": "model-00004-of-00004.safetensors",
    "model.layers.23.mlp.gate_proj.weight": "model-00004-of-00004.safetensors",
    "model.layers.23.mlp.up_proj.weight": "model-00004-of-00004.safetensors",
    "model.layers.23.post_attention_layernorm.weight": "model-00004-of-00004.safetensors",
    "model.layers.23.self_attn.k_proj.bias": "model-00004-of-00004.safetensors",
    "model.layers.23.self_attn.k_proj.weight": "model-00004-of-00004.safetensors",
    "model.layers.23.self_attn.o_proj.weight": "model-00004-of-00004.safetensors",
    "model.layers.23.self_attn.q_proj.bias": "model-00004-of-00004.safetensors",
    "model.layers.23.self_attn.q_proj.weight": "model-00004-of-00004.safetensors",
    "model.layers.23.self_attn.v_proj.bias": "model-00004-of-00004.safetensors",
    "model.layers.23.self_attn.v_proj.weight": "model-00004-of-00004.safetensors",
    "model.layers.24.input_layernorm.weight": "model-00004-of-00004.safetensors",
    "model.layers.24.mlp.down_proj.weight": "model-00004-of-00004.safetensors",
    "model.layers.24.mlp.gate_proj.weight": "model-00004-of-00004.safetensors",
    "model.layers.24.mlp.up_proj.weight": "model-00004-of-00004.safetensors",
    "model.layers.24.post_attention_layernorm.weight": "model-00004-of-00004.safetensors",
    "model.layers.24.self_attn.k_proj.bias": "model-00004-of-00004.safetensors",
    "model.layers.24.self_attn.k_proj.weight": "model-00004-of-00004.safetensors",
    "model.layers.24.self_attn.o_proj.weight": "model-00004-of-00004.safetensors",
    "model.layers.24.self_attn.q_proj.bias": "model-00004-of-00004.safetensors",
    "model.layers.24.self_attn.q_proj.weight": "model-00004-of-00004.safetensors",
    "model.layers.24.self_attn.v_proj.bias": "model-00004-of-00004.safetensors",
    "model.layers.24.self_attn.v_proj.weight": "model-00004-of-00004.safetensors",
    "model.layers.25.input_layernorm.weight": "model-00004-of-00004.safetensors",

|

||||

"model.layers.25.mlp.down_proj.weight": "model-00004-of-00004.safetensors",

|

||||

"model.layers.25.mlp.gate_proj.weight": "model-00004-of-00004.safetensors",

|

||||

"model.layers.25.mlp.up_proj.weight": "model-00004-of-00004.safetensors",

|

||||

"model.layers.25.post_attention_layernorm.weight": "model-00004-of-00004.safetensors",

|

||||

"model.layers.25.self_attn.k_proj.bias": "model-00004-of-00004.safetensors",

|

||||

"model.layers.25.self_attn.k_proj.weight": "model-00004-of-00004.safetensors",

|

||||

"model.layers.25.self_attn.o_proj.weight": "model-00004-of-00004.safetensors",

|

||||

"model.layers.25.self_attn.q_proj.bias": "model-00004-of-00004.safetensors",

|

||||

"model.layers.25.self_attn.q_proj.weight": "model-00004-of-00004.safetensors",

|

||||

"model.layers.25.self_attn.v_proj.bias": "model-00004-of-00004.safetensors",

|

||||

"model.layers.25.self_attn.v_proj.weight": "model-00004-of-00004.safetensors",

|

||||

"model.layers.26.input_layernorm.weight": "model-00004-of-00004.safetensors",

|

||||

"model.layers.26.mlp.down_proj.weight": "model-00004-of-00004.safetensors",

|

||||

"model.layers.26.mlp.gate_proj.weight": "model-00004-of-00004.safetensors",

|

||||

"model.layers.26.mlp.up_proj.weight": "model-00004-of-00004.safetensors",

|

||||

"model.layers.26.post_attention_layernorm.weight": "model-00004-of-00004.safetensors",

|

||||

"model.layers.26.self_attn.k_proj.bias": "model-00004-of-00004.safetensors",

|

||||

"model.layers.26.self_attn.k_proj.weight": "model-00004-of-00004.safetensors",

|

||||

"model.layers.26.self_attn.o_proj.weight": "model-00004-of-00004.safetensors",

|

||||

"model.layers.26.self_attn.q_proj.bias": "model-00004-of-00004.safetensors",

|

||||

"model.layers.26.self_attn.q_proj.weight": "model-00004-of-00004.safetensors",

|

||||

"model.layers.26.self_attn.v_proj.bias": "model-00004-of-00004.safetensors",

|

||||

"model.layers.26.self_attn.v_proj.weight": "model-00004-of-00004.safetensors",

|

||||

"model.layers.27.input_layernorm.weight": "model-00004-of-00004.safetensors",

|

||||

"model.layers.27.mlp.down_proj.weight": "model-00004-of-00004.safetensors",

|

||||

"model.layers.27.mlp.gate_proj.weight": "model-00004-of-00004.safetensors",

|

||||

"model.layers.27.mlp.up_proj.weight": "model-00004-of-00004.safetensors",

|

||||

"model.layers.27.post_attention_layernorm.weight": "model-00004-of-00004.safetensors",

|

||||

"model.layers.27.self_attn.k_proj.bias": "model-00004-of-00004.safetensors",

|

||||

"model.layers.27.self_attn.k_proj.weight": "model-00004-of-00004.safetensors",

|

||||

"model.layers.27.self_attn.o_proj.weight": "model-00004-of-00004.safetensors",

|

||||

"model.layers.27.self_attn.q_proj.bias": "model-00004-of-00004.safetensors",

|

||||

"model.layers.27.self_attn.q_proj.weight": "model-00004-of-00004.safetensors",

|

||||

"model.layers.27.self_attn.v_proj.bias": "model-00004-of-00004.safetensors",

|

||||

"model.layers.27.self_attn.v_proj.weight": "model-00004-of-00004.safetensors",

|

||||

    "model.layers.3.input_layernorm.weight": "model-00001-of-00004.safetensors",
    "model.layers.3.mlp.down_proj.weight": "model-00001-of-00004.safetensors",
    "model.layers.3.mlp.gate_proj.weight": "model-00001-of-00004.safetensors",
    "model.layers.3.mlp.up_proj.weight": "model-00001-of-00004.safetensors",
    "model.layers.3.post_attention_layernorm.weight": "model-00001-of-00004.safetensors",
    "model.layers.3.self_attn.k_proj.bias": "model-00001-of-00004.safetensors",
    "model.layers.3.self_attn.k_proj.weight": "model-00001-of-00004.safetensors",
    "model.layers.3.self_attn.o_proj.weight": "model-00001-of-00004.safetensors",
    "model.layers.3.self_attn.q_proj.bias": "model-00001-of-00004.safetensors",
    "model.layers.3.self_attn.q_proj.weight": "model-00001-of-00004.safetensors",
    "model.layers.3.self_attn.v_proj.bias": "model-00001-of-00004.safetensors",
    "model.layers.3.self_attn.v_proj.weight": "model-00001-of-00004.safetensors",
    "model.layers.4.input_layernorm.weight": "model-00001-of-00004.safetensors",
    "model.layers.4.mlp.down_proj.weight": "model-00001-of-00004.safetensors",
    "model.layers.4.mlp.gate_proj.weight": "model-00001-of-00004.safetensors",
    "model.layers.4.mlp.up_proj.weight": "model-00001-of-00004.safetensors",
    "model.layers.4.post_attention_layernorm.weight": "model-00001-of-00004.safetensors",
    "model.layers.4.self_attn.k_proj.bias": "model-00001-of-00004.safetensors",
    "model.layers.4.self_attn.k_proj.weight": "model-00001-of-00004.safetensors",
    "model.layers.4.self_attn.o_proj.weight": "model-00001-of-00004.safetensors",
    "model.layers.4.self_attn.q_proj.bias": "model-00001-of-00004.safetensors",
    "model.layers.4.self_attn.q_proj.weight": "model-00001-of-00004.safetensors",
    "model.layers.4.self_attn.v_proj.bias": "model-00001-of-00004.safetensors",
    "model.layers.4.self_attn.v_proj.weight": "model-00001-of-00004.safetensors",
    "model.layers.5.input_layernorm.weight": "model-00001-of-00004.safetensors",
    "model.layers.5.mlp.down_proj.weight": "model-00001-of-00004.safetensors",
    "model.layers.5.mlp.gate_proj.weight": "model-00001-of-00004.safetensors",
    "model.layers.5.mlp.up_proj.weight": "model-00001-of-00004.safetensors",
    "model.layers.5.post_attention_layernorm.weight": "model-00001-of-00004.safetensors",
    "model.layers.5.self_attn.k_proj.bias": "model-00001-of-00004.safetensors",
    "model.layers.5.self_attn.k_proj.weight": "model-00001-of-00004.safetensors",
    "model.layers.5.self_attn.o_proj.weight": "model-00001-of-00004.safetensors",
    "model.layers.5.self_attn.q_proj.bias": "model-00001-of-00004.safetensors",
    "model.layers.5.self_attn.q_proj.weight": "model-00001-of-00004.safetensors",
    "model.layers.5.self_attn.v_proj.bias": "model-00001-of-00004.safetensors",
    "model.layers.5.self_attn.v_proj.weight": "model-00001-of-00004.safetensors",
    "model.layers.6.input_layernorm.weight": "model-00002-of-00004.safetensors",
    "model.layers.6.mlp.down_proj.weight": "model-00002-of-00004.safetensors",
    "model.layers.6.mlp.gate_proj.weight": "model-00002-of-00004.safetensors",
    "model.layers.6.mlp.up_proj.weight": "model-00002-of-00004.safetensors",
    "model.layers.6.post_attention_layernorm.weight": "model-00002-of-00004.safetensors",
    "model.layers.6.self_attn.k_proj.bias": "model-00001-of-00004.safetensors",
    "model.layers.6.self_attn.k_proj.weight": "model-00001-of-00004.safetensors",
    "model.layers.6.self_attn.o_proj.weight": "model-00001-of-00004.safetensors",
    "model.layers.6.self_attn.q_proj.bias": "model-00001-of-00004.safetensors",
    "model.layers.6.self_attn.q_proj.weight": "model-00001-of-00004.safetensors",
    "model.layers.6.self_attn.v_proj.bias": "model-00001-of-00004.safetensors",
    "model.layers.6.self_attn.v_proj.weight": "model-00001-of-00004.safetensors",
    "model.layers.7.input_layernorm.weight": "model-00002-of-00004.safetensors",
    "model.layers.7.mlp.down_proj.weight": "model-00002-of-00004.safetensors",
    "model.layers.7.mlp.gate_proj.weight": "model-00002-of-00004.safetensors",
    "model.layers.7.mlp.up_proj.weight": "model-00002-of-00004.safetensors",
    "model.layers.7.post_attention_layernorm.weight": "model-00002-of-00004.safetensors",
    "model.layers.7.self_attn.k_proj.bias": "model-00002-of-00004.safetensors",
    "model.layers.7.self_attn.k_proj.weight": "model-00002-of-00004.safetensors",
    "model.layers.7.self_attn.o_proj.weight": "model-00002-of-00004.safetensors",
    "model.layers.7.self_attn.q_proj.bias": "model-00002-of-00004.safetensors",
    "model.layers.7.self_attn.q_proj.weight": "model-00002-of-00004.safetensors",
    "model.layers.7.self_attn.v_proj.bias": "model-00002-of-00004.safetensors",
    "model.layers.7.self_attn.v_proj.weight": "model-00002-of-00004.safetensors",
    "model.layers.8.input_layernorm.weight": "model-00002-of-00004.safetensors",
    "model.layers.8.mlp.down_proj.weight": "model-00002-of-00004.safetensors",
    "model.layers.8.mlp.gate_proj.weight": "model-00002-of-00004.safetensors",
    "model.layers.8.mlp.up_proj.weight": "model-00002-of-00004.safetensors",
    "model.layers.8.post_attention_layernorm.weight": "model-00002-of-00004.safetensors",
    "model.layers.8.self_attn.k_proj.bias": "model-00002-of-00004.safetensors",
    "model.layers.8.self_attn.k_proj.weight": "model-00002-of-00004.safetensors",
    "model.layers.8.self_attn.o_proj.weight": "model-00002-of-00004.safetensors",
    "model.layers.8.self_attn.q_proj.bias": "model-00002-of-00004.safetensors",
    "model.layers.8.self_attn.q_proj.weight": "model-00002-of-00004.safetensors",
    "model.layers.8.self_attn.v_proj.bias": "model-00002-of-00004.safetensors",
    "model.layers.8.self_attn.v_proj.weight": "model-00002-of-00004.safetensors",
    "model.layers.9.input_layernorm.weight": "model-00002-of-00004.safetensors",
    "model.layers.9.mlp.down_proj.weight": "model-00002-of-00004.safetensors",
    "model.layers.9.mlp.gate_proj.weight": "model-00002-of-00004.safetensors",
    "model.layers.9.mlp.up_proj.weight": "model-00002-of-00004.safetensors",
    "model.layers.9.post_attention_layernorm.weight": "model-00002-of-00004.safetensors",
    "model.layers.9.self_attn.k_proj.bias": "model-00002-of-00004.safetensors",
    "model.layers.9.self_attn.k_proj.weight": "model-00002-of-00004.safetensors",
    "model.layers.9.self_attn.o_proj.weight": "model-00002-of-00004.safetensors",
    "model.layers.9.self_attn.q_proj.bias": "model-00002-of-00004.safetensors",
    "model.layers.9.self_attn.q_proj.weight": "model-00002-of-00004.safetensors",
    "model.layers.9.self_attn.v_proj.bias": "model-00002-of-00004.safetensors",
    "model.layers.9.self_attn.v_proj.weight": "model-00002-of-00004.safetensors",
    "model.norm.weight": "model-00004-of-00004.safetensors"
  }
}

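The weight map above assigns each tensor name to one of four shard files, and a single layer can straddle two shards (layer 22 does). The following is a minimal sketch of how such an index could be inspected. It inlines a five-entry excerpt of the map instead of reading the real index file, and the helper names `tensors_by_shard` and `shards_for_prefix` are hypothetical, not part of any library.

```python
import json
from collections import defaultdict

# Excerpt of the weight_map shown above, inlined so the sketch runs
# without the real index file on disk.
index_json = """
{
  "weight_map": {
    "model.layers.22.input_layernorm.weight": "model-00004-of-00004.safetensors",
    "model.layers.22.mlp.down_proj.weight": "model-00004-of-00004.safetensors",
    "model.layers.22.mlp.gate_proj.weight": "model-00003-of-00004.safetensors",
    "model.layers.22.self_attn.q_proj.weight": "model-00003-of-00004.safetensors",
    "model.norm.weight": "model-00004-of-00004.safetensors"
  }
}
"""


def tensors_by_shard(index):
    """Invert the map: shard file name -> list of tensor names stored in it."""
    shards = defaultdict(list)
    for tensor_name, shard_file in index["weight_map"].items():
        shards[shard_file].append(tensor_name)
    return dict(shards)


def shards_for_prefix(index, prefix):
    """Return the sorted shard files needed to load every tensor under a prefix."""
    return sorted(
        {
            shard_file
            for tensor_name, shard_file in index["weight_map"].items()
            if tensor_name.startswith(prefix)
        }
    )


index = json.loads(index_json)
by_shard = tensors_by_shard(index)
layer22_shards = shards_for_prefix(index, "model.layers.22.")

for shard_file in sorted(by_shard):
    print(shard_file, len(by_shard[shard_file]))
print("layer 22 needs:", layer22_shards)
```

Loading a single layer may therefore require opening more than one shard file, which is why loaders typically consult the index rather than assuming one layer per file.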
31

special_tokens_map.json

Normal file

@@ -0,0 +1,31 @@

{
  "additional_special_tokens": [
    "<|im_start|>",
    "<|im_end|>",
    "<|object_ref_start|>",
    "<|object_ref_end|>",
    "<|box_start|>",
    "<|box_end|>",
    "<|quad_start|>",
    "<|quad_end|>",
    "<|vision_start|>",
    "<|vision_end|>",
    "<|vision_pad|>",
    "<|image_pad|>",
    "<|video_pad|>"
  ],
  "eos_token": {
    "content": "<|im_end|>",
    "lstrip": false,
    "normalized": false,
    "rstrip": false,
    "single_word": false
  },
  "pad_token": {
    "content": "<|PAD_TOKEN|>",
    "lstrip": false,
    "normalized": false,
    "rstrip": false,
    "single_word": false
  }
}

303292

tokenizer.json

Normal file

File diff suppressed because it is too large

216

tokenizer_config.json

Normal file

@@ -0,0 +1,216 @@

{
  "add_bos_token": false,
  "add_prefix_space": false,
  "added_tokens_decoder": {
    "151643": {
      "content": "<|endoftext|>",
      "lstrip": false,
      "normalized": false,
      "rstrip": false,
      "single_word": false,
      "special": true
    },
    "151644": {
      "content": "<|im_start|>",
      "lstrip": false,
      "normalized": false,
      "rstrip": false,
      "single_word": false,
      "special": true
    },
    "151645": {
      "content": "<|im_end|>",
      "lstrip": false,
      "normalized": false,
      "rstrip": false,
      "single_word": false,
      "special": true
    },
    "151646": {
      "content": "<|object_ref_start|>",
      "lstrip": false,
      "normalized": false,
      "rstrip": false,
      "single_word": false,
      "special": true
    },
    "151647": {
      "content": "<|object_ref_end|>",
      "lstrip": false,
      "normalized": false,
      "rstrip": false,
      "single_word": false,
      "special": true
    },
    "151648": {
      "content": "<|box_start|>",
      "lstrip": false,
      "normalized": false,
      "rstrip": false,
      "single_word": false,
      "special": true
    },
    "151649": {
      "content": "<|box_end|>",
      "lstrip": false,
      "normalized": false,
      "rstrip": false,
      "single_word": false,
      "special": true
    },
    "151650": {
      "content": "<|quad_start|>",
      "lstrip": false,
      "normalized": false,
      "rstrip": false,
      "single_word": false,
      "special": true
    },
    "151651": {
      "content": "<|quad_end|>",
      "lstrip": false,
      "normalized": false,
      "rstrip": false,
      "single_word": false,
      "special": true
    },
    "151652": {
      "content": "<|vision_start|>",
      "lstrip": false,
      "normalized": false,
      "rstrip": false,
      "single_word": false,
      "special": true
    },
    "151653": {
      "content": "<|vision_end|>",
      "lstrip": false,
      "normalized": false,
      "rstrip": false,
      "single_word": false,
      "special": true
    },
    "151654": {
      "content": "<|vision_pad|>",
      "lstrip": false,
      "normalized": false,
      "rstrip": false,
      "single_word": false,
      "special": true
    },
    "151655": {
      "content": "<|image_pad|>",
      "lstrip": false,
      "normalized": false,
      "rstrip": false,
      "single_word": false,
      "special": true
    },
    "151656": {
      "content": "<|video_pad|>",
      "lstrip": false,
      "normalized": false,
      "rstrip": false,
      "single_word": false,
      "special": true
    },
    "151657": {
      "content": "<tool_call>",
      "lstrip": false,
      "normalized": false,
      "rstrip": false,
      "single_word": false,
      "special": false
    },
    "151658": {
      "content": "</tool_call>",
      "lstrip": false,
      "normalized": false,
      "rstrip": false,
      "single_word": false,
      "special": false
    },
    "151659": {
      "content": "<|fim_prefix|>",
      "lstrip": false,
      "normalized": false,
      "rstrip": false,
      "single_word": false,
      "special": false
    },
    "151660": {
      "content": "<|fim_middle|>",
      "lstrip": false,
      "normalized": false,
      "rstrip": false,
      "single_word": false,
      "special": false
    },
    "151661": {
      "content": "<|fim_suffix|>",
      "lstrip": false,
      "normalized": false,
      "rstrip": false,
      "single_word": false,
      "special": false
    },
    "151662": {
      "content": "<|fim_pad|>",
      "lstrip": false,
      "normalized": false,
      "rstrip": false,
      "single_word": false,
      "special": false
    },
    "151663": {
      "content": "<|repo_name|>",
      "lstrip": false,
      "normalized": false,
      "rstrip": false,
      "single_word": false,
      "special": false
    },
    "151664": {
      "content": "<|file_sep|>",
      "lstrip": false,
      "normalized": false,
      "rstrip": false,
      "single_word": false,
      "special": false
    },
    "151665": {
      "content": "<|PAD_TOKEN|>",
      "lstrip": false,
      "normalized": false,
      "rstrip": false,
      "single_word": false,
      "special": true
    }
  },
  "additional_special_tokens": [
    "<|im_start|>",
    "<|im_end|>",
    "<|object_ref_start|>",
    "<|object_ref_end|>",
    "<|box_start|>",
    "<|box_end|>",
    "<|quad_start|>",
    "<|quad_end|>",
    "<|vision_start|>",
    "<|vision_end|>",
    "<|vision_pad|>",
    "<|image_pad|>",
    "<|video_pad|>"
  ],
  "bos_token": null,
  "chat_template": "{%- if tools %}\n {{- '<|im_start|>system\\n' }}\n {%- if messages[0]['role'] == 'system' %}\n {{- messages[0]['content'] }}\n {%- else %}\n {{- 'Please reason step by step, and put your final answer within \\\\boxed{}.' }}\n {%- endif %}\n {{- \"\\n\\n# Tools\\n\\nYou may call one or more functions to assist with the user query.\\n\\nYou are provided with function signatures within <tools></tools> XML tags:\\n<tools>\" }}\n {%- for tool in tools %}\n {{- \"\\n\" }}\n {{- tool | tojson }}\n {%- endfor %}\n {{- \"\\n</tools>\\n\\nFor each function call, return a json object with function name and arguments within <tool_call></tool_call> XML tags:\\n<tool_call>\\n{\\\"name\\\": <function-name>, \\\"arguments\\\": <args-json-object>}\\n</tool_call><|im_end|>\\n\" }}\n{%- else %}\n {%- if messages[0]['role'] == 'system' %}\n {{- '<|im_start|>system\\n' + messages[0]['content'] + '<|im_end|>\\n' }}\n {%- else %}\n {{- '<|im_start|>system\\nPlease reason step by step, and put your final answer within \\\\boxed{}.<|im_end|>\\n' }}\n {%- endif %}\n{%- endif %}\n{%- for message in messages %}\n {%- if (message.role == \"user\") or (message.role == \"system\" and not loop.first) or (message.role == \"assistant\" and not message.tool_calls) %}\n {{- '<|im_start|>' + message.role + '\\n' + message.content + '<|im_end|>' + '\\n' }}\n {%- elif message.role == \"assistant\" %}\n {{- '<|im_start|>' + message.role }}\n {%- if message.content %}\n {{- '\\n' + message.content }}\n {%- endif %}\n {%- for tool_call in message.tool_calls %}\n {%- if tool_call.function is defined %}\n {%- set tool_call = tool_call.function %}\n {%- endif %}\n {{- '\\n<tool_call>\\n{\"name\": \"' }}\n {{- tool_call.name }}\n {{- '\", \"arguments\": ' }}\n {{- tool_call.arguments | tojson }}\n {{- '}\\n</tool_call>' }}\n {%- endfor %}\n {{- '<|im_end|>\\n' }}\n {%- elif message.role == \"tool\" %}\n {%- if (loop.index0 == 0) or (messages[loop.index0 - 1].role != \"tool\") %}\n {{- '<|im_start|>user' }}\n {%- endif %}\n {{- '\\n<tool_response>\\n' }}\n {{- message.content }}\n {{- '\\n</tool_response>' }}\n {%- if loop.last or (messages[loop.index0 + 1].role != \"tool\") %}\n {{- '<|im_end|>\\n' }}\n {%- endif %}\n {%- endif %}\n{%- endfor %}\n{%- if add_generation_prompt %}\n {{- '<|im_start|>assistant\\n' }}\n{%- endif %}\n",
  "clean_up_tokenization_spaces": false,
  "eos_token": "<|im_end|>",
  "errors": "replace",
  "model_max_length": 131072,
  "pad_token": "<|PAD_TOKEN|>",
  "padding_side": "left",
  "split_special_tokens": false,
  "tokenizer_class": "Qwen2Tokenizer",
  "unk_token": null
}

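The `chat_template` above is a Jinja template. As a rough illustration of what its no-tools branch produces, the sketch below re-implements that branch by hand in plain Python. The function name `render_chat` is hypothetical, it covers only `system`, `user`, and `assistant` messages without tool calls, and it is not how the tokenizer itself renders prompts (that is normally done through the tokenizer's own chat-template support).

```python
# Default system prompt taken from the chat_template above; after JSON and
# Jinja unescaping it contains a single backslash before "boxed".
DEFAULT_SYSTEM = (
    "Please reason step by step, and put your final answer within \\boxed{}."
)


def render_chat(messages, add_generation_prompt=True):
    """Hand-written approximation of the template's no-tools branch."""
    parts = []

    # The template emits exactly one leading system block: the caller's,
    # if the first message is a system message, else the default prompt.
    has_system = bool(messages) and messages[0]["role"] == "system"
    system_text = messages[0]["content"] if has_system else DEFAULT_SYSTEM
    parts.append("<|im_start|>system\n" + system_text + "<|im_end|>\n")

    for i, message in enumerate(messages):
        if i == 0 and message["role"] == "system":
            continue  # already emitted as the leading system block
        parts.append(
            "<|im_start|>" + message["role"] + "\n"
            + message["content"] + "<|im_end|>\n"
        )

    # Open an assistant turn so the model continues from here.
    if add_generation_prompt:
        parts.append("<|im_start|>assistant\n")
    return "".join(parts)


prompt = render_chat([{"role": "user", "content": "What is 2+2?"}])
print(prompt)
```

Because `eos_token` is `<|im_end|>`, generation for a prompt built this way would be expected to stop when the model closes its assistant turn.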
1

vocab.json

Normal file

File diff suppressed because one or more lines are too long
