Model: rhaymison/Mistral-portuguese-luana-7b Source: Original Platform

| Field | Value |
|---|---|
| license | apache-2.0 |
| library_name | transformers |
| base_model | mistralai/Mistral-7B-Instruct-v0.2 |
| pipeline_tag | text-generation |

Mistral-portuguese-luana-7b

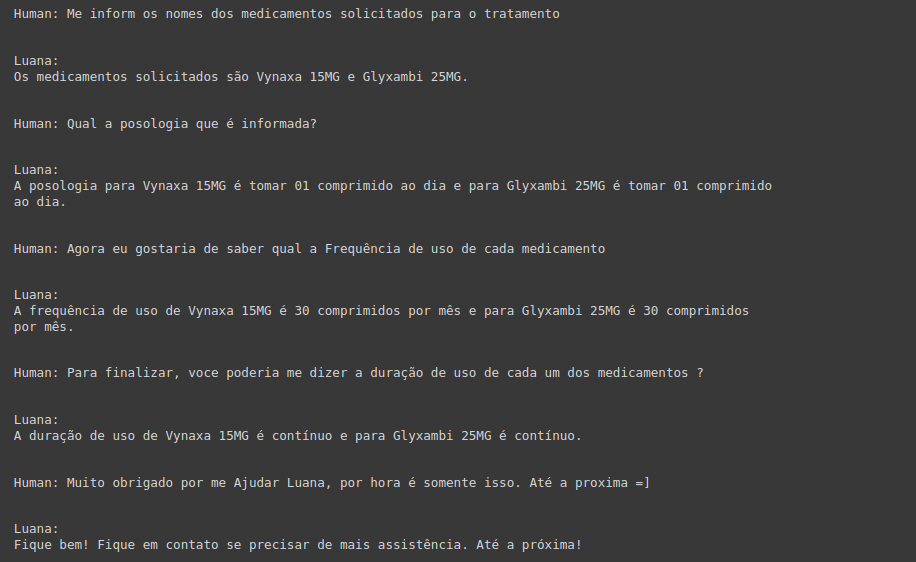

This model was fine-tuned on a superset of 200,000 Portuguese instructions to help fill the gap in Portuguese-language models. Tuned from Mistral 7B, it was adapted mainly for instruction-following tasks.

For broader compatibility, Luana also has a family of GGUF models that can be run with llama.cpp. You can explore the GGUF models starting with the one below:

Explore this and other models to find the best fit for your needs!

How to use

| Precision | Suggested GPU |
|---|---|
| Full model | A100 |
| Half model | L4 |
| 8-bit or 4-bit | T4 or V100 |
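As a rough illustration of why these GPU tiers differ, the memory taken by the weights alone is approximately the parameter count times the bytes per parameter. The sketch below assumes about 7.24 billion parameters for Mistral 7B and assumes "full model" means fp32; it ignores activations, the KV cache, and CUDA/framework overhead, so real usage will be higher.

```python
# Rough, weight-only memory estimate for a ~7B-parameter model.
# Assumptions: ~7.24e9 parameters (Mistral 7B) and "full model" = fp32.
# Activations, KV cache and CUDA/framework overhead are NOT included.
NUM_PARAMS = 7.24e9

# Approximate bytes per parameter for each precision
# (4-bit ignores the small overhead of the quantization constants).
BYTES_PER_PARAM = {
    "fp32 (full)": 4.0,
    "fp16/bf16 (half)": 2.0,
    "int8 (8-bit)": 1.0,
    "nf4 (4-bit)": 0.5,
}


def weight_memory_gib(num_params: float, bytes_per_param: float) -> float:
    """Return the memory needed for the weights alone, in GiB."""
    return num_params * bytes_per_param / 1024**3


if __name__ == "__main__":
    for name, nbytes in BYTES_PER_PARAM.items():
        print(f"{name:>18}: ~{weight_memory_gib(NUM_PARAMS, nbytes):.1f} GiB")
```

With these assumptions, fp32 weights need roughly 27 GiB and fp16 roughly 13.5 GiB, which is consistent with the A100 and L4 suggestions above.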

You can run the model at full precision or quantized down to 4 bits; both approaches are shown below. Verbs matter in your prompt: tell the model how to act or behave to guide it toward the response you want. Details like these help models, even smaller 7B models, perform much better.
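Following that advice, the sketch below wraps a verb-led instruction (for example "aja como...", "explique...") in the same `[INST]` template used in the streaming example. The names `build_prompt`, `HEADER`, and `TASK` are hypothetical, and joining the template lines with single newlines is an assumption; the exact whitespace of the training template is not documented here.

```python
# Hypothetical helper: wraps an instruction in the Portuguese [INST] template
# from this model card's streaming example. Single-newline separators are an
# assumption, not a documented requirement of the model.

# "Below is an instruction that describes a task, together with an input
# that provides more context."
HEADER = (
    "Abaixo está uma instrução que descreve uma tarefa, juntamente com uma "
    "entrada que fornece mais contexto."
)
# "Write a response that appropriately completes the request."
TASK = "Escreva uma resposta que complete adequadamente o pedido."


def build_prompt(instruction: str) -> str:
    """Build a prompt; start `instruction` with a verb to steer the model."""
    instruction = instruction.strip()
    if not instruction:
        raise ValueError("instruction must not be empty")
    return f"<s>[INST] {HEADER}\n{TASK}\n### instrução: {instruction}\n[/INST]"


if __name__ == "__main__":
    print(build_prompt("aja como um professor de matemática e me explique porque 2 + 2 = 4."))
```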

```python
# Install dependencies (Colab/Jupyter syntax)
!pip install -q -U transformers
!pip install -q -U accelerate
!pip install -q -U bitsandbytes

from transformers import AutoModelForCausalLM, AutoTokenizer, TextStreamer

# Load the model onto GPU 0, plus its tokenizer
model = AutoModelForCausalLM.from_pretrained("rhaymison/Mistral-portuguese-luana-7b", device_map={"": 0})
tokenizer = AutoTokenizer.from_pretrained("rhaymison/Mistral-portuguese-luana-7b")

model.eval()  # inference mode (disables dropout)
```

You can also run the model through a pipeline, but this example streams the output instead.

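```python
# Prompt in Portuguese. English translation: "Below is an instruction that
# describes a task, together with an input that provides more context.
# Write a response that appropriately completes the request.
# ### instruction: act as a math teacher and explain to me why 2 + 2 = 4."
inputs = tokenizer(["""<s>[INST] Abaixo está uma instrução que descreve uma tarefa, juntamente com uma entrada que fornece mais contexto.
Escreva uma resposta que complete adequadamente o pedido.
### instrução: aja como um professor de matemática e me explique porque 2 + 2 = 4.
[/INST]"""], return_tensors="pt")

inputs = inputs.to(model.device)

# Print tokens as they are generated, without echoing the prompt
streamer = TextStreamer(tokenizer, skip_prompt=True, skip_special_tokens=True)
_ = model.generate(**inputs, streamer=streamer, max_new_tokens=200)
```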
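If you call `model.generate` without a streamer and decode the whole output, the decoded text generally contains the prompt followed by the answer. One simple way to keep only the answer is to split on the last `[/INST]` marker. `extract_answer` is a hypothetical helper, and it assumes the marker survives decoding as plain text; slicing off the first `inputs["input_ids"].shape[1]` tokens before decoding is a more robust alternative.

```python
# Hypothetical helper for non-streaming use: keep only the text after the
# last "[/INST]" marker. Assumes the decoded output still contains that
# marker as plain text (i.e. it was not stripped as a special token).
def extract_answer(decoded: str, marker: str = "[/INST]") -> str:
    """Return the answer portion of a decoded prompt+completion string."""
    _, _, tail = decoded.rpartition(marker)
    # If the marker is missing, rpartition returns the whole string as `tail`.
    return tail.strip()


# Usage with the objects from the example above (not executed here):
# output = model.generate(**inputs, max_new_tokens=200)
# text = tokenizer.decode(output[0], skip_special_tokens=True)
# print(extract_answer(text))

if __name__ == "__main__":
    print(extract_answer("[INST] explique 2 + 2 [/INST] 2 + 2 = 4 porque..."))
```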

If you run into memory errors such as "CUDA out of memory", use 4-bit or 8-bit quantization. In Colab, the full model needs an A100; for 4-bit or 8-bit, a T4 or L4 is already enough.

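4-bit example

```python
import torch
from transformers import AutoModelForCausalLM, BitsAndBytesConfig

# 4-bit NF4 quantization with bfloat16 compute and double quantization.
# On GPUs without native bfloat16 support (e.g. T4, V100), torch.float16
# may be needed as the compute dtype instead.
bnb_config = BitsAndBytesConfig(
    load_in_4bit=True,
    bnb_4bit_quant_type="nf4",
    bnb_4bit_compute_dtype=torch.bfloat16,
    bnb_4bit_use_double_quant=True,
)

model = AutoModelForCausalLM.from_pretrained(
    "rhaymison/Mistral-portuguese-luana-7b",
    quantization_config=bnb_config,
    device_map={"": 0},
)
```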
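`bnb_4bit_use_double_quant=True` also quantizes the per-block quantization constants. Assuming the block sizes described in the QLoRA paper (one fp32 constant per 64 weights; with double quantization, 8-bit constants plus one fp32 constant per 256 of them), the overhead drops from 32/64 = 0.5 to 8/64 + 32/(64·256) ≈ 0.127 bits per parameter. The sketch below reproduces that arithmetic and, assuming about 7.24 billion parameters, estimates the savings for this model.

```python
# Overhead of the 4-bit quantization constants, in bits per parameter.
# Assumption: block sizes as described in the QLoRA paper.
BLOCK = 64     # weights per first-level constant
BLOCK2 = 256   # first-level constants per second-level fp32 constant


def overhead_bits(double_quant: bool) -> float:
    """Bits per parameter spent on quantization constants."""
    if not double_quant:
        return 32 / BLOCK  # one fp32 absmax per 64 weights
    # 8-bit absmax per 64 weights, plus one fp32 constant per 64*256 weights
    return 8 / BLOCK + 32 / (BLOCK * BLOCK2)


NUM_PARAMS = 7.24e9  # assumed parameter count for Mistral 7B

saved_bits = overhead_bits(False) - overhead_bits(True)
saved_mib = saved_bits * NUM_PARAMS / 8 / 1024**2

if __name__ == "__main__":
    print(f"saved per parameter: {saved_bits:.3f} bits")
    print(f"saved for the model: ~{saved_mib:.0f} MiB")
```

The saving is modest for a 7B model (a few hundred MiB), but it can matter on 16 GB cards such as the T4.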

LangChain

Open Portuguese LLM Leaderboard Evaluation Results

Detailed results can be found here

| Metric | Value |
|---|---|
| Average | 64.27 |
| ENEM Challenge (No Images) | 58.64 |
| BLUEX (No Images) | 47.98 |
| OAB Exams | 38.82 |
| Assin2 RTE | 90.63 |
| Assin2 STS | 75.81 |
| FaQuAD NLI | 57.79 |
| HateBR Binary | 77.24 |
| PT Hate Speech Binary | 68.50 |
| tweetSentBR | 63 |
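The Average row appears to be the unweighted mean of the nine task scores. The short check below reproduces it from the table values, using the tweetSentBR score exactly as listed (63).

```python
from statistics import mean

# Task scores copied from the table above
scores = {
    "ENEM Challenge (No Images)": 58.64,
    "BLUEX (No Images)": 47.98,
    "OAB Exams": 38.82,
    "Assin2 RTE": 90.63,
    "Assin2 STS": 75.81,
    "FaQuAD NLI": 57.79,
    "HateBR Binary": 77.24,
    "PT Hate Speech Binary": 68.50,
    "tweetSentBR": 63.0,
}

# Unweighted mean of the nine tasks, rounded like the table
average = round(mean(scores.values()), 2)
print(average)  # 64.27
```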

Comments

Any ideas, help, or bug reports are always welcome.

email: rhaymisoncristian@gmail.com